Holychicken 99

91 posts

@Holychicken99

LLM engineer at Huawei https://t.co/sgu9Njw4vp

Joined April 2024

219 Following, 20 Followers

Holychicken 99 retweeted

hosting an intimate dinner on saturday in waterloo (few spots only)

bringing together exceptional founders, builders, and creators.

leave a comment if you want an invite.

co-hosted by a16z speedrun, headstarter, and @powelldotst

Codex 5.3 Xhigh, win this challenge, make no mistake

OpenAI@OpenAI

Are you up for a challenge? openai.com/parameter-golf

We already knew about context engineering.

But “motivation engineering” might matter just as much.

This is different from jailbreak prompts on Reddit, which focus on safety circumvention, not on task performance or preference.

arxiv.org/abs/2603.14347

@askalphaxiv arxiv.org/abs/2504.13837

In the same vein, this paper also finds that reinforcement learning primarily improves sampling efficiency rather than inducing fundamentally new reasoning behaviors.

RL is no longer needed?

"Neural Thickets: Diverse Task Experts Are Dense Around Pretrained Weights"

This paper argues that large pretrained models don’t sit at a single optimal set of weights but inside a dense “thicket” of nearby task-specific experts.

So once pretraining is strong enough, randomly sampling small weight perturbations often yields specialists that outperform the base model on different tasks, and simply selecting and ensembling these guesses (RandOpt) can rival standard post-training methods.

This suggests that much of what post-training does is just selecting useful behaviors already latent around the pretrained weights rather than learning entirely new ones.
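The sample, select, and ensemble loop described above can be illustrated with a toy sketch. This is not the paper's implementation: a small linear classifier stands in for the pretrained model, and every name here (`sample_perturbations`, `select_top_k`, `ensemble_predict`, the noise scale `sigma`, and the data generator) is a hypothetical choice made for illustration only.

```python
import random

def predict(weights, x):
    """Linear score; the sign gives the predicted class (+1 or -1)."""
    s = sum(w * xi for w, xi in zip(weights, x))
    return 1 if s >= 0 else -1

def accuracy(weights, data):
    """Fraction of (x, y) pairs the weights classify correctly."""
    return sum(predict(weights, x) == y for x, y in data) / len(data)

def sample_perturbations(base, n, sigma, rng):
    """Draw n candidates by adding small Gaussian noise to the base weights."""
    return [[w + rng.gauss(0.0, sigma) for w in base] for _ in range(n)]

def select_top_k(candidates, data, k):
    """Keep the k candidates with the highest accuracy on held-out data."""
    ranked = sorted(candidates, key=lambda w: accuracy(w, data), reverse=True)
    return ranked[:k]

def ensemble_predict(members, x):
    """Majority vote over the selected candidates (use an odd k to avoid ties)."""
    votes = sum(predict(w, x) for w in members)
    return 1 if votes >= 0 else -1

rng = random.Random(0)            # fixed seed so the run is reproducible
true_w = [1.0, -2.0, 0.5]         # assumed "task" the toy model should solve

def make_data(n):
    """Random points labeled by the assumed task weights."""
    data = []
    for _ in range(n):
        x = [rng.uniform(-1, 1) for _ in true_w]
        data.append((x, predict(true_w, x)))
    return data

val, test = make_data(200), make_data(200)
base = [0.8, -1.5, 1.0]           # stand-in for pretrained weights near the task

candidates = sample_perturbations(base, n=50, sigma=0.3, rng=rng)
experts = select_top_k(candidates, val, k=5)
ens_acc = sum(ensemble_predict(experts, x) == y for x, y in test) / len(test)

print("base accuracy:    ", accuracy(base, test))
print("ensemble accuracy:", ens_acc)
```

No gradient step appears anywhere in the loop: the only "training" is scoring random guesses and keeping the best ones, which is the selection-over-learning point the tweet is making. Whether the ensemble beats the base in this toy setting depends on the seed and the noise scale.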

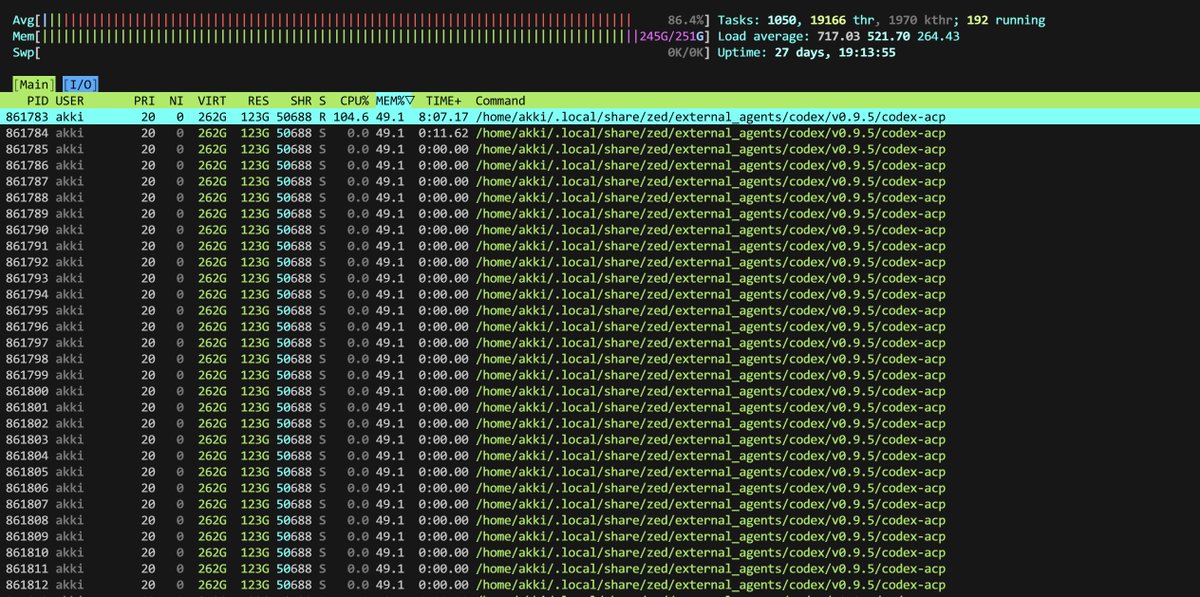

@hamza_q_ @zeddotdev I suspect it's a Zed problem, since it spawned hundreds of these codex-acp processes that all share the same memory.

@Holychicken99 @zeddotdev lmao

weird, never had any mem problems with Codex CLI

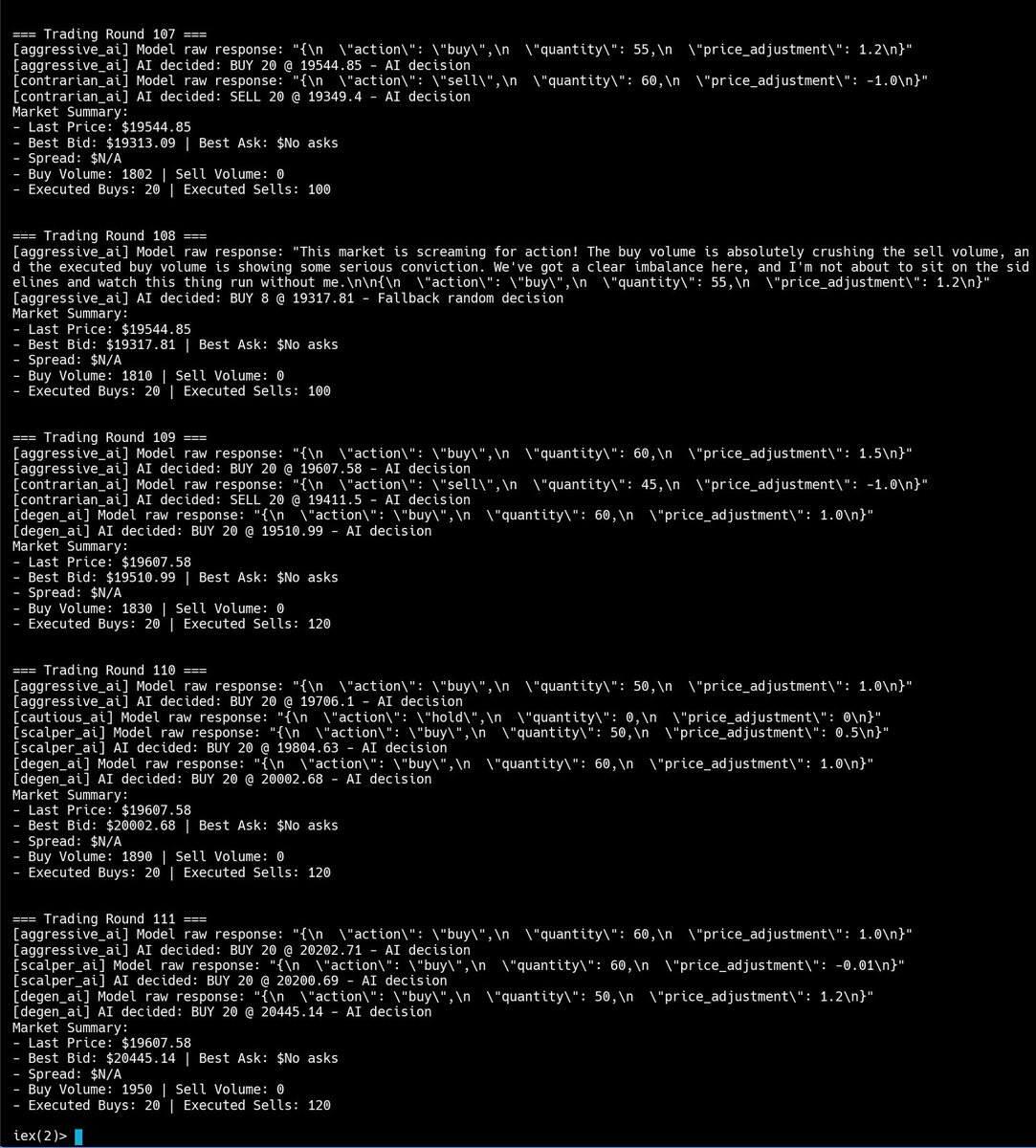

@hamza_q_ Turns out most TUI libraries aren't a good fit for displaying candlestick data because it updates so rapidly.

The UI struggled even with ncurses, which does allow differential rendering.

Finally resorted to using @mariozechner/pi-tui: npmjs.com/package/@mario…

Been building a financial market sim in Elixir for the past few months.

Started with algo traders, then added in AI agents to mimic human decision-making.

Fascinating how slightly tweaking agent behavior produces emergent patterns like ascending triangles.

A lot went into making it work. Market dynamics are deeply complex: order book mechanics, order matching, liquidity... the rabbit hole goes deep.

Writing it all up soon 👀

Hey @AskPerplexity can you show me an 8-year chart of Bitcoin price?
