Johnny Shirt

75 posts

@JohnnyShirt_

e-com, e-sports & stocks | #DYOR: 4th IR navigator | Focus AI Early Adopters $NVDA $PLTR $TSLA $U $GOOGL $AMD $META $APP $ESLX $WRBY

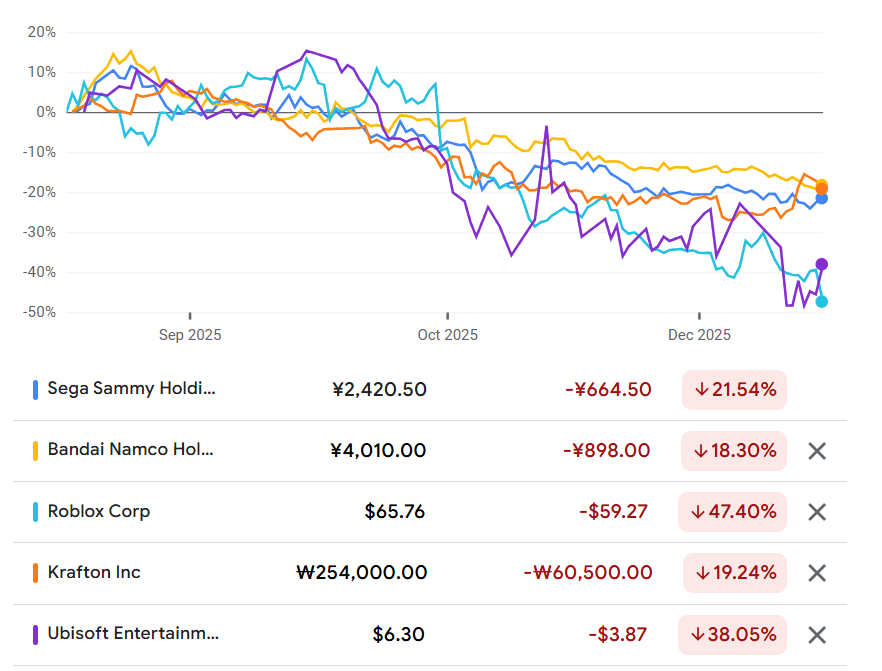

Buy the dip; world models are not the future of gaming. Games will use AI more and more for assets and coding, but they won't use world models. Generating frames from a sliding window of past frames does not produce a fun and complete experience. World models will remain nice tech demos.

Folks. Can I explain something about world models? Seems like today might be a good day for that.
Advances in large-scale “world models”, whether developed by partners like Google or others, materially expand the frontier of interactive content creation. These models can generate high-quality, interactive, video-like experiences from natural language or minimal input. Today, they are primarily editable through prompting, which limits the level of determinism and precision required for production-grade game mechanics. As a result, their outputs remain probabilistic and non-deterministic, making them unsuitable on their own for games that require consistent, repeatable player experiences.
Rather than viewing this as a risk, we see it as a powerful accelerator. Video-based generation is exactly the type of input our Agentic AI workflows are designed to leverage, translating rich visual output into initial game scenes that can then be refined with the deterministic systems Unity developers use today. Our agents already generate high-quality scenes from static video. Interactive, camera-controllable video from world models would further enhance this pipeline and materially improve the fidelity and speed of early-stage content creation. We believe this represents a meaningful step forward for AI-driven development across the industry.
Unity’s role is to operationalize these advances. Outputs from world models are ingested into Unity’s real-time engine, where they are converted into structured, deterministic, and fully controllable simulations. Within Unity, creators define physics, gameplay logic, networking, monetization, and live-operations systems to ensure consistent behavior across devices and sessions. This combination enables developers to move faster from concept to scalable product: AI accelerates environment and asset generation, while Unity provides the execution layer that transforms generated content into reliable, monetizable experiences.
As a result, world models expand content supply and reduce development friction, while Unity remains the system of record for runtime, distribution, and long-term operations. This dynamic broadens Unity’s addressable market and reinforces its central role in the interactive ecosystem.

$GOOGL Genie 3 points toward a world where AI systems run continuously in real time. AI demand is about to shift from bursty queries to always-on workloads, which raises the baseline for $NVDA + $AMD compute plus $MU + $SNDK memory. $META is also likely to follow as interactive worlds become another surface.

Our short film Dear Upstairs Neighbors is previewing at @sundancefest. 🎬 It’s a story about noisy neighbors, but behind the scenes, it’s about solving a huge challenge in generative AI: control. Developed by Pixar alumni, an Academy Award winner, researchers, and engineers, here’s how it came together. 🎨

Porsche’s new holiday commercial 🚙 • Made by Parallel Studio • Blends hand-drawn art with 3D animation • Uses 'absolutely no AI'