Dr.sats

160 posts

@LetmefuckXXX

Vegetarian | Life equality|Minimalist|#BTC holder since 2013

Joined July 2019

4K Following, 725 Followers

@congyuecy_soft Neural rendering is interesting, but without a physically based foundation you lose truth, and that matters when you actually need truth, not just plausibility. Path tracing defines reality.

DLSS 5 increasingly defines perception and maybe, soon, reality itself.

Dr.sats retweeted

Holy crap, an entire music video using Gaussian splats!!! Now you've got the ability to relight splats using @OTOY Octane Render… This is the future!

christopher rutledge@tokyomegaplex

excited to share the Helicopter$ music video for A$AP Rocky, directed by dan streit and produced by me with a dream team of cg artists at grin machine :^)

@GabRoXR @playcanvas @threejs Gaussian Splats are likely to become a visual substrate for world models rather than just a rendering format. If Gaussian Splats become the output of world models, then Octane @OTOY becomes the tool that tells us whether those worlds are physically real.

What is your prediction for 2026 when it comes to #GaussianSplatting?

In my opinion, we will see more 3rd party platforms and tools supporting Splats to create simple, interactive, walkable experiences, while web-based engines like @playcanvas and @threejs will far surpass what can be done in @unity.

About the video: This demo from Yiwei Chiang is more than just another splat viewer in #VR.

The simple interactions and mechanics give us a glimpse of how this new #3D format is quickly evolving and turning into more sophisticated experiences.

We are still at the beginning but being able to create an environment from just an image is unlocking so many opportunities for storytellers.

Dr.sats retweeted

@DS2LightingMod One day realtime unbiased rendering will become standard and it will bring freedom for both stylized and realistic rendering we can only dream of.

Dr.sats retweeted

World Labs x Octane Render

OTOY@OTOY

OctaneStudio+ 2026 Black Friday subscribers get access to Marble by @theworldlabs – enabling you to create instant, production-ready worlds and relight them in Octane – with cinematic GI, shadows, and reflections. Create worlds with Marble and make them cinematic with Octane! youtu.be/PqtRjn6PIyI

Dr.sats retweeted

After years of research & experiments, OctaneRender 2027 is achieving real-time path tracing while retaining its quality and spectral nature. I am extremely proud of my team all across the globe for this achievement!🔥 #Octane

Dr.sats retweeted

OTOY reveals new Octane 2027 Roadmap and Beyond @OTOY forum with:

- Real-time Neural Viewport rendering

- Render to Gaussian Splat

- Anime and Sketch Rendering

- Generative PBR Materials

- AI Light 2.0 for many light sampling

- Python API

and much more

render.otoy.com/forum/viewtopi…

Dr.sats retweeted

The new @theworldlabs Marble world-builder just dropped - and its Gaussian Splat exports fit perfectly inside @otoy Octane 2026.

With practically no effort, you can drop the splats directly into Octane and they become fully functional 3D elements. Relight them, add geometry and shadows, use the collider mesh for dynamic control, or prep your own 360° and VR scenes - and so much more.

Try Octane 2026 today and unlock the power of Gaussian splat workflows.

Dr.sats retweeted

"Suddenly @rendernetwork shines from a new angle.

It's capable of rendering movie scenes in hours, not weeks.

It's capable of rendering complex simulation now […]"

...

"[…] it's the most convenient #render farm you will ever find - I guarantee it."

_

$RENDER

Dr.sats retweeted

Beta sign up for OTOY.AI is now live - I just presented major launch features and 6 month roadmap on stage at #BCON25 - we now have 700+ models (and more added daily)... open weight models that will run on consumer GPUs are being adapted for low cost + high scale on @rendernetwork #OTOYAI

Dr.sats retweeted

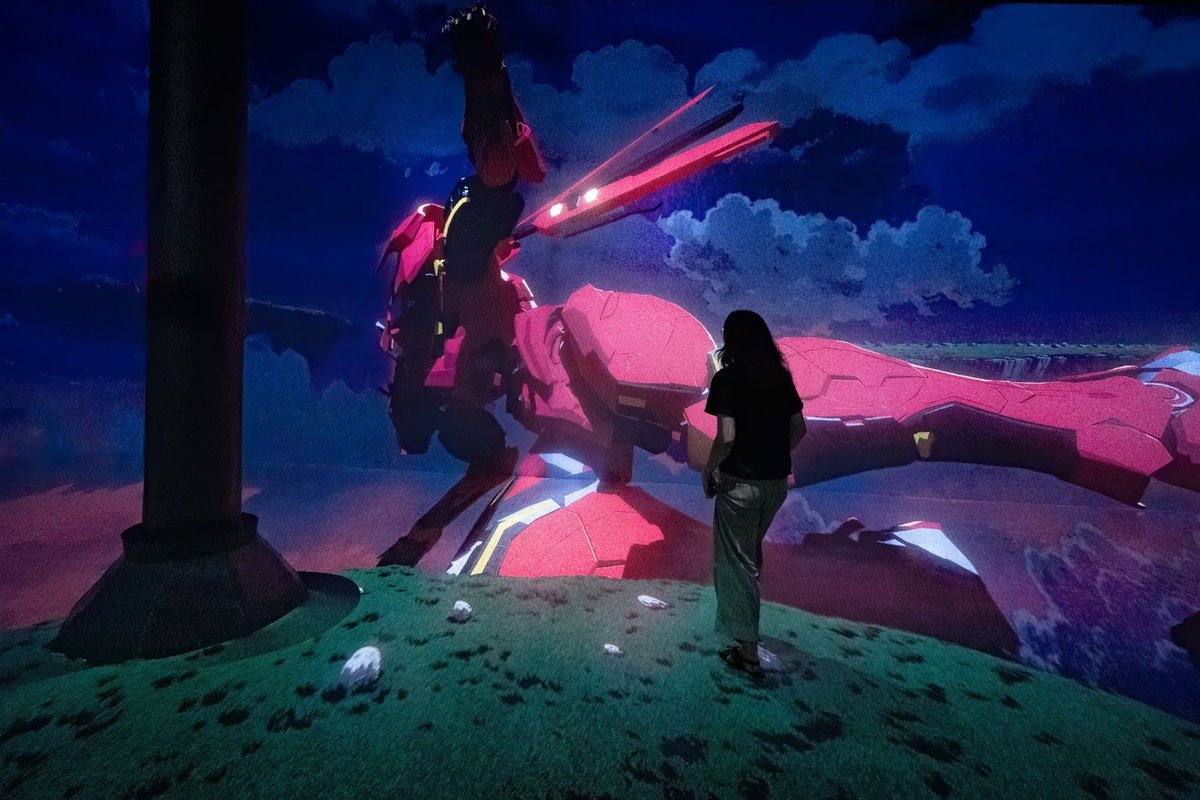

For SUBMERGE: Beyond the Render, Kuciara and Yang adapt their acclaimed @shibuyafilm anime White Rabbit into a spatial installation, rendering key scenes at 18K resolution to push the boundaries of immersive animation.

The result is a hyperreal anime world brought to life across walls of light, blending emotion, memory, and speculative storytelling in a way that could only exist in an immersive format.

Dr.sats retweeted

“Combining the strengths of physical & digital art can give viewers entirely new ideas & confrontations…”

- Gavin Shapiro

In SUBMERGE: Beyond the Render @artechouse NYC @shapiro500 adapts his iconic style featuring delightful looping penguins and flamingos into an 18K immersive experience powered by @rendernetwork.

@stephenajason Amazing work on Pusa V1.0!

I’m curious — is it possible to train the model on a consumer GPU (like an RTX 3090 or 4090)?

🚀 Pusa V1.0 Release

Can you believe training a SOTA level Image-to-Video model with only $500 training cost? No way?

But yes, we made it! And we achieved much more beyond that.

We’re thrilled to release Pusa V1.0—a paradigm shift in video generation, redefining video diffusion efficiency. With our novel Vectorized Timestep Adaptation (VTA) based on our prior FVDM work:

🔥 Key Features:

✅ Unprecedented Efficiency:

- Surpasses Wan-I2V-14B with ≤ 1/200 of the training cost ($500 vs. ≥ $100,000)

- Trained on a dataset ≤ 1/2500 of the size (4K vs. ≥ 10M samples)

- Achieves a VBench-I2V score of 87.32% with 10 inference steps (vs. 86.86% for Wan-I2V-14B with 50 steps)

✅ Comprehensive Multi-task Support:

VTA fully preserves Text-to-Video from the base model Wan-T2V, and after finetuning, Pusa V1.0 extends to the following all in a zero-shot way (no task-specific training):

- Image-to-Video

- Start-End Frames

- Video completion/transitions

- Video Extension

- And more...

✅ Complete Open-Source Release:

- Full codebase and training/inference scripts

- Model weights and dataset for Pusa V1.0

- Paper/ Tech Report with Detailed and Comprehensive Methodology

💡 Scientific breakthrough:

VTA enables granular temporal control via frame-level noise adaptation—no task-specific training needed.

🌍 Fully open-sourced:

• Codebase: github.com/Yaofang-Liu/Pu…

• Project Page: yaofang-liu.github.io/Pusa_Web/

• Technical report: github.com/Yaofang-Liu/Pu…

• Model weights: huggingface.co/RaphaelLiu/Pus…

• Dataset: huggingface.co/datasets/Rapha…

[1/n]

Dr.sats retweeted

I've been trying @rendernetwork with Redshift lately and I have to say it's by far the smoothest render farm experience I've ever had. It can be extremely cheap too if you're not in a rush & even the priority render doesn't cost that much, I'm so so impressed.

Dr.sats retweeted

Congrats to Claudio Miranda (ASC) on the release of F1!

His cutting-edge cinematography takes viewers inside F1 cars at 200+ mph.

Beginning with Top Gun: Maverick, Octane X has helped his previz workflow push the boundary of visual immersion.

Learn more: fxguide.com/fxfeatured/app…

Dr.sats retweeted