Rob Bailey

33.8K posts

@RMB

AI-Native & Agentic Founder/Operator (Working On Something New)

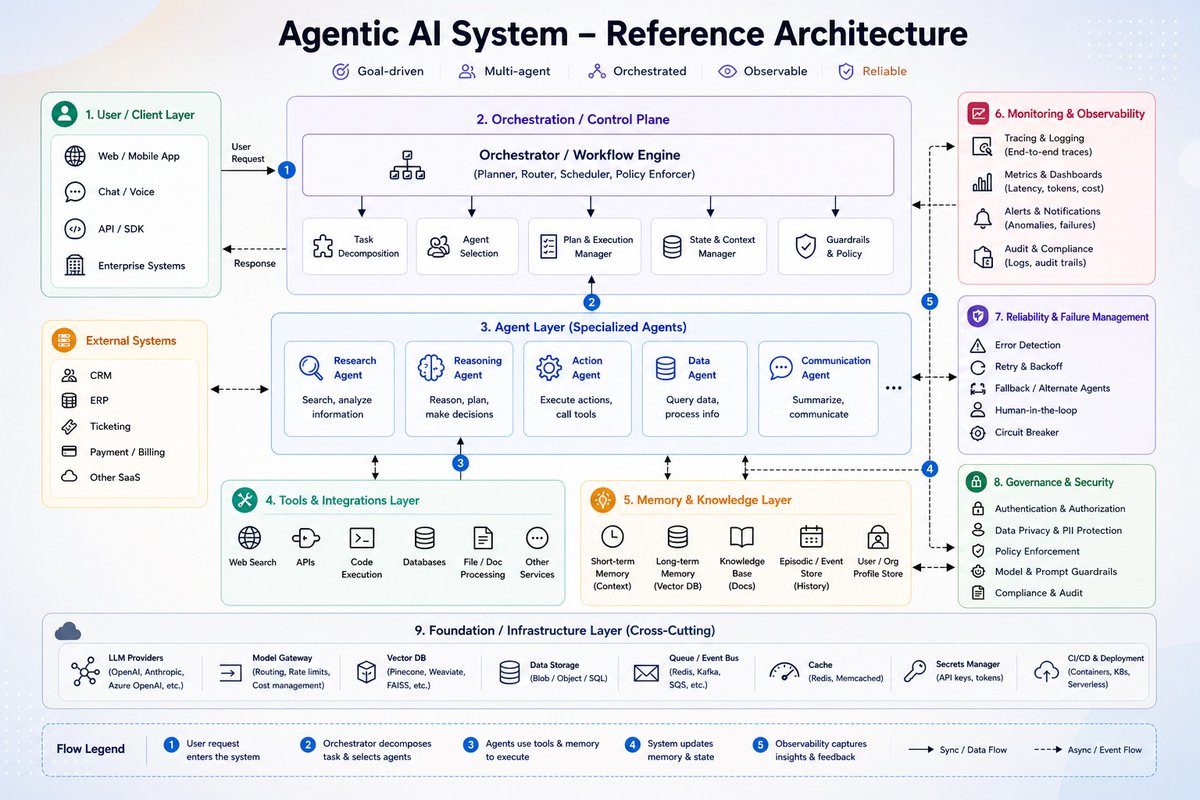

AI PM interviews are now testing "AI product sense." So I recorded a mock to demystify what it is with Ankit Virmani, who just nabbed AI PM offers at Uber, Atlassian, and Cisco.

6:59 3 tiers running it

12:04 Live mock

52:30 9/10 breakdown

Token spend will be on your next performance review. Maybe not next quarter. But soon. Boards and CEOs are already asking.

Everyone bought Claude Code, Cursor, and a dozen other AI tools. Nobody can tell you what came out of it. Adoption isn't proficiency, and most companies have zero idea who's actually getting value from any of it.

Deel Engage closes that gap. We integrate with Anthropic and every major LLM. AI usage lands next to KPIs, feedback, and competencies in your reviews module. One view of AI maturity across every location, time zone, and employment type. No manual stitching.

What we measure: token spend across every major LLM provider. Where direct data isn't available, we approximate from usage patterns. One number, consistent across every tool and team.

Is it the whole story? No. It's gameable. Anyone can burn tokens to look busy. But it's a real signal in a space where most companies have zero. And as Anthropic and the other model providers ship deeper analytics, Engage absorbs them. Sharper signal, faster than you could build it.

Your next review cycle is the test. Walk in with data, or walk in guessing. Deel Engage is the difference!

Full article below