(╥﹏╥) | samuu | α|Δ|ι

3.6K posts

@Samunder12or8

18 | Coder | Tech paglu | AI ML | Web 3 | Sketching newbie | Music | affiliated with okara ai: https://t.co/Xkgzwa9Gep

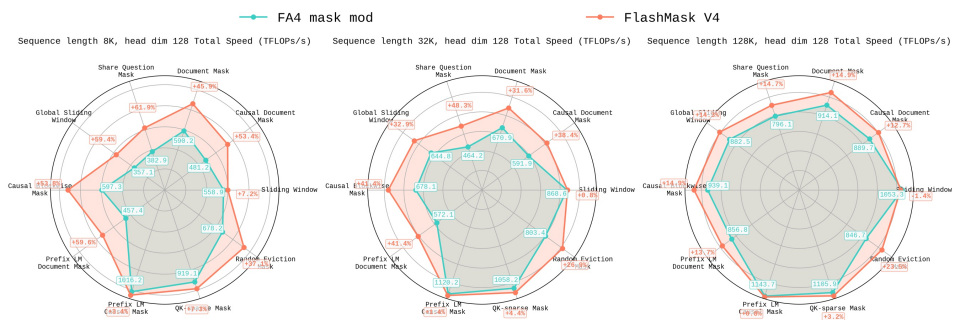

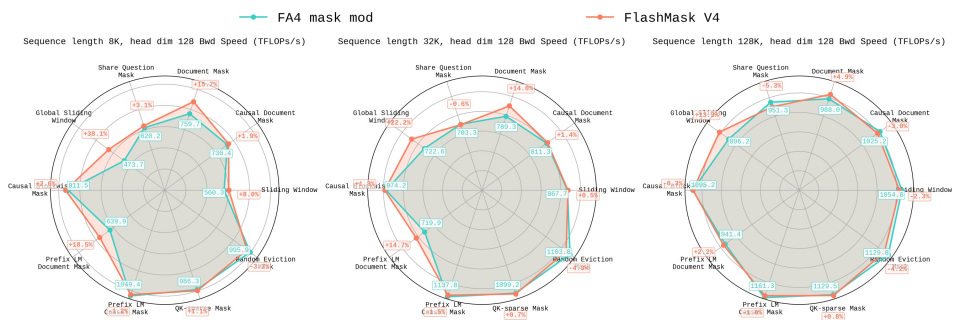

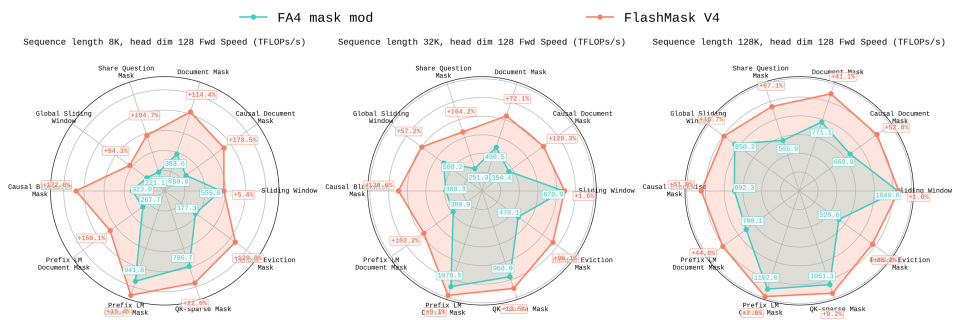

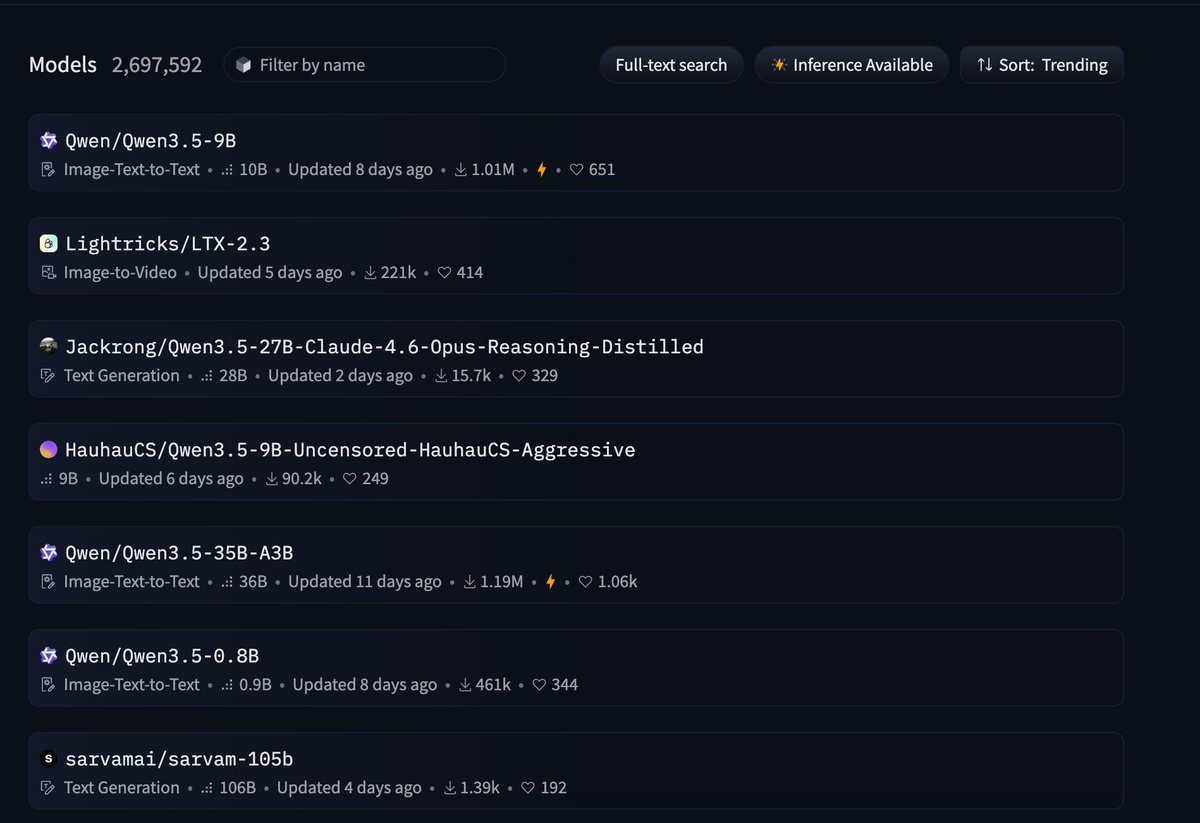

🧵 1/4 Still waiting for DeepSeek-V4? We (@Zai_org) made DSA 1.8× faster with minimal code change — and it's ready to deliver real inference gains on GLM-5. IndexCache removes 50% of indexer computations in DeepSeek Sparse Attention with virtually zero quality loss. On GLM-5 (744B), we get ~1.2× E2E speedup while matching the original across both long-context and reasoning tasks. On our experimental-sized 30B model, removing 75% of indexers gives 1.82× prefill and 1.48× decode speedup at 200K context. How? 🧵👇 #DeepSeek #GLM5 #Deepseekv4 #LLM #Inference #Efficiency #LongContext #MLSys #SparseAttention

Running Qwen3.5-9B Unsloth on a 3-year-old PC at ~15 tps, and it perfectly handles a suite of 10 tools. I can’t say the same for Phi-4 or Gemma3-12B.