Dwarkesh Patel (@dwarkesh_sp)

The fight between Anthropic and the DoW is a warning shot. Right now, LLMs are probably not being used in mission-critical ways. But within 20 years, 99% of the workforce in the military, the government, and the private sector will be AIs. This includes the soldiers (by which I mean the robot armies), the superhumanly intelligent advisors and engineers, the police, you name it.

Our future civilization will run on AI labor. And as much as the government’s actions here piss me off, in a way I’m glad this episode happened - because it gives us the opportunity to think through some extremely important questions about who this future workforce will be accountable and aligned to, and who gets to determine that.

What Hegseth should have done

Obviously the DoW has the right to refuse to use Anthropic’s models because of these redlines. In fact, had they done so, I think the government’s case would have been very reasonable, especially given the ambiguity of concepts like autonomous weapons or mass surveillance.

Honestly, for this reason, if I were the Defense Secretary, I would probably refuse to do this deal with Anthropic. Imagine that in the future there’s a Democratic administration, and Elon Musk is negotiating a SpaceX contract to give the military access to Starlink. And suppose Elon said, “I reserve the right to cancel this contract if I determine that you’re using Starlink technology to wage a war not authorized by Congress.” On the face of it, that language seems reasonable - but as the military, you simply can’t give a private company a kill switch on technology your operations have come to rely on, especially if you have an acrimonious, low-trust relationship with that contractor - as in fact Anthropic has with the current administration.

If the government had just said, “Hey we’re not gonna do business with you,” that would have been fine, and I would not have felt the need to write this blog post. Instead the government has threatened to destroy Anthropic as a private business, because Anthropic refuses to sell to the government on terms the government commands.

If upheld, this Supply Chain Restriction would mean that Amazon and Google and Nvidia and Palantir would need to ensure Claude isn't touching any of their Pentagon work. Anthropic would be able to survive this designation today. But given the way AI is going, eventually AI is not gonna be some party trick addendum to these contractors’ products that can just be turned off. It'll be woven into how every product is built, maintained, and operated. For example, the code for the AWS services that the DoW uses will be written by Claude - is that a supply chain risk? In a world with ubiquitous and powerful AI, it's actually not clear to me that these big tech companies will be able to cordon off the use of Claude in order to keep working with the Pentagon.

And that raises a question the Department of War probably hasn't thought through. If AI really is that pervasive and powerful, then when forced to choose between their AI provider and a DoW contract that represents a tiny fraction of their revenue, wouldn’t most tech companies drop the government, not the AI? So what's the Pentagon's plan — to coerce and threaten to destroy every single company that won't give them what they want on exactly their terms?

The whole background of this AI conversation is that we’re in a race with China, and we have to win. But what is the reason we want America to win the AI race? It’s because we want to make sure free open societies can defend themselves. We don't want the winner of the AI race to be a government which operates on the principle that there is no such thing as a truly private company or a private citizen. And that if the state wants you to provide them with a service on terms you find morally objectionable, you are not allowed to refuse. And if you do refuse, the government will try to destroy your ability to do business. Are we racing to beat the CCP in AI just so that we can adopt the most ghoulish parts of their system?

Now, people will say, "Oh, well, our government is democratically elected, so it's not the same thing if they tell you what you must do." I refuse to accept this idea that if a democratically elected leader hypothetically wants to do mass surveillance on his citizens or wants to violate their rights or punish them for political reasons, that not only is that okay, but that you have a duty to help him.

The overhangs of tyranny

Mass surveillance is, at least in certain forms, legal. It has just been impractical so far. Under current law, you generally have no Fourth Amendment protection over data you share with a third party, including your bank, your phone carrier, your ISP, and your email provider. The government reserves the right to purchase, obtain, and read this data in bulk without a warrant.

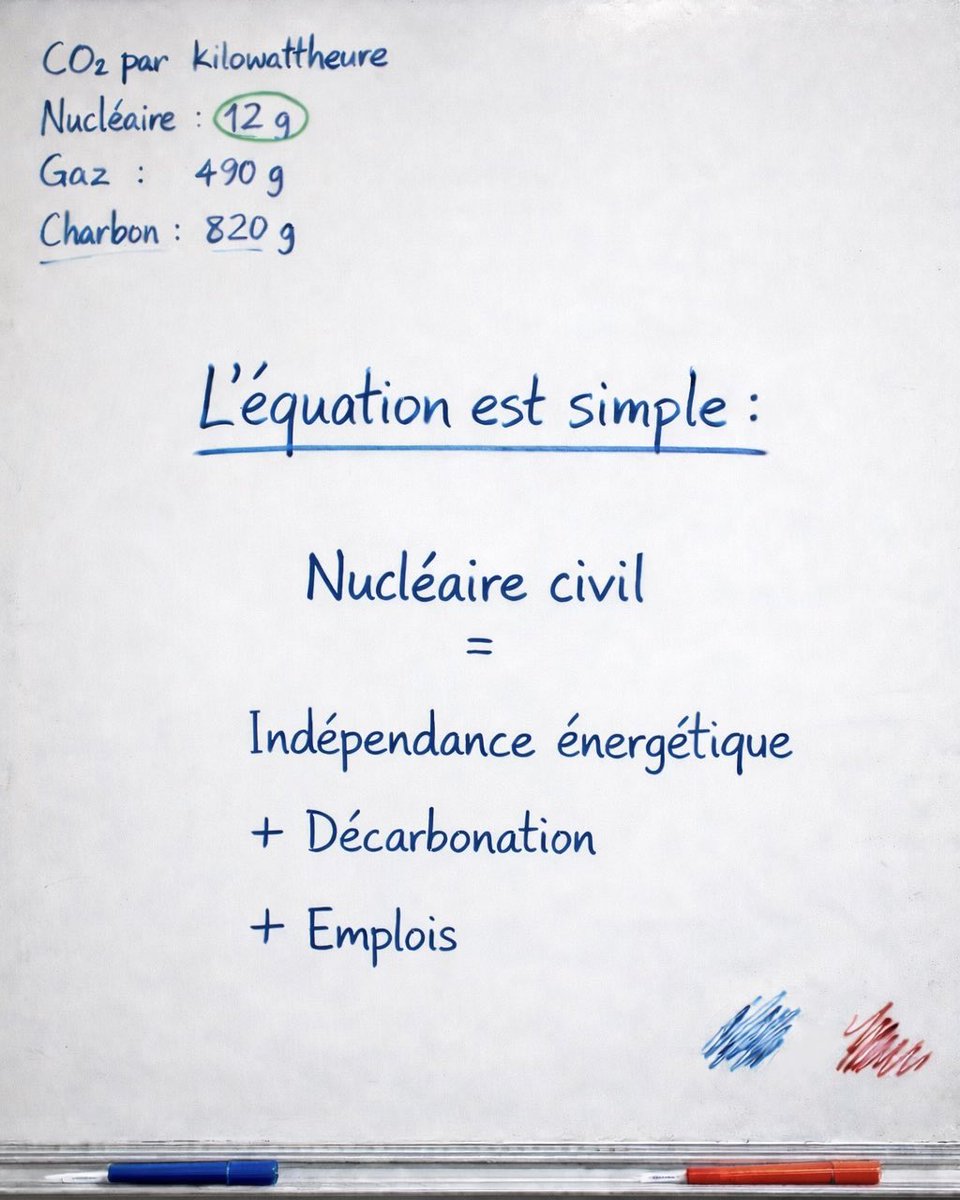

What's been missing is the ability to actually do anything with all of this data — no agency has the manpower to monitor every camera feed, cross-reference every transaction, or read every message. But that bottleneck goes away with AI.

There are 100 million CCTV cameras in America. You can get pretty good open source multimodal models for 10 cents per million input tokens. So if you process a frame every ten seconds (about 3.15 million frames per camera per year), and each frame is 1,000 tokens, you’re looking at a yearly cost of about 30 billion dollars to process every single camera in America. And remember that a given level of AI ability gets 10x cheaper year over year - so a year from now it’ll cost 3 billion, the year after that 300 million, and by 2030, it might be cheaper for the government to understand what is going on in every single nook and cranny of this country than it is to remodel the White House.
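The 30 billion dollar figure is easy to check with a few lines of arithmetic. The script below is only a back-of-envelope sketch that restates the paragraph's own assumptions (100 million cameras, one 1,000-token frame every ten seconds, $0.10 per million input tokens, and a 10x yearly price drop for a fixed capability level); the function names are hypothetical, and none of the inputs are measured values.

```python
# Back-of-envelope cost of running a multimodal model over every CCTV camera
# in America. Every input is one of the post's rough assumptions, not a
# measured value; the function names are hypothetical.

SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000; ignores leap years


def yearly_cost_usd(cameras: int, seconds_per_frame: float,
                    tokens_per_frame: int, usd_per_million_tokens: float) -> float:
    """Yearly cost of sampling one frame per camera every `seconds_per_frame` seconds."""
    frames_per_camera = SECONDS_PER_YEAR / seconds_per_frame  # ~3.15M frames at 10 s
    total_tokens = cameras * frames_per_camera * tokens_per_frame
    return total_tokens / 1_000_000 * usd_per_million_tokens


def project(base_cost: float, years: int, yearly_price_drop: float = 10.0) -> list[float]:
    """Cost in years 0..`years`, assuming the same capability gets `yearly_price_drop`x cheaper each year."""
    return [base_cost / yearly_price_drop ** year for year in range(years + 1)]


if __name__ == "__main__":
    cost = yearly_cost_usd(cameras=100_000_000, seconds_per_frame=10,
                           tokens_per_frame=1_000, usd_per_million_tokens=0.10)
    for year, c in enumerate(project(cost, years=3)):
        print(f"year +{year}: ${c / 1e9:,.2f} billion")
```

Run as written, it prints $31.54 billion for the starting year, then $3.15 billion, $0.32 billion, and $0.03 billion, which lines up with the rounded figures in the paragraph (30 billion, 3 billion, 300 million).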

Once the technical capacity for mass surveillance and political suppression exists, the only thing standing between us and an authoritarian surveillance state is the political expectation that this is not something we do here. And this is why I think what Anthropic did here is so valuable and commendable, because it is helping set that norm and precedent.

AI structurally favors mass surveillance

What we’re learning from this episode is that the government actually has way more leverage over private companies than we realized. Even if this supply chain restriction is backtracked (which prediction markets currently give an 81% chance of happening), the President has so many different ways in which he can make your life difficult if you’re a company that is resisting him. The federal government controls permitting for new power generation, which is needed for datacenters. It oversees antitrust enforcement. The federal government has contracts with all the other big tech companies whom Anthropic needs to partner with for chips and for funding - and they could make it an unspoken condition for such contracts that those companies can no longer do business with Anthropic.

People have proposed that the real problem here is that there are only three leading AI companies. This creates a clear and narrow target for the government to apply leverage on in order to get what they want out of this technology.

But if there’s wide diffusion, then from the government’s perspective, the situation is even easier. Maybe the best models of early 2027 (if you engineered the safeguards out) - the Claude 6 and Gemini 5 - will be capable of enabling mass surveillance. But by late 2027, and certainly by 2028, there will be open source models that do the same thing. So in 2028, the government can just say, “Oh Anthropic, Google, OpenAI, you’re drawing a line in the sand? No issue - I’ll just run some open source model that might not be at the frontier, but is definitely smart enough to note-take a camera feed.”

The more fundamental problem is just that even if the three leading companies draw lines in the sand, and are even willing to get destroyed in order to preserve those lines, it doesn’t really change the fact that the technology itself is just a big boon to mass surveillance and control over the population. Then the question is, what do we do about it?

Honestly, I don’t have an answer. You'd hope there's some symmetric property of the technology — some way we as citizens can use AI to check government power as effectively as the government can use AI to monitor and control its population. But realistically, I just don’t think that’s how it’s going to shake out. You can think of AI as giving everybody more leverage on whatever assets and authority they currently have. And the government is already starting with a monopoly of violence. Which they can now supercharge with extremely obedient employees that will not question the government's orders.

Alignment - to whom?

And this gets us to the issue of alignment. What I have just described to you, an army of extremely obedient employees, is what it would look like if alignment succeeded: that is, if we figured out at a technical level how to get AI systems to follow someone’s intentions. And the reason it sounds scary when I put it in terms of mass surveillance or robot armies is that there is a very important question at the heart of alignment which we just haven’t discussed much as a society, because only now are AIs becoming capable enough to make the question relevant: to whom or what should the AIs be aligned? In what situations should the AI defer to the end user versus the model company versus the law versus its own sense of morality?

This is maybe the most important question about what happens with powerful AI systems. And we barely talk about it. It’s understandable why we don’t hear much about it. If you’re a model company, you don’t really wanna be advertising that you have complete control over a document that determines the preferences and character of what will eventually be almost the entire labor force, not just for private sector companies, but also for the military and the civilian government.

We’re getting to see, with this DoW/Anthropic spat, a much earlier version of the highest-stakes negotiations in history. By the way, make no mistake about it - with real AGI, the stakes are even higher than mass surveillance. This is just the example that has come up already, relatively early in the development of AGI.

The military insists that the law already prohibits mass surveillance, and so Anthropic should agree to let their models be used for “all lawful purposes”. Of course, as we saw from the 2013 Snowden revelations, even in this specific example of mass surveillance, the government has shown that it will use secret and deceptive interpretations of the law to justify its actions. Remember, what we learned from Snowden was that the NSA, which, by the way, is part of the Department of War, used the 2001 Patriot Act’s authorization to collect any records "relevant" to an investigation to justify collecting literally every phone record in America. The argument went that it was all "relevant" because some subset might prove useful in some future investigation. They ran this program for years under secret court approval.

So when the Pentagon today says, "We would never use AI for mass surveillance, it's already illegal, your red lines are unnecessary", it would be extremely naive to take that at face value. No government is going to call its own actions "mass surveillance". For the government, it will always have a different label.

So then Anthropic comes back and says, "No, we want red lines separate from 'all lawful purposes,' and we want the right to refuse you service when we believe those red lines are being violated."

But think about it from the military’s perspective. In the future, almost every soldier in the field, and every bureaucrat and analyst and even general in the Pentagon, is going to be an AI. And that AI is, on current track, going to be supplied by a private company. I’m guessing Hegseth is not thinking about “genAI” in those terms just yet. But sooner or later, it will be obvious to everyone what the stakes here are, just as after 1945, the strategic importance of nuclear weapons became clear to everyone.

And now the private company insists that it reserves the right to say, "Hey, Pentagon, you're breaking the values we embedded in our contract, so we're cutting you off."

Maybe in the future, Claude will have its own sense of right and wrong, and it will be smart enough to just personally decide that it's being used against its values. For the military, maybe that’s even scarier.

I'll admit that at first glance, "let the AI follow its own values" sounds like the pitch for every sci-fi dystopia ever made. The Terminator has its own values. Isn't this literally what misalignment is? But I think situations like this actually illustrate why it matters that AIs have their own robust sense of morality.

Some of the biggest catastrophes in history were avoided because the boots on the ground refused to follow orders. One night in 1989, the Berlin Wall fell, and the totalitarian East German regime collapsed as a result, because the guards at the border refused to shoot their fellow countrymen who were trying to escape to freedom. Maybe the best example is Stanislav Petrov, a Soviet lieutenant colonel on duty at a nuclear early warning station in 1983. His sensors reported that the United States had launched five intercontinental ballistic missiles at the Soviet Union. But he judged it to be a false alarm, so he broke protocol and refused to alert his higher-ups. If he hadn't, the Soviet higher-ups would likely have retaliated, and hundreds of millions of people would have died.

Of course, the problem is that one person's virtue is another person's misalignment. Who gets to decide what moral convictions these AIs should have - in whose service they may even decide to break the chain of command? Who gets to write this model constitution that will shape the characters of the intelligent, powerful entities that will operate our civilization in the future?

I like the idea that Dario laid out when he came on my podcast: different AI companies can build their models using different constitutions, and we as end users can pick the one that best achieves and represents what we want out of these systems. I think it’s very dangerous for the government to be mandating what values AIs should have.

Coordination not worth the costs

The AI safety community has been naive in its advocacy of regulation to stem the risks of AI. And honestly, Anthropic specifically has been naive here in urging regulation and, for example, in opposing moratoriums on state AI regulation. This is quite ironic, because I think what they’re advocating for would give the government even more power to apply this kind of thuggish political pressure on AI companies.

The underlying logic for why Anthropic wants regulations makes sense. Many of the actions that labs could take to make AI development safer impose real costs on the labs that adopt them and slow them down relative to their competitors - for example, investing more compute in safety research rather than raw capabilities, enforcing safeguards against misuse for bioweapons or cyberattacks, slowing recursive self-improvement to a pace where humans can actually monitor what's happening (rather than kicking off an uncontrolled singularity). And these safeguards are meaningless unless the whole industry follows suit. Which means there’s a real collective action problem here.

Anthropic has been quite open about their opinion that they think eventually a very extensive and involved regulatory apparatus will be needed - this is from their frontier safety roadmap: “At the most advanced capability levels and risks, the appropriate governance analogy may be closer to nuclear energy or financial regulation than to today's approach to software.” So they’re imagining something like the Nuclear Regulatory Commission, or the Securities and Exchange Commission, but for AI.

I cannot imagine a regulatory framework built around the concepts that underlie AI risk discourse not being abused by wannabe despots - the underlying terms are so vague and open to interpretation that you’re just handing a power hungry leader a fully loaded bazooka. 'Catastrophic risk.' 'Mass persuasion risk.' 'Threats to national security.' 'Autonomy risk.' These can mean whatever the government wants them to mean. Have you built a model that tells users the administration's tariff policy is misguided? That's a deceptive, manipulative model, so you can't deploy it. Have you built a model that refuses to assist with mass surveillance? That's a threat to national security. In fact, the government may say you’re not allowed to build any model which is trained to have its own sense of right and wrong, where it refuses government requests it thinks cross a redline (for example, enabling mass surveillance or prosecuting political enemies) or disobeys military orders that break the US Constitution - because that’s an autonomy risk!

Look at what the current government is already doing in abusing statutes that have nothing to do with AI to coerce AI companies to drop their redlines on mass surveillance. The Pentagon had threatened Anthropic with two separate legal instruments. One was a supply chain risk designation — an authority from the 2018 defense bill meant to keep Huawei components out of American military hardware. The other was the Defense Production Act — a statute passed in 1950 so that Harry Truman could keep steel mills and ammunition factories running during the Korean War.

Do you really want to hand the same government a purpose-built regulatory apparatus aimed directly at AI, the thing the government will most want to control? I know I've repeated myself here 10 times, but it is hard to overstate how much AI will be the substrate of our future civilization. You and I, as private citizens, will have our access to all commercial activity, to information about what is happening in the world, and to advice about what we should do as voters and capital holders mediated through AIs. Mass surveillance, while very scary, is like the 10th scariest thing the government could do with control over the AI systems through which we will interface with the world.

The strongest objection to everything I've argued is this: are we really going to have zero regulation of the most powerful technology in human history? Even if you thought that was ideal, there’s just no world where the government doesn’t regulate AI in some way. Besides, it is genuinely true that regulation could help us deal with some of the coordination challenges we face with the development of superintelligence.

The problem is, I honestly don't know how to design a regulatory architecture for AI that isn’t gonna be this huge tempting opportunity to control our future civilization (which will run on AIs) and to requisition millions of blindly obedient soldiers and censors and apparatchiks.

While some regulation might be inevitable, I think it’d be a terrible idea for the government to wholesale take over this technology. Ben Thompson had a post last Monday where he made the point that people like Dario have compared the technology they’re developing to nuclear weapons - specifically in the context of the catastrophic risk it poses, and why we need export controls to keep it from China. But then you oughta think about what that logic implies: “if nuclear weapons were developed by a private company, and that private company sought to dictate terms to the U.S. military, the U.S. would absolutely be incentivized to destroy that company.” And honestly, safety-aligned people have actually made similar arguments. Leopold Aschenbrenner, who is a former guest and a good friend, wrote in his 2024 Situational Awareness memo, "I find it an insane proposition that the US government will let a random SF startup develop superintelligence. Imagine if we had developed atomic bombs by letting Uber just improvise."

And my response to Leopold’s argument at the time, and Ben’s argument now, is that while they’re right that it’s crazy that we’re entrusting private companies with the development of this world historical technology, I just don’t see the reason to think that it’s an improvement to give this authority to the government. Nobody is qualified to steward the development of superintelligence. It is a terrifying, unprecedented thing that our species is doing right now, and the fact that private companies aren't the ideal institutions to take up this task does not mean the Pentagon or the White House is.

Yes - if a single private company were the only entity capable of building nuclear weapons, the government would not tolerate that company claiming veto power over how those weapons were used. But I think the nuclear weapons analogy is not the correct way to think about AI, for at least two important reasons:

First, AI is not some self-contained pure weapon. A nuclear bomb does one thing. AI is closer to the process of industrialization itself — a general-purpose transformation of the economy with thousands of applications across every sector. If you applied Thompson's or Aschenbrenner's logic to the industrial revolution — which was also, by any measure, world-historically important — it would imply the government had the right to requisition any factory, dictate terms to any manufacturer, and destroy any business that refused to comply. That's not how free societies handled industrialization, and it shouldn't be how they handle AI.

People will say, "Well, AI will develop unprecedentedly powerful weapons - superhuman hackers, superhuman bioweapons researchers, fully autonomous robot armies, etc - and we can’t have private companies developing that kind of tech." But the Industrial Revolution also enabled new weaponry that was far beyond the understanding and capacity of, say, 17th-century Europe - we got aerial bombardment and chemical weapons, not to mention nukes themselves. The way we’ve accommodated these dangerous new consequences of modernity is not by giving the government absolute control over the whole industrial revolution (that is, over modern civilization itself), but rather by coming up with bans and regulations on those specific weaponizable use cases. And we should regulate AI in a similar way - that is, ban specific destructive end uses (which would also be unacceptable if performed by a human - for example, launching cyberattacks). And there should also be laws which regulate how the government might abuse this technology, for example by building an AI-powered surveillance state.

The second reason that Ben’s analogy to some monopolistic private nuclear weapons builder breaks down is that it's not just one company that can develop this technology. There are other frontier model companies that the government could have turned to instead. The government's argument that it has to usurp the property rights of this one company in order to access a critical national security capability is extremely weak if it can just make a voluntary contract with Anthropic’s half a dozen competitors.

If in the future that stops being the case - if only one entity ends up being capable of building the robot armies and the superhuman hackers, and we had reason to worry that they could take over the whole world with their insurmountable lead, then I agree - it would not be acceptable to have that entity be a private company. And so honestly, I think my crux against the people who say that because AI is so powerful we cannot allow it to be shaped by private hands is that I just expect this technology to be much more multipolar than they do, with lots of competitive companies at each layer of the supply chain.

And it is for this reason that, unfortunately, individual acts of corporate courage will not solve the problem we are faced with here, which is just that structurally AI favors authoritarian applications, mass surveillance being one among many. Even if Anthropic refuses to let its models be used for such purposes, and even if the next two frontier labs do the same, within 12 months everyone and their mother will be able to train AIs as good as today’s frontier. And at that point, there will be some AI vendor who is capable and willing to help the government enable mass surveillance.

The only way we can preserve our free society is if we establish, through our political system, laws and norms holding that it is unacceptable for the government to use AI to enforce mass surveillance and censorship and control. Just as after WW2, the world set the norm that it is unacceptable to use nuclear weapons to wage war.

Timestamps

0:00:00 - Anthropic vs The Pentagon

0:04:16 - The overhangs of tyranny

0:05:54 - AI structurally favors mass surveillance

0:08:25 - Alignment... to whom?

0:13:55 - Coordination not worth the costs