Jackson-Yuan

@Yuanvis

Co-founder @ Rayvo | POV × AI × Robotics. Building the real-world data engine for embodied AI, from egocentric capture to scalable training.

Not just video. Training-ready 3D human behavior. From raw POV to structured 3D trajectories & actions. Built for embodied models.

Today I was catching up with a friend who’s spent decades in China tech and now advises several Chinese robotics companies, and both of us were confused by how far perceptions lag reality. In their travels this month, they met with a Japanese CEO of an industrial robotics company and also the head of humanoid robotics at a major global consulting firm. Both said that Chinese companies were just working on dancing robots, Unitree-style. There was very little awareness that, whether or not it’s the right long-term direction, humanoid robots are already in scaled production and operating on factory lines in China today. In fact, we plan to visit such lines in April and almost did last week but couldn’t make it work logistically.

Additionally, while most of our time was spent on new energy and manufacturing, we also visited a few robotics companies. They were uniformly bullish that robots will replace a meaningful amount of human labor, and in many factory settings, that’s already clearly happening. Some lines were truly mostly devoid of people.

The question that always comes up in these conversations, BTW, is hands. Dexterity. Tactile sensing. People from outside the industry tend to assume this is the hardest part. What I’ve now heard repeatedly across different companies is that, from a hardware perspective, this problem is largely solved. Highly sensitive robot hands already exist. They can handle delicate objects without deforming soft materials, and in some ways they’re already superhuman, able to detect tiny changes in texture, temperature, and weight. You see them everywhere in demo mode at trade shows and exhibitions. These systems aren’t always economical yet, but there’s strong confidence they’ll become cost-effective soon, across many more models.

The real bottleneck now is intelligence. Without it, you’re left with a very precise machine that isn’t autonomous and can’t be used in a general-purpose way. Much of the hardware people imagine for future humanoid robots already exists. What’s missing in a big way is real-world understanding and fast, adaptive intelligence.

We’re going deeper on this next. We’re partnering with the Shenzhen Robotics Association and attending their conference in April, and we’re putting together a robotics-focused trip from April 20th to 24th. Link in comments (and also pinned to my profile).

Humans learn by watching. Robots should too.

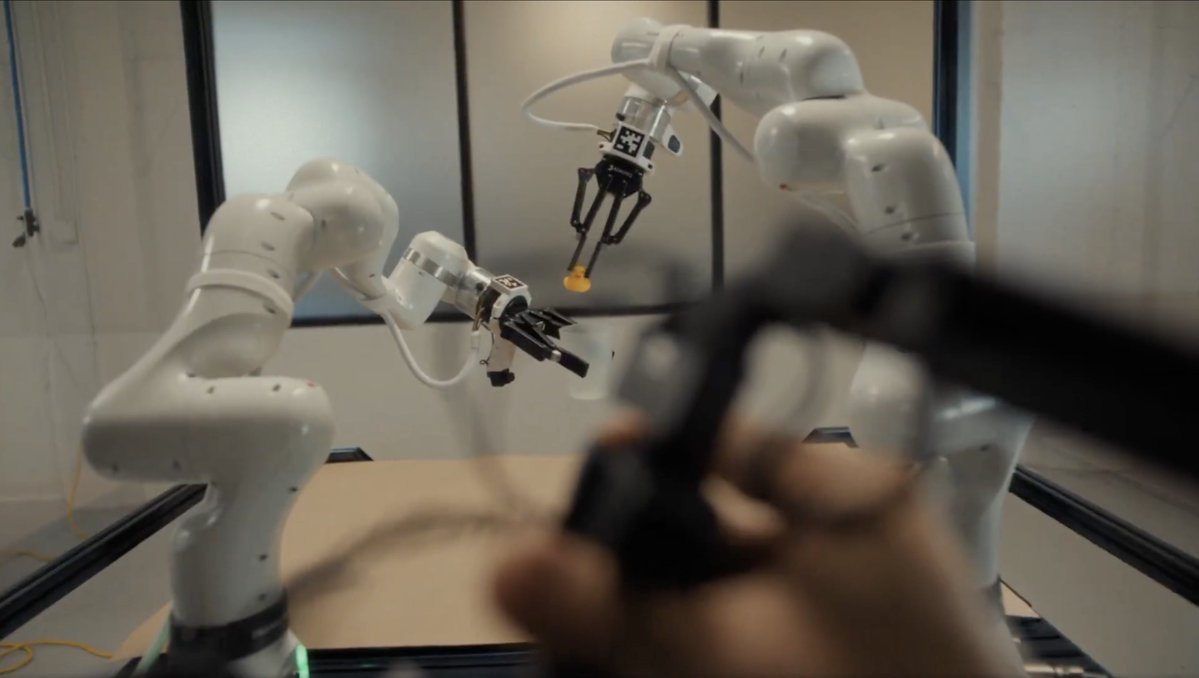

FSD is trained on billions of real-world miles, including power outages 📸 @edgecase411

Why does the same household task keep breaking robots? Because the world changes. At EgoScale, we collect thousands of unscripted egocentric household videos, capturing how the same task varies across objects, materials, geometries, and lighting conditions. With explicit task structure and subtask-level annotations, this data helps robots move beyond a single environment and generalize to the real world. Here is a simple example from the wild: wiping a table while holding another object, a natural bimanual coordination pattern captured by EgoScale. #VLA #Humanoid

This also shows up in the representations learned by the model. We plot the model’s representations of human and robot images. As pre-training is scaled up, the representations of humans and robots become more aligned: to a scaled-up model, human videos "look" like robot demos.