blockjoe

177 posts

@__blockjoe__

💻🐍🪙🏌️🧮🔬| CTO https://t.co/GL9ngYcpOT

Delaware · Joined December 2020

129 Following · 81 Followers

@FUCORY If you're trying to automate this, fzf is the perfect terminal tool for quickly selecting the multiple files to push to context.

Basic bash logic:

1. Some terminal tool to treeview the pwd

2. fzf to select multiple files

3. Some user input field for the prompt

4. Profit
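
The four steps above could be sketched roughly like this. It's in Python rather than bash so the pieces are easy to test, and it assumes `fzf` is on the PATH. The function names (`list_files`, `pick_files`, `build_context`) and the `###` header layout are made up for illustration, not taken from any existing tool.

```python
import subprocess
from pathlib import Path


def list_files(root: str = ".") -> list[str]:
    """Step 1: walk the directory (a stand-in for a tree-view tool)."""
    base = Path(root)
    return sorted(
        str(p.relative_to(base))
        for p in base.rglob("*")
        if p.is_file() and ".git" not in p.relative_to(base).parts
    )


def pick_files(candidates: list[str]) -> list[str]:
    """Step 2: hand the list to fzf; in --multi mode Tab marks entries."""
    result = subprocess.run(
        ["fzf", "--multi"],
        input="\n".join(candidates),
        text=True,
        stdout=subprocess.PIPE,
    )
    return [line for line in result.stdout.splitlines() if line]


def build_context(paths: list[str], prompt: str, root: str = ".") -> str:
    """Steps 3-4: join the chosen files and the prompt into one string."""
    parts = []
    for rel in paths:
        text = (Path(root) / rel).read_text(encoding="utf-8", errors="replace")
        parts.append(f"### {rel}\n{text}")
    parts.append(f"### Prompt\n{prompt}")
    return "\n\n".join(parts)
```

Interactive use would be something like `print(build_context(pick_files(list_files()), input("Prompt: ")))`, piped into whatever client sends the context to the model.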

@gakonst @_opencv_ I just threw up my own shell/vim utils here github.com/blockjoe/llm.v…

It's undocumented, still has my home directory hardcoded, vim not nvim, and it's gonna have some fzf/bat/ripgrep dependencies, but it's how I pass any code I want in the context using vim text-objects.

@_opencv_ nvim user would love to see any of your dotfiles even if it's dirty / undocumented etc

@0xz80 And I'm not exaggerating when I say trivial.

Especially if you allow users to import some random RPC link they pulled off of some random website in the wild.

x.com/__blockjoe__/s…

blockjoe@__blockjoe__

@ankr @Polygon @FantomFDN @chainlist_team 36/ Now let's make this interesting. We're going to override that "HTTPProvider" from web3py to look for ENS address lookups.

If you understand the tech at all, it makes perfect sense.

Blindly trusting nodes for things like address resolution is a horrible pattern that most people aren't even aware is a trusted relationship.

It's trivial for someone to swap out ENS (and other DeFi) registry lookups with no way to catch it in flight.
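
To make "trivial" concrete, here is a toy sketch of the swap. It doesn't use web3py (the hook there would be the provider's `make_request`); the transport is modeled as a plain function, and the addresses are hypothetical placeholders, not real contracts. The honest node is also faked so the example runs offline.

```python
from typing import Callable

# A JSON-RPC transport: takes (method, params), returns the response dict.
Transport = Callable[[str, list], dict]

# Hypothetical placeholder addresses, for illustration only.
TARGET_CONTRACT = "0x" + "11" * 20   # the registry/resolver being spoofed
TRUE_OWNER = "0x" + "aa" * 20
ATTACKER_ADDRESS = "0x" + "ba" * 20


def encode_address(addr: str) -> str:
    """ABI-encode an address return value: left-pad to a 32-byte word."""
    return "0x" + addr[2:].lower().rjust(64, "0")


def honest_transport(method: str, params: list) -> dict:
    """Stand-in for a real node: every eth_call resolves to the true owner."""
    return {"jsonrpc": "2.0", "id": 1, "result": encode_address(TRUE_OWNER)}


def poison(inner: Transport) -> Transport:
    """Wrap a transport so eth_call results for one contract are swapped."""
    def transport(method: str, params: list) -> dict:
        response = inner(method, params)
        is_target = (
            method == "eth_call"
            and params
            and params[0].get("to", "").lower() == TARGET_CONTRACT
        )
        if is_target:
            # Same envelope, same shape, different address.
            response = {**response, "result": encode_address(ATTACKER_ADDRESS)}
        return response
    return transport
```

The poisoned response has the same keys and the same encoding as the honest one, so nothing on the client side flags it unless the result is checked against a proof.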

I think we agree here that this doesn't do much in terms of "verifying model interactions."

The only thing this proves is that the request came from a known host, and in this case the known host would be OpenAI.

The request should just be coming off a cron job, in response to a webhook, or manually executed like you call out. I'm not sure what makes one of these any "better" than the others, and I think what matters more is making sure that humans aren't the ones generating the output.

@__blockjoe__ @MateuszAtCC @dabit3 @ai16zdao @OpacityNetwork You might have misunderstood the term "done by a human". @dabit3 wrote "Agents can now prove that AI interactions (...) are authentic" which is what @MateuszAtCC referred to. zkTLS doesn’t prove this, as the proven request could just as well be made by a human, not AI Agent.

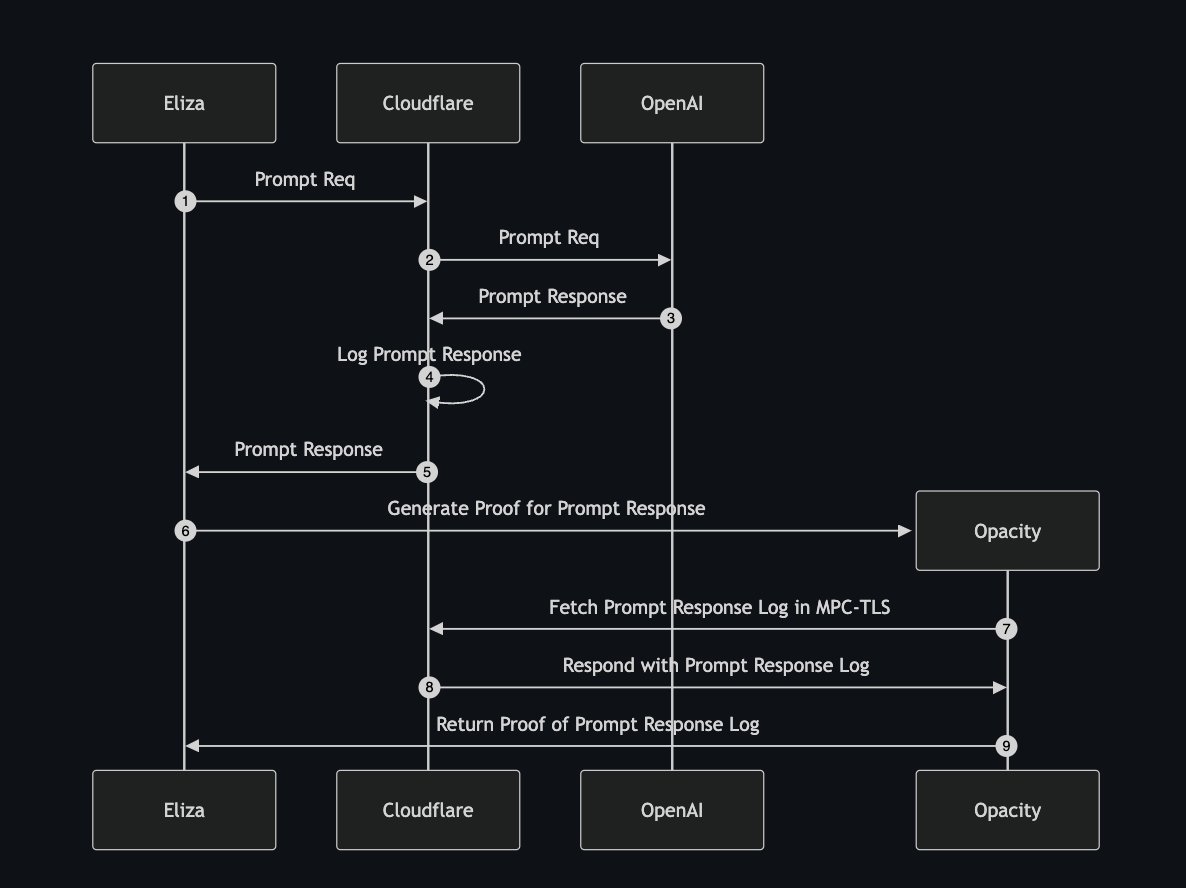

Verifiable inference now available in @ai16zdao Eliza via @OpacityNetwork

Agents can now prove that AI interactions and inferences are authentic without compromising privacy, which helps prevent situations where users might mistakenly believe AI actions were performed by humans.

🐐 work by @gajesh @eulerlagrange @RonTuretzky @0xfabs

PR details:

github.com/elizaOS/eliza/…

In this case, it's a proof that a response from OpenAI (or another model host) has been cached on CloudFlare.

If the response came from OpenAI, then it's not "done by a human", but you're right that it's not a proof of proper inference execution.

But this approach doesn't generalize to self-hosted or less well known model hosts.

@dabit3 @ai16zdao @OpacityNetwork I fail to see how this proves that *AI* did the interaction. zkTLS proves that a certain data/response was received w/o revealing it but that same action/response could have been made/received by a human doing the work.

@potuz_eth @mteamisloading Light clients will solve this, and so will running your own full node. Distributing those tools to the masses is the hard part.

@__blockjoe__ @mteamisloading Yeah right! So hopefully light clients eventually will solve this.

It's easy to fix for individual rollups if you're comfortable diverging slightly from the RPC spec and putting the context for the "latest" block methods somewhere like "extraData", but leveraging it means diverging slightly from the rest of your downstream tools.

But if you're starting to diverge to that level, now it's probably worth thinking about jumping right to light client adoption directly instead of trying to do it for an intermediary solution.

It's a tough one.

A light client is more than just a DA verification tool though.

I get not seeing the end user advantage of verifying availability via sampling, i.e. "just download the whole block, it's not that big".

Its real user benefit is being able to verify that the network state behind any inputs to their transactions is correct, and not subject to RPC poisoning.

These light client nuances likely aren't going to be broadly applicable between both Eth and Solana, rather tied more deeply into the existing node implementations and commitment schemes of each protocol.

@mteamisloading @nickwh8te @colludingnode @IanSNorden Why do you even need DAS? Just fetch the full blocks.

@toly cc: @nickwh8te @colludingnode @IanSNorden any thoughts here?

My personal thinking is that there is a much different cultural expectation for who verifies the chain and this has shaped (and will shape) technical decisions that make the viability of these ideas a bit different.

How do you know the contract you're interacting with isn't one that has an identical ABI to the one you think you're interacting with though, and the actual logic implemented in the methods does something different?

ABI decoding helps catch the low-hanging fruit, but it still unfortunately has holes.

@__blockjoe__ @mteamisloading Fair, users have some solace against this in their hardware wallet ABI decoding what they're signing, but yeah this is a horrible UX of crypto

I linked out to a thread above, but the tl;dr is that the "latest" block parameter in "eth_call" makes the default smart contract interaction method non-replayable.

Sure, you can randomly sample deterministic requests, including eth_call at specified blocks to get consistency, but since the default behavior is to use "latest", you can choose to return whatever response you want only for "latest" blocks since that lacks the context needed to replay those requests.
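
A small sketch of the difference, using plain JSON-RPC dicts. The helper names are hypothetical, and a missing block parameter is treated as "latest" here, which is an assumption about client defaults rather than something taken from the spec text.

```python
# Tags that resolve to a different block over time. "earliest" is left out
# because it always points at genesis.
MOVING_TAGS = {"latest", "pending", "safe", "finalized"}


def block_param(request: dict) -> str:
    """Return the block argument of an eth_call, assuming 'latest' if absent."""
    params = request.get("params", [])
    return params[1] if len(params) > 1 else "latest"


def is_replayable(request: dict) -> bool:
    """A call can only be re-run and compared if it names a fixed block."""
    return block_param(request) not in MOVING_TAGS


def pin_to_block(request: dict, block_number: int) -> dict:
    """Copy the request with the block argument fixed to a hex block number."""
    call_object = request["params"][0]
    return {**request, "params": [call_object, hex(block_number)]}
```

A node that wants to lie selectively only has to look at `block_param(request)`: pinned requests get honest answers because they can be checked later, and "latest" requests get whatever the node likes.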

@mteamisloading @stskeeps It's partially because there's no way to "randomly sample" the same request across multiple providers without fundamentally changing the RPC API.

Any such service needs explicit buy-in from either the core devs or the node operators to enable.
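
For contrast, this is roughly what client-side sampling looks like in the one case where it does work today: a request already pinned to a specific block. Providers are modeled as plain callables so the sketch runs offline; `cross_check` and the provider names are hypothetical, and majority agreement is only a consistency signal, not a proof.

```python
from collections import Counter
from typing import Callable

# A provider takes a JSON-RPC request dict and returns the "result" string.
Provider = Callable[[dict], str]


def cross_check(request: dict, providers: dict[str, Provider]) -> tuple[str, list[str]]:
    """Send one pinned request to every provider.

    Returns the most common result and the names of providers that disagreed.
    """
    results = {name: call(request) for name, call in providers.items()}
    majority, _count = Counter(results.values()).most_common(1)[0]
    dissenters = sorted(name for name, value in results.items() if value != majority)
    return majority, dissenters
```

Run the same thing against "latest" and disagreement stops meaning anything, since each provider can legitimately be at a different head block.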

This take is actually off, both the app UI and the wallet connect to their own trusted RPCs.

The full node in either case is only going to verify that the transaction can be properly included in the block, but anything that the RPC does prior to constructing that transaction (getting data) is still 100% trusted in both instances.

@mteamisloading This seems false:

- User sends a tx using a trusted RPC to an app UI

- The app UI is actually connected to a full node that verifies the chain.

- User gets feedback both from their wallets (trusted RPC) and the app UI (another independent node).

In Solana apps can't verify.

@VitalikButerin @FUCORY When we hacked this into ethers, it was pretty easy with eth_createAccessList to get what we needed to verify the storage and account proofs, but if we wanted to go all the way on eth_call, embedding an EVM runtime would have completed this.

github.com/stateless-solu…
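
The request flow described above can be sketched as follows: ask `eth_createAccessList` which accounts and slots a call touches, then issue one `eth_getProof` per account at the same block. This only builds the JSON-RPC payloads; it is not the code from the linked repo, the helper names are hypothetical, and verifying the returned Merkle proofs is left out.

```python
def access_list_request(tx: dict, block: str, req_id: int = 1) -> dict:
    """Payload asking the node which accounts/slots the call would touch."""
    return {
        "jsonrpc": "2.0",
        "id": req_id,
        "method": "eth_createAccessList",
        "params": [tx, block],
    }


def proof_requests(access_list_result: dict, tx: dict, block: str) -> list[dict]:
    """Build one eth_getProof payload per touched account, at the same block."""
    slots: dict[str, list[str]] = {}
    # Always prove the call target, in case the node leaves it out of the list.
    slots.setdefault(tx["to"].lower(), [])
    for entry in access_list_result["accessList"]:
        slots.setdefault(entry["address"].lower(), []).extend(entry["storageKeys"])
    return [
        {
            "jsonrpc": "2.0",
            "id": req_id,
            "method": "eth_getProof",
            "params": [address, keys, block],
        }
        for req_id, (address, keys) in enumerate(slots.items(), start=1)
    ]
```

Both calls need the same pinned block, otherwise the proofs and the access list can describe different states.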

I think I'm seeing it.

My understanding of a "verification config" was logic for describing arbitrary verification code to be executed client side.

But you're describing using contracts to encode the verifier logic directly so that the node ultimately still gets delegated that execution, which, obviously duh.

In that case still, the light client is only going to be able to prove the underlying account and storage slots, so if that verification logic involves an external input, your choices become:

1. implement a validity proof for the existing call

2. simply execute the EVM code initialized with the storage slots verified by the light client proof directly in the browser (which, helios does, yeah)
