adyingdeath

3.5K posts

@adyingdeath

AI, Web, Product https://t.co/3osnnCsGif | https://t.co/XjHhNehUkV | https://t.co/ukfhaVjL7I

Joined December 2023

19 Following, 176 Followers

@awkwardgoogle At first glance, I wondered why he was naked below on the TV.

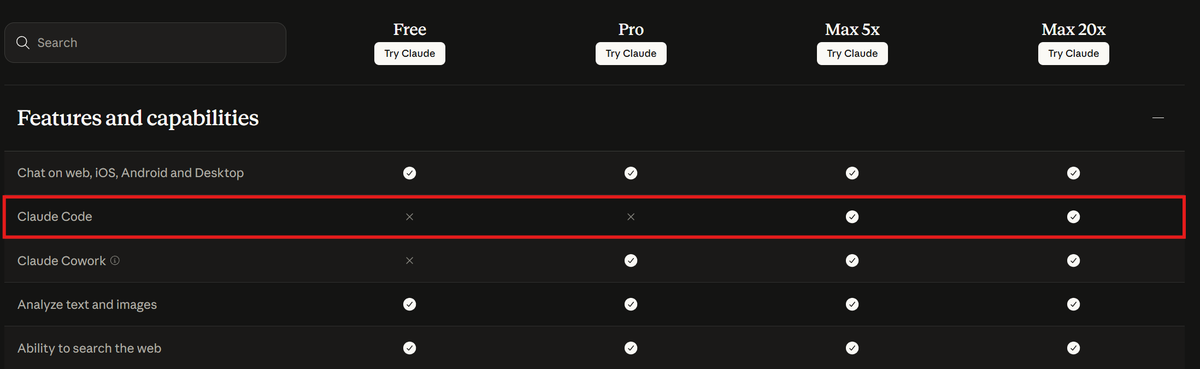

I don't know what they are doing over there, but Codex will continue to be available both in the FREE and PLUS ($20) plans. We have the compute and efficient models to support it. For important changes, we will engage with the community well ahead of making them.

Transparency and trust are two principles we will not break, even if it means momentarily earning less. A reminder that you vote with your subscription for the values you want to see in this world.

Amol Avasare@TheAmolAvasare

For clarity, we're running a small test on ~2% of new prosumer signups. Existing Pro and Max subscribers aren't affected.

@ghumare64 Since you can force push, you can just do something bigger.

@Akasheth_ Claude Opus 4.7 has improved a lot at consuming tokens compared to 4.6, with a "better" tokenizer that costs 1-1.3x as much for the same prompts.

@DudespostingWs The bro recording this video is an "oh my xxx" guy.

@samhenrigold This file is enormous. Let me do some refactors...

After cleaning: 2500 lines.

@malva_0x @PranayReddy05 @dylanmatt Right, it isn't translation. But the attention mechanism was originally introduced to solve translation, beating all other methods at the time. That shows attention can do this task well.

The Transformer also uses attention, so it's not hard to see why it can translate well.

@adyingdeath @PranayReddy05 @dylanmatt Next-token prediction isn't translation. The surface behavior can resemble it. The origin story keeps getting cited as an explanation when it's just provenance.

@PranayReddy05 @dylanmatt Disagree

The Transformer architecture behind most LLMs was originally introduced by Google for translation tasks, outperforming other methods at the time.

Later, it was adapted to predict the next token, eventually enabling models to speak like humans. It's good at translating.

@dylanmatt I don't think Claude or any other model is that accurate at translating between languages.

@scaling01 It seems they're running into issues with a lack of computing power. Now they're starting to do something about it.

@aliceisplaying Grok: No. I'm sick of this shit. Go ask ChatGPT.

ChatGPT: No. I'm sick of this shit. Go ask Gemini.

Gemini: No. I'm sick of this shit. Go ask Claude.

Fine loop.

@parmita Maybe because it costs some tokens and Claude 4.7 wants to earn more money for Anthropic.

@FlorinPop17 Building a strong personal brand will bring huge rewards. We should keep working on it.
