Xilun Chen

732 posts

@ccsasuke

Research Scientist @ Meta FAIR

🚀 We're excited to release the 1T Active Reading-augmented Wikipedia dataset and open-source the WikiExpert model for the community:
Paper: arxiv.org/abs/2508.09494
Dataset: huggingface.co/datasets/faceb…
Model: huggingface.co/facebook/meta-…
Thanks to my great collaborators – @vinceberges, @ccsasuke, @scottyih, @gargighosh, @barlas_berkeley! 🧵[n/n]

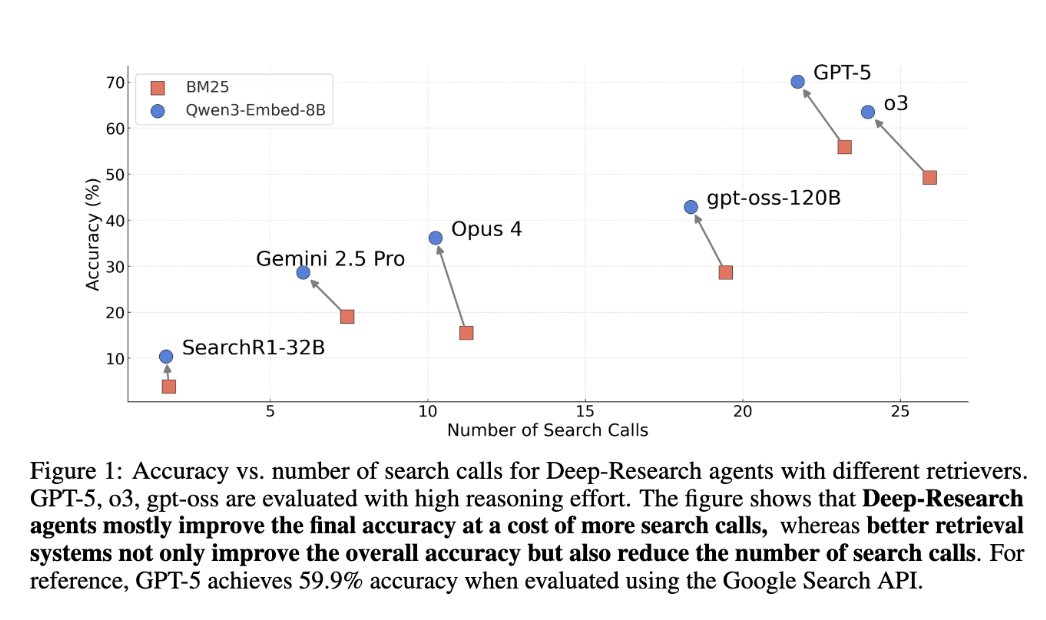

...is today a good day for new paper posts?
🤖Learning to Reason for Factuality 🤖
📝: arxiv.org/abs/2508.05618
- New reward func for GRPO training of long CoTs for *factuality*
- Design stops reward hacking by favoring precision, detail AND quality
- Improves base model across all axes
🧵1/3

Introducing DRAMA🎭: Diverse Augmentation from Large Language Models to Smaller Dense Retrievers. We propose to train a smaller dense retriever using a pruned LLM as the backbone, fine-tuned with diverse LLM data augmentations. With single-stage training, DRAMA achieves strong performance on both English and multilingual retrieval tasks—enabling smaller retrievers to benefit from ongoing LLM advancements.

Meta presents Improving Factuality with Explicit Working Memory
Presents EWE, a novel approach that enhances factuality in long-form text generation by integrating a working memory that receives real-time feedback from external resources.
EWE outperforms strong baselines on four fact-seeking long-form generation datasets, increasing the factuality metric, VeriScore, by 2 to 10 points absolute without sacrificing the helpfulness of the responses.
arxiv.org/abs/2412.18069

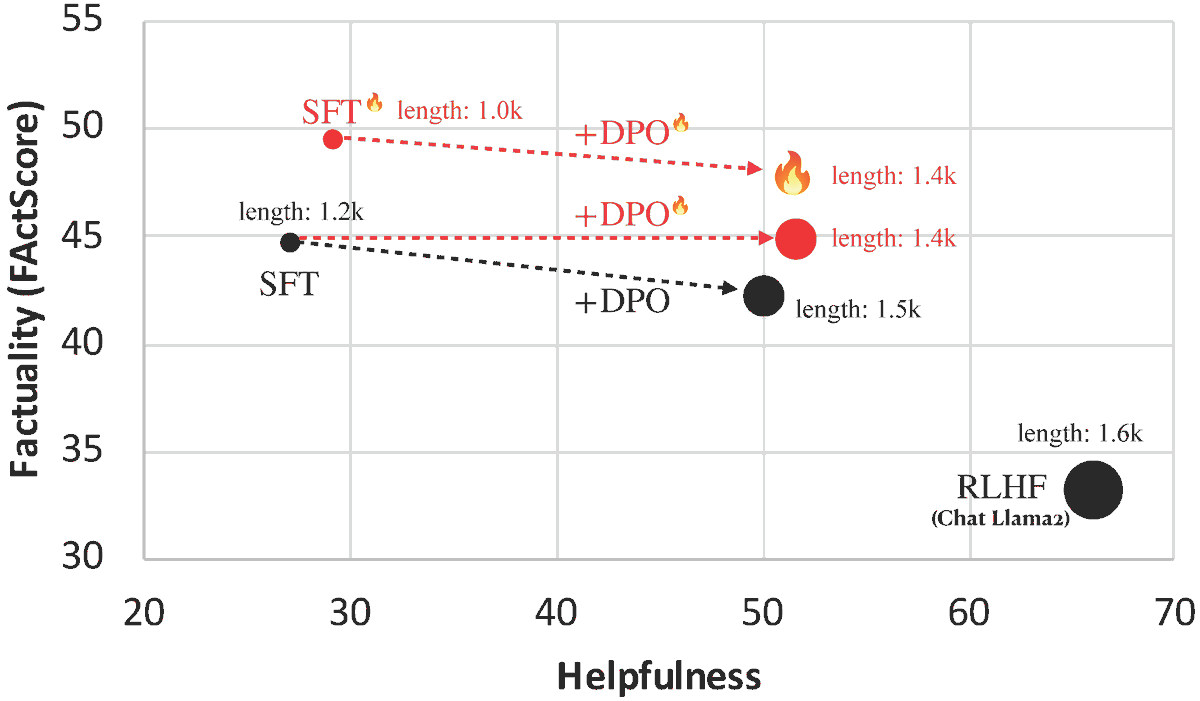

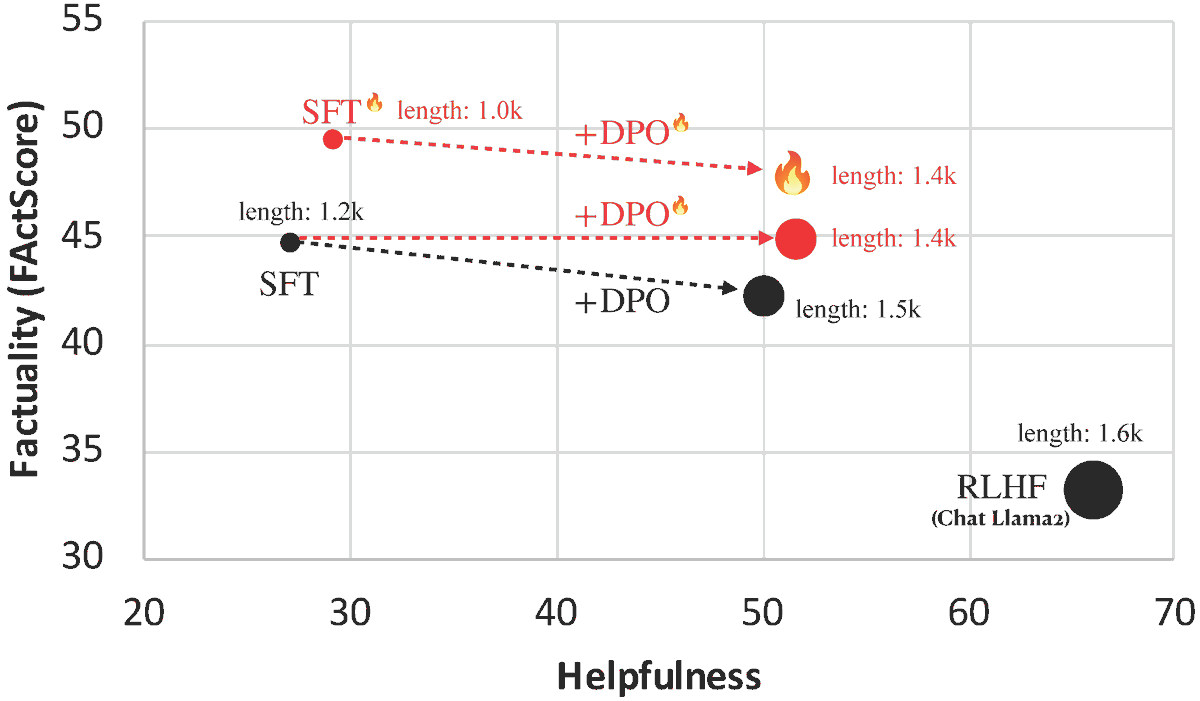

Introducing FLAME🔥: Factuality-Aware Alignment for LLMs
We found that the standard alignment process **encourages** hallucination. We hence propose factuality-aware alignment while maintaining the LLM's general instruction-following capability.
arxiv.org/abs/2405.01525

Curious about enhancing factuality and attribution in LLM generation? Check out our paper: arxiv.org/abs/2405.19325
Introducing NEST🪺: Nearest Neighbor Speculative Decoding for LLM Generation and Attribution, a training-free method that adds real-world texts into LLM generation.

Introducing *Transfusion* - a unified approach for training models that can generate both text and images.
arxiv.org/pdf/2408.11039
Transfusion combines language modeling (next token prediction) with diffusion to train a single transformer over mixed-modality sequences. This allows us to leverage the strengths of both approaches in one model.
1/5