Ehsan Kamalloo

@ehsk0

Research Scientist @ServiceNowRSRCH

ServiceNow is easily the Meituan of the West. cooking far harder than any reasonable person would expect them to

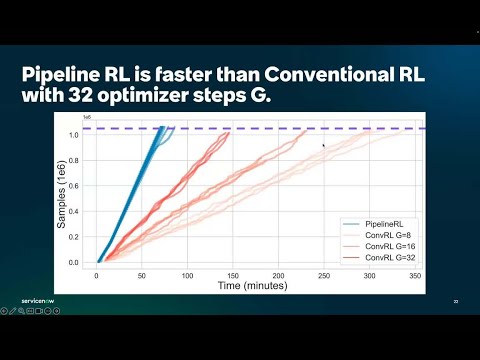

Don't sleep on PipelineRL -- this is one of the biggest jumps in compute efficiency of RL setups that we found in the ScaleRL paper (also validated by Magistral & others before)!

What's the problem PipelineRL solves? In RL for LLMs, we need to send weight updates from the trainer to the generators (so they generate data from the latest policy being trained).

(Conventional PPO-off-policy) A naive approach would be to "start generators on a batch, wait for all sequences to complete, update the model weights for both trainers and generators, and repeat. Unfortunately, this approach leads to idle generators and low pipeline efficiency due to heterogeneous completion times."

(PipelineRL) Instead, we simply let the generators keep generating tokens whenever we need to do a weight update, without discarding or finishing the ongoing generations -- an "in-flight" weight update. As a result, the KV caches for these generations are stale, since they were computed by the LLM with one or more earlier copies of the weights, but this is ok (see below).
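To make the contrast concrete, here is a toy simulation of the two schedules. It is only a sketch under simplifying assumptions: one token per slot per step, weight updates that cost no time, and a fixed update interval. The names `Sequence`, `synchronous_utilization`, and `pipelined_generation` are made up for illustration and are not taken from PipelineRL or ScaleRL. The KV cache itself is not modeled; the code only records which weight version produced each token.

```python
from dataclasses import dataclass, field


@dataclass
class Sequence:
    # Hypothetical container: target length plus the weight version behind each token.
    target_len: int
    token_versions: list = field(default_factory=list)


def synchronous_utilization(lengths, num_slots):
    """Conventional loop: each batch waits for its slowest sequence before updating."""
    busy = total = 0
    for start in range(0, len(lengths), num_slots):
        batch = lengths[start:start + num_slots]
        busy += sum(batch)                # slot-steps spent generating tokens
        total += num_slots * max(batch)   # slot-steps elapsed, including idle waiting
    return busy / total


def pipelined_generation(lengths, num_slots, update_every):
    """In-flight updates: slots never pause; the version bumps every `update_every` steps."""
    pending = [Sequence(n) for n in reversed(lengths)]  # pop() yields the original order
    slots = [pending.pop() for _ in range(min(num_slots, len(pending)))]
    finished, version, step = [], 0, 0
    while slots:
        step += 1
        next_slots = []
        for seq in slots:
            seq.token_versions.append(version)  # token sampled with the current weights
            if len(seq.token_versions) >= seq.target_len:
                finished.append(seq)
                if pending:
                    next_slots.append(pending.pop())  # refill the slot immediately
            else:
                next_slots.append(seq)
        slots = next_slots
        if step % update_every == 0:
            version += 1  # in-flight update: ongoing sequences simply keep going
    return finished


if __name__ == "__main__":
    lengths = [2, 8, 3, 5]  # assumed positive sequence lengths, in tokens
    print("synchronous utilization:", synchronous_utilization(lengths, num_slots=2))
    for seq in pipelined_generation(lengths, num_slots=2, update_every=3):
        print(seq.target_len, seq.token_versions)
```

In the synchronous schedule, slots that finish early sit idle until the longest sequence in the batch completes. In the pipelined schedule every slot stays busy, at the cost that a single long sequence can contain tokens sampled under several weight versions, which is the staleness the post refers to.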