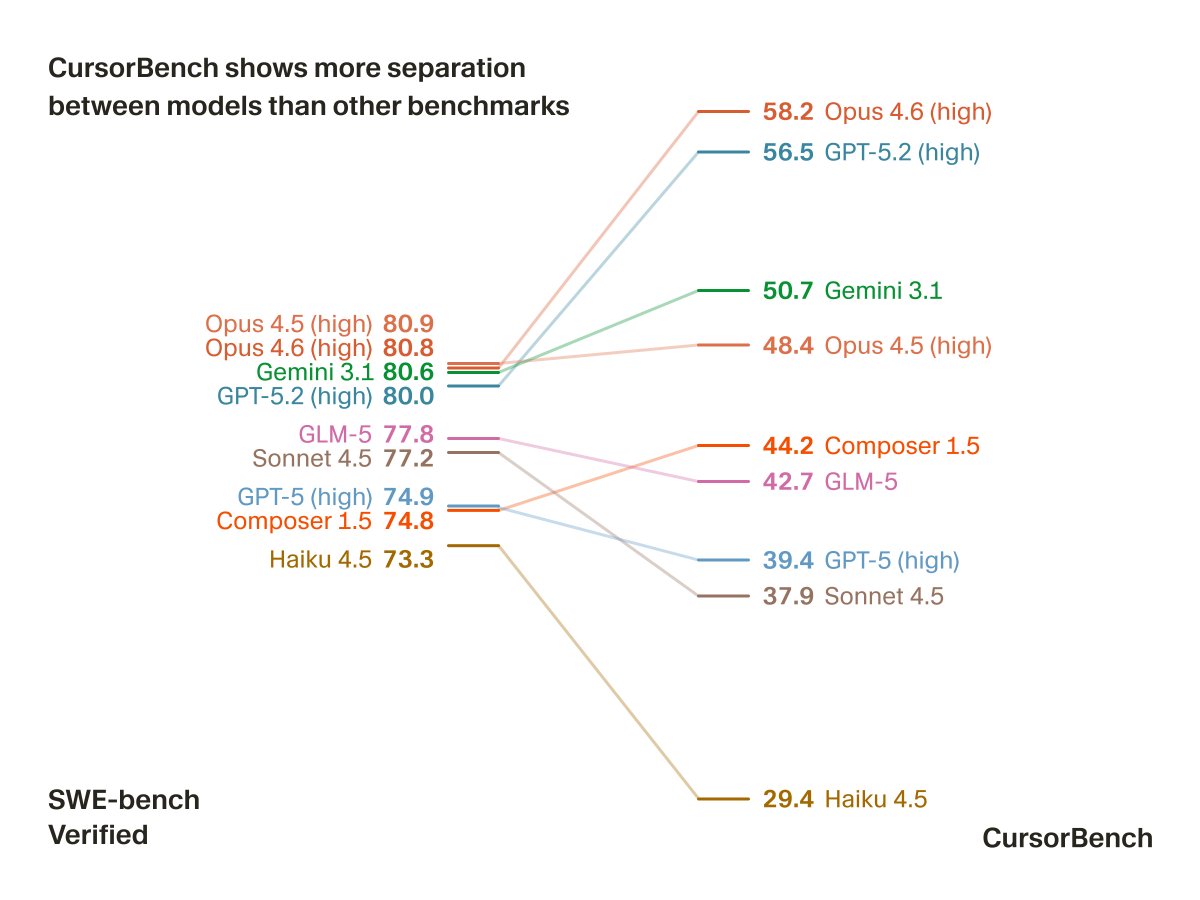

Introducing /orchestrate, a skill that recursively spawns agents to tackle your most ambitious tasks with the Cursor SDK. We’ve used it to:

- Autoresearch our internal skills, cutting token use by 20% while improving evals
- Cut cold start times on our internal backend by 80%