📣 Hivebrite acquires @orbiit_ai, a pioneering AI-powered matching company, to take community engagement to the next level. Welcome aboard, Orbiit team! 🙌 @BilyanaFreye @luckylwk ➡️ Discover more: lnkd.in/ejcZPCNe

Luuk Derksen

1.9K posts

@luckylwk

Co-Founder of @orbiit_ai (acq. by @hivebrite). Building applied AI.

Issue tracking is dead. We are building what comes next. linear.app/next

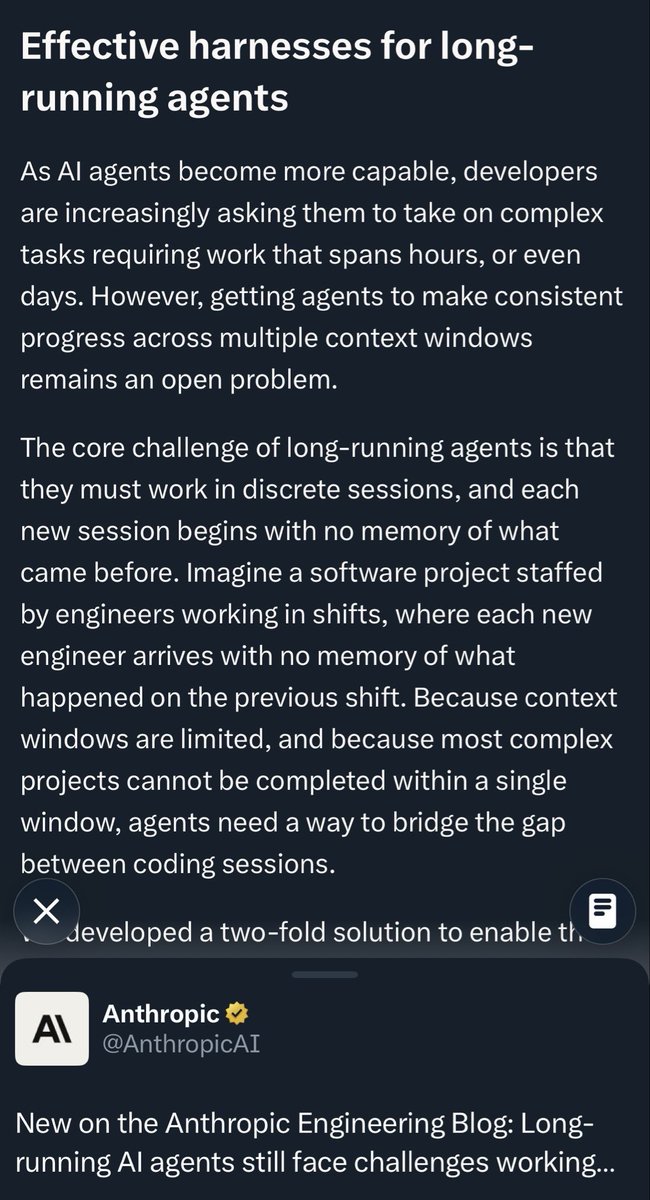

During the iteration process, we also realized that the model's ability to recursively evolve its harness is equally critical. Our internal harness autonomously collects feedback, builds evaluation sets for internal tasks, and based on this continuously iterates on its own architecture, skills/MCP implementation, and memory mechanisms to complete tasks better and more efficiently.

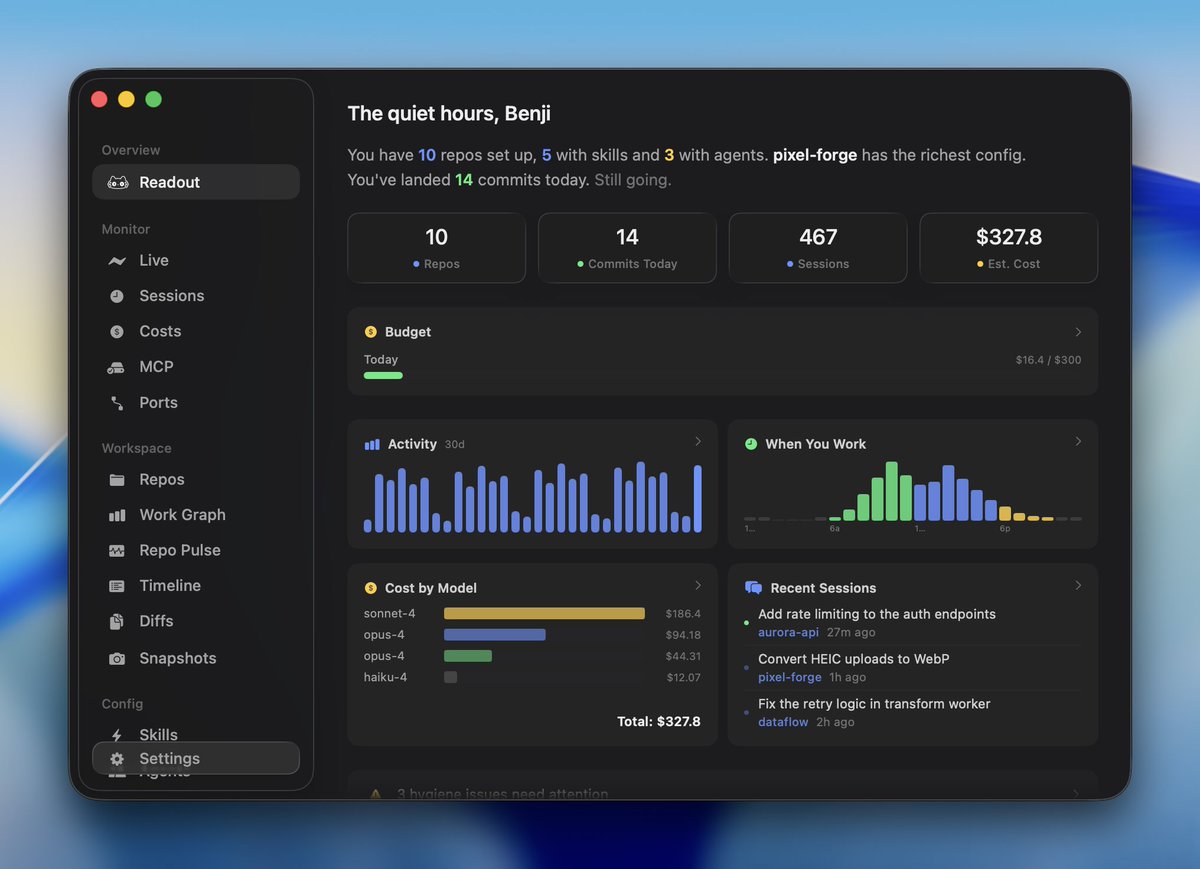

Readout is a fully native macOS app I’ve been building for myself. It provides a real-time overview of your dev environment and Claude Code config. All local, no account required. It's still very much a beta, but now available to try: readout.org

QMD now has its own natively trained query extender model: github.com/tobi/qmd/tree/… It seems to do much better at query expansion in my tests. Try it out.

New on the Anthropic Engineering Blog: Long-running AI agents still face challenges working across many context windows. We looked to human engineers for inspiration in creating a more effective agent harness. anthropic.com/engineering/ef…

The story gets stranger... Apparently I was never able to use the 🇪🇺 EU's GPUs in the first place, because I wasn't on their pre-approved organization list of "Horizon 2020". So how can you join the Horizon 2020 list as an organization? Well, you can't. It was made in 2014 and closed in 2020!