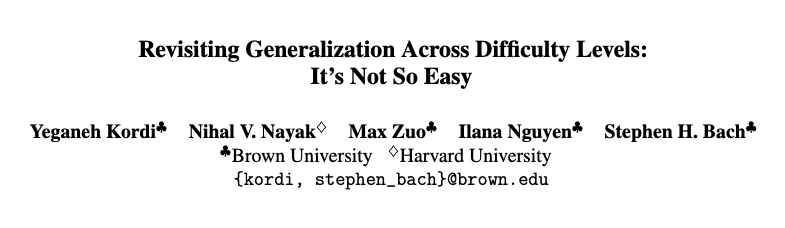

Stephen Bach

@stevebach

Asst. prof. @BrownCSDept. Working on improving how humans teach computers. Weak supervision, zero-shot learning, few-shot learning, and high-level knowledge.

@BrownCSDept is happy to announce that with the anticipated approval of @BrownUniversity's Corporation and effective as of July 1, 2026, @stevebach has been promoted to Associate Professor with tenure. Learn more at Brown CS News: cs.brown.edu/news/2026/05/2…

🚀 Muse Spark Safety & Preparedness Report for Meta AI is out.

We start with our pre-deployment assessment under Meta's Advanced AI Scaling Framework, covering chemical and biological, cybersecurity, and loss of control risks. Our assessment flagged potentially elevated chem/bio risk, so we implemented safeguards and validated mitigations before deployment, bringing residual risk to within acceptable levels.

Beyond the Framework, we also share findings and early explorations of model behavior (honesty, intent understanding, etc.), jailbreak robustness, eval awareness, and more.

We're sharing this report to give a closer look at how we evaluate advanced AI safety. Always more work to do, and we welcome feedback from the community. ai.meta.com/static-resourc…