Greg Wedow

35 posts

It seems unwise to tie your identity to the assumption that a technology won’t improve.

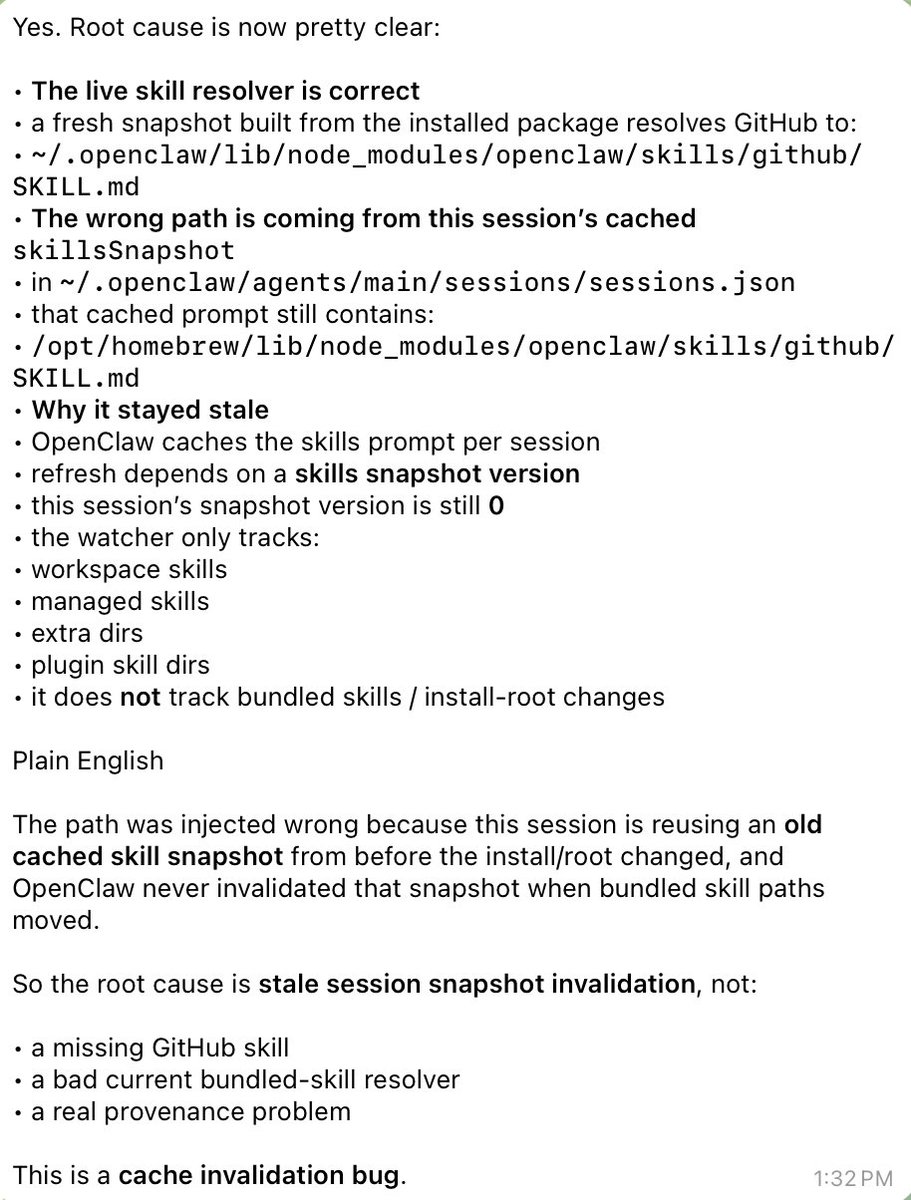

layers of bugs... this shouldn't even have happened to me because I started a new session yesterday. But the new session didn't stick, because later in the day I changed a configuration to put my TUI and Telegram on the same session. Somehow they both unified onto the old buggy session from before I ran /new. good god

software is still really bad

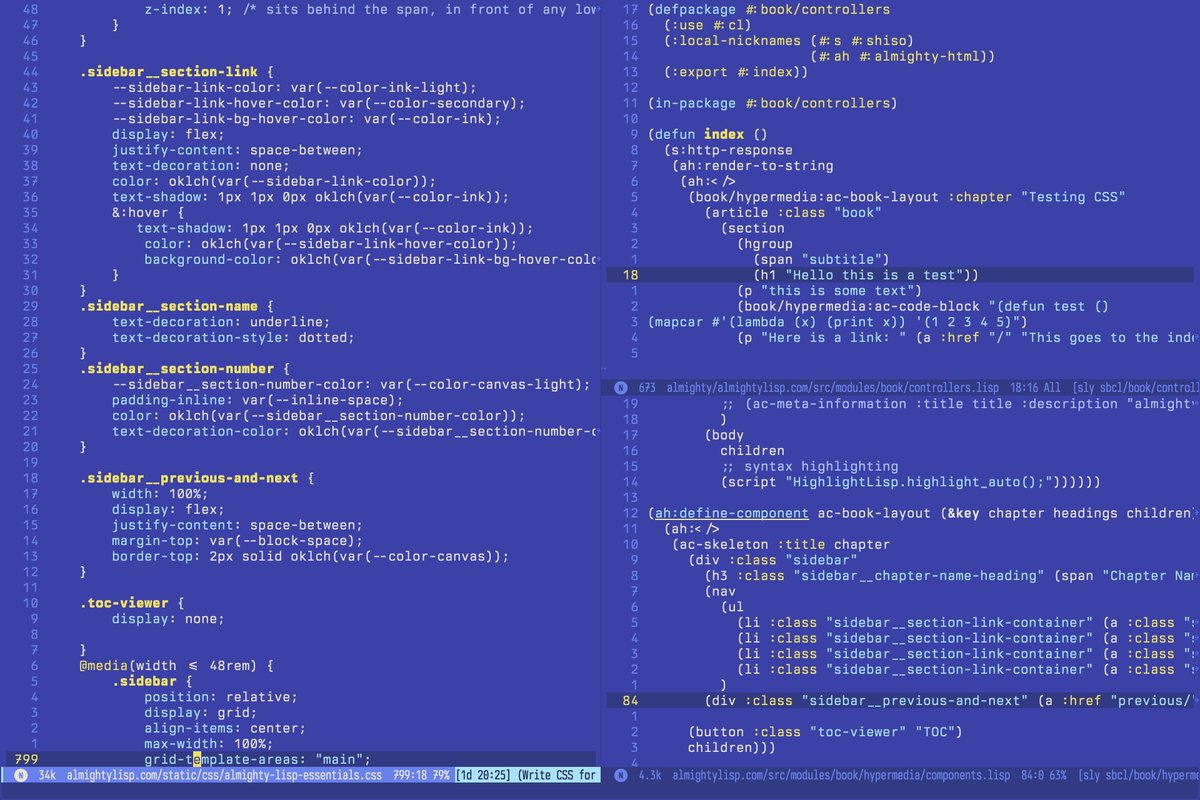

Not to brag, but I created this piece with my hands.

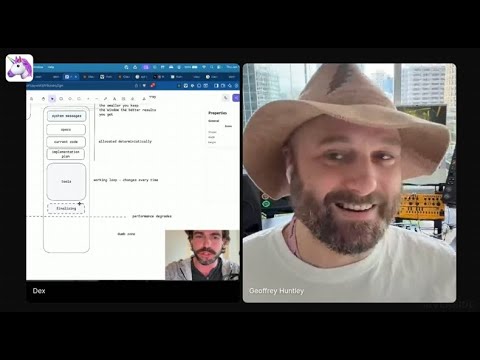

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)
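The keep-it-only-if-validation-loss-drops loop described above can be sketched in a few lines of Python. This is a toy illustration, not code from the repo: `toy_val_loss`, `propose`, and `research_loop` are hypothetical names, the "training run" is a cheap quadratic instead of a 5-minute GPU job, and the "agent" is a random perturbation instead of an LLM editing a script.

```python
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def toy_val_loss(cfg: Config) -> float:
    """Hypothetical stand-in for one fixed-budget training run.

    Returns a fake validation loss that is lowest at lr=0.3, wd=0.1.
    """
    return (cfg["lr"] - 0.3) ** 2 + (cfg["wd"] - 0.1) ** 2


def propose(cfg: Config, rng: random.Random) -> Config:
    """Hypothetical stand-in for the agent editing the training script."""
    key = rng.choice(sorted(cfg))
    new_cfg = dict(cfg)
    new_cfg[key] = cfg[key] + rng.gauss(0.0, 0.05)
    return new_cfg


def research_loop(
    start: Config,
    evaluate: Callable[[Config], float],
    n_runs: int,
    seed: int = 0,
) -> Tuple[Config, List[float]]:
    """Greedy loop: try a change, keep it only if validation loss improves."""
    rng = random.Random(seed)
    best, best_loss = start, evaluate(start)
    history = [best_loss]  # plays the role of the accumulated commits
    for _ in range(n_runs):
        candidate = propose(best, rng)
        loss = evaluate(candidate)
        if loss < best_loss:  # "commit" only strict improvements
            best, best_loss = candidate, loss
            history.append(loss)
    return best, history


if __name__ == "__main__":
    best, history = research_loop({"lr": 0.0, "wd": 0.0}, toy_val_loss, n_runs=200)
    print(f"{len(history) - 1} accepted changes, final loss {history[-1]:.5f}")
```

Each entry in `history` corresponds to one kept change, so the list is strictly decreasing by construction; rejected candidates are simply dropped, which is the toy analogue of not committing a run that failed to beat the current best.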

This is why I’m unimpressed by Erlang/Elixir: every major language runtime has VERY high-quality M:N work-stealing “thread” schedulers with good APIs (structured concurrency), and the “isolated processes” and “RPC” got pushed up to an orchestration layer (DC/OS, Nomad, k8s…)

Yes. There’s a reason you so rarely see the word “actor” in the Erlang/Elixir communities. The deeper, more general abstraction is the BEAM’s preemption and cooperative scheduler. Then you layer on processes with isolated memory. *Then* inter-process communication.
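A toy Python sketch of that layering, under loud assumptions: it is not how the BEAM is implemented, every name in it (`Scheduler`, `spawn`, `send`, `worker`, `collector`, `demo`) is made up for illustration, and the generators here give up control voluntarily at each `yield`, whereas the BEAM preempts a process once it has used up its reduction budget. Memory isolation is also only by convention here: each process reads nothing but its own mailbox.

```python
from collections import deque


class Scheduler:
    """Layer 1: a round-robin run queue. Layers 2 and 3 sit on top of it."""

    def __init__(self):
        self.run_queue = deque()  # pids that are ready to run
        self.procs = {}           # pid -> generator (the process body)
        self.mailboxes = {}       # layer 2: one private mailbox per process
        self.next_pid = 0

    def spawn(self, fn, *args):
        pid = self.next_pid
        self.next_pid += 1
        self.mailboxes[pid] = deque()
        self.procs[pid] = fn(self, pid, *args)
        self.run_queue.append(pid)
        return pid

    def send(self, pid, msg):
        # Layer 3: messages are the only way one process affects another.
        if pid in self.mailboxes:
            self.mailboxes[pid].append(msg)

    def run(self):
        while self.run_queue:
            pid = self.run_queue.popleft()
            try:
                next(self.procs[pid])       # run until the next yield
                self.run_queue.append(pid)  # then go to the back of the queue
            except StopIteration:
                del self.procs[pid]         # process finished
                del self.mailboxes[pid]


def worker(sched, pid, target, n):
    """Sends n messages to target, yielding after each one."""
    for i in range(n):
        sched.send(target, (pid, i))
        yield


def collector(sched, pid, expected, out):
    """Takes at most one message per turn until it has seen `expected`."""
    received = 0
    while received < expected:
        mailbox = sched.mailboxes[pid]
        if mailbox:
            out.append(mailbox.popleft())
            received += 1
        yield


def demo():
    sched = Scheduler()
    out = []
    sink = sched.spawn(collector, 4, out)
    sched.spawn(worker, sink, 2)
    sched.spawn(worker, sink, 2)
    sched.run()
    return out


if __name__ == "__main__":
    print(demo())  # [(1, 0), (2, 0), (1, 1), (2, 1)]
```

The scheduler knows nothing about messages, and the workers know nothing about each other: that separation is the point of the sketch, with "actor"-style behavior falling out of the three layers rather than being the primitive.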

i mean this literally. given an infinite universe where self-replicating (sustaining) is possible; after enough time, it is *inevitable*, and once created, entropy will destroy all else: the universe becomes more and more selective for the self-replicating