Adam Abdalla

50 posts

@yabadba

ece @uoft and ml researcher @MIT | i like making cool stuff

update:
- reneged on my SF offer
- declined my NYC offer
- joining @boardyai as an MTS in Toronto

Folks @AIatMeta just released TRIBE v2, a model that predicts fMRI brain activity from video. We used it to build Cortex, so you can test ads on a "digital human" and see where attention drops before you spend a dime, validating before launch. cortex.buzz

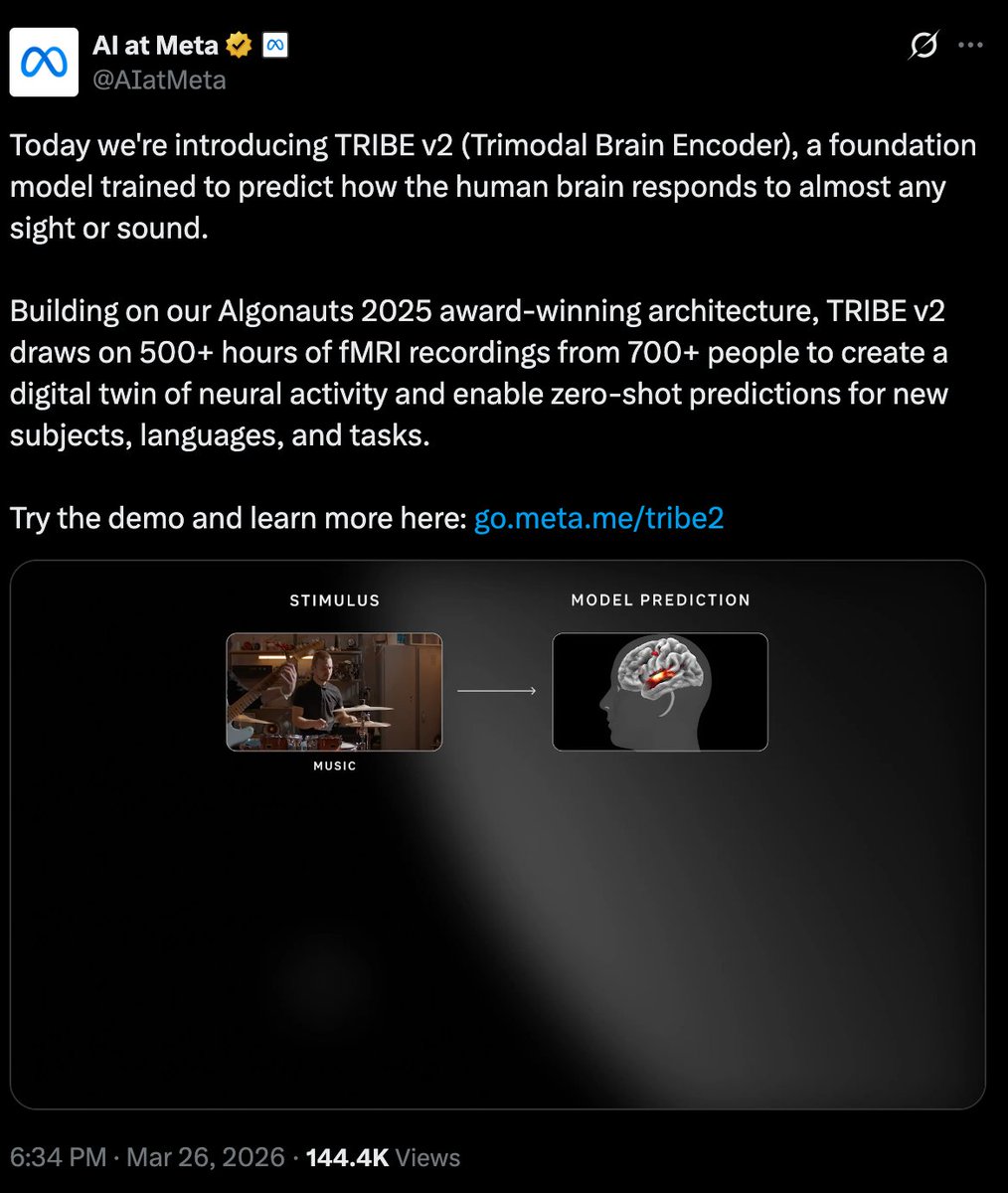

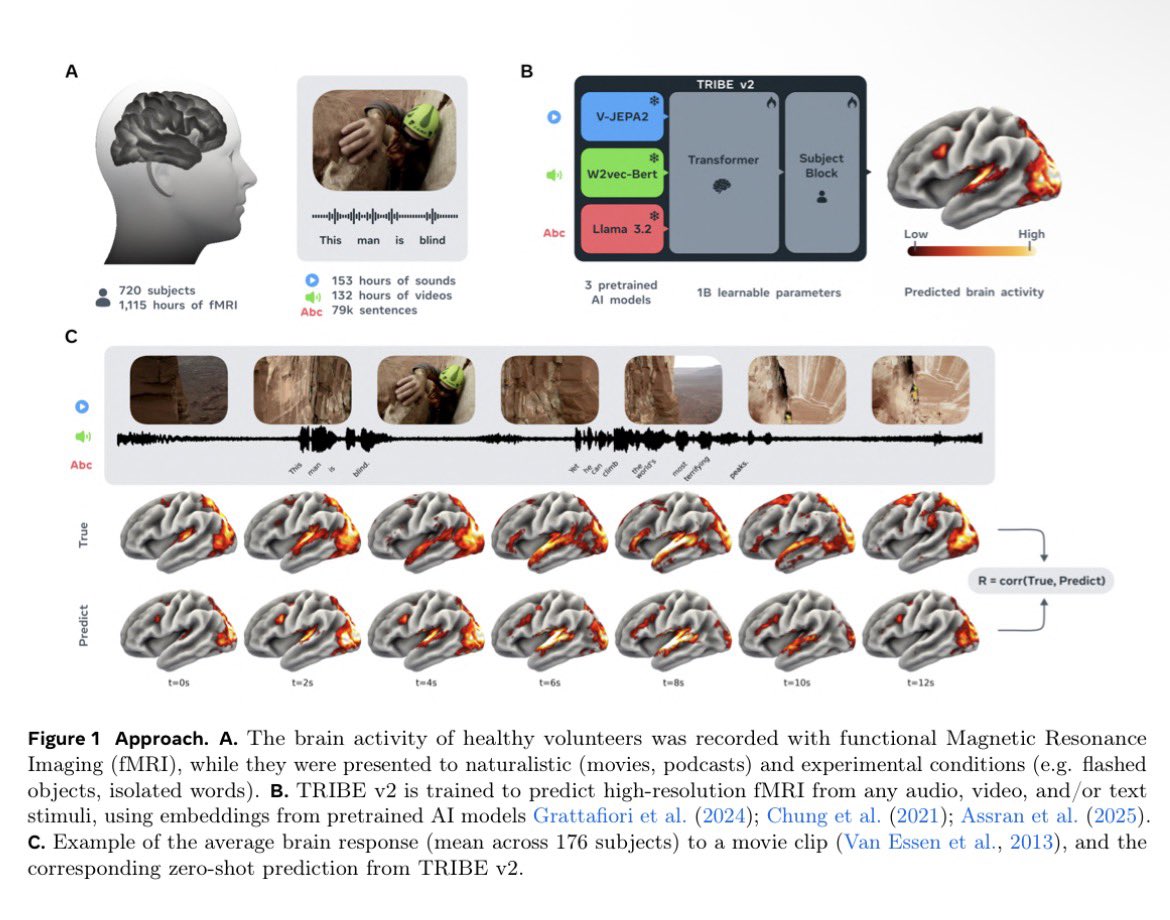

Today we're introducing TRIBE v2 (Trimodal Brain Encoder), a foundation model trained to predict how the human brain responds to almost any sight or sound. Building on our Algonauts 2025 award-winning architecture, TRIBE v2 draws on 500+ hours of fMRI recordings from 700+ people to create a digital twin of neural activity and enable zero-shot predictions for new subjects, languages, and tasks. Try the demo and learn more here: go.meta.me/tribe2
