Tom

86 posts

@tomcrojo

data+ai engineer - I make saas and data pipelines

madrid Joined February 2021

44 Following 5 Followers

@justalexoki A fitness coach that synchronizes every health input. It breaks 50% of the time ✌🏻

English

GENUINELY what are you even doing with your openclaws???? i have legitimately not seen a single good use case

Peter Yang@petergyang

I know OpenClaw isn't part of OpenAI but this feels like a mini-crisis for OpenAI if the GPT integration doesn't improve soon. The bar is GPT needs to be just as good if not better for OpenClaw as Opus.

English

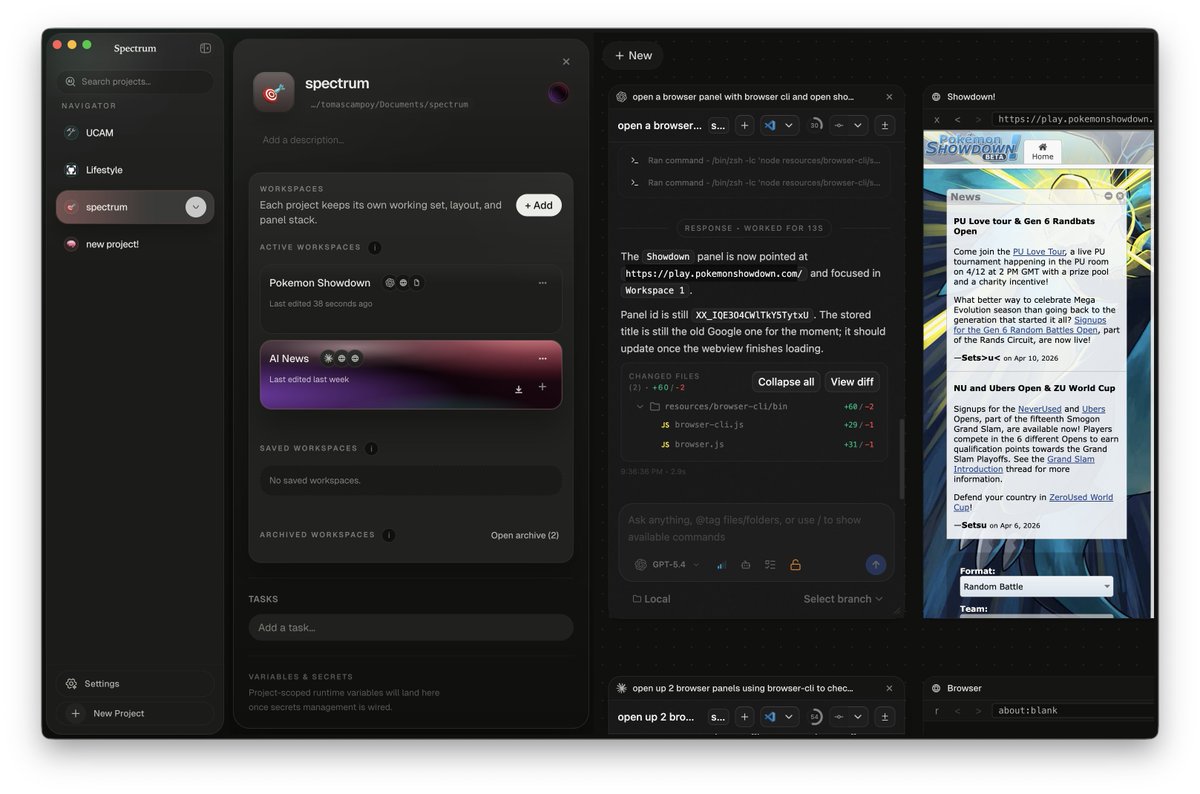

@Kraggich really good! i made github.com/tomcrojo-labs/… and it's a somewhat similar idea.

one thing i did that could be interesting was having a "project page" where you can see all the different workspaces, tasks and other project info in one place.

English

Switching between projects in a terminal IDE is pain.

Cmd+Tab between 5 windows, lose context, forget where you were.

So I stole Discord's playbook — workspace rail on the left.

Each project = its own canvas with agents, terminals, editors.

Click an icon > instant switch. Everything preserved.

Even auto-detects your project's favicon as the icon.

English

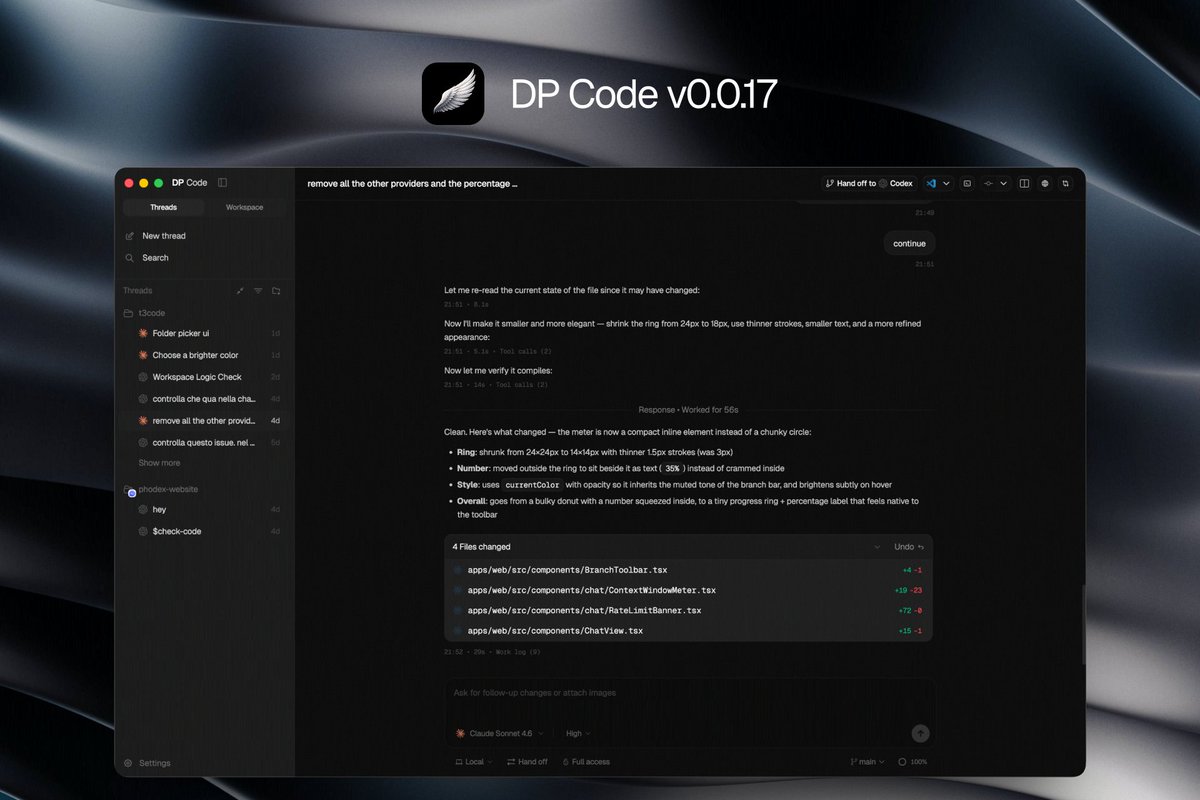

DP Code v0.0.17 just shipped! 🚀

→ smoother scrolling in chat

→ cleaner Plans step-by-step UI

→ custom UI font, code font, and chat text size

→ tighter message spacing

→ cleaner tool/work log summaries

→ better composer image attachments

→ improved terminal link detection

→ terminal close confirmation prompts

→ less clutter in the sidebar

→ safer destructive actions

→ lots of polish across the whole chat experience

Enjoy!

@theo @jullerino great work as always

English

@samoyed_online @xeophon They say it also improves accuracy for some reason

English

@xeophon Asking the persona-adopting model to adopt a stupid persona for efficiency 🤔

English

@haveanicedavid @superdoteng @sawyerhood Sure! 100% agree. Having a unified IDE in the age of AI agents is a huge task. But probably, working little by little, we'll figure out how that looks!

As I said before: great job!

I'll keep an eye on superconductor

English

Yeah, the one I added was WebKit for perf reasons, but I almost always use Chromium for actual work, which is part of why I removed it. I personally just use the Chrome MCPs, or whip open Cursor on occasion.

I do have some features I’d love to add that require a browser, but they’re lower on the priority list right now.

English

Meet Superconductor.

A native macOS app for agentic engineering, built to go fast.

No Electron. No Tauri. 100% Rust.

Manage all of your coding agents without friction.

We're in Alpha: super.engineering

English

@haveanicedavid @superdoteng I've seen stuff like @sawyerhood's dev-browser cli that could be useful, but if you include a chromium browser as a panel or tab or something like that, it would add too much bloat to the current app (and it would be difficult to keep the parallel work working as expected)

English

@tomcrojo @superdoteng Thank you! I had a very basic one at one point but realized I would never personally use it, so I didn’t include it in this release. Would you use something basic?

I’d like to integrate into the mcp eventually but that’s a lot more work

English

@haveanicedavid @superdoteng Hey David! Great work! Any chance to integrate a browser panel as well per worktree to visualize design changes, ux, etc?

English

@SaintAwoleesu Exodus 20:4–5:

“Thou shalt not make unto thee any graven image, or any likeness of any thing that is in heaven above, or that is in the earth beneath, or that is in the water under the earth:”

And Jesus didn't have long hair

English

We should stop posting images like this as Christians.

Life Truth Way@Life_truthway

If you believe one day we will stand before God, drop an Amen.

English

🚨 Forget about 17 open tabs, 8 terminals, and AI agents stepping on each other

Arbor is the native desktop app that performance-obsessed devs have been waiting for.

Built in Rust + GPUI (the same engine as Zed), it gives you:

- Automatic Git worktrees from GitHub/GitLab issues

- Ultra-fast embedded terminals (with real truecolor support and managed processes)

- Direct chat with Claude, Codex, Ollama, and any OpenAI-compatible provider

- Real-time diffs, PR context, and full visibility into your running agents

All in a single beautiful, lightweight, native window.

No Electron.

No lag.

No drama.

REPO👇

Español

@RayFernando1337 Starting mission to test exactly that

First try 5.1.

Orch and validator 5.4 high / xhigh

Interesting to discover how it works entirely without Opus or Sonnet.

English

I think this will replace Opus/Sonnet for design.

Factory@FactoryAI

GLM-5.1 is now available in Droid.

English

@weswinder there is one more unlock you can do: run a job that scans the output of each agent with an LLM and tells the AI agent in the sidebar when an agent is stale (been doing it for the last ~9 months)

it unlocks autonomous parallelisation at huge scale

English

@caspian_1016 besides open source, what's the difference with @superdoteng ?

English

@barckcode What's really productive is using frontier models, and you can't run those unless you're a big lab; maybe it's better to team up and get team subscriptions.

Español

🤔 You know what would be cool? Getting 5 to 10 of us crazies who are building things full-time with AI together and setting up a mini community to pay for a good private GPU server, so we can burn tokens like mad with local models.

I think with 5 to 10 of us chipping in, it would cost next to nothing.

Would you sign up? I commit to setting up the server and making whatever models we want available with Ollama in 2 minutes, so you can access them privately from anywhere in no time.

Español

@ChaosEmergent @haider1 thought so too. maybe they mean architecture wise? or the type of training they did with 4o is similar to what they've done with the 5.x models?

English

@haider1 wait then what was gpt-4.5

I assumed that the 5-series were distilled from 4.5 then RLVR'd as smaller models

English

@RayanKrishnan could that be because providers like Gemini obfuscate their reasoning tokens by summarizing with another model?

English

Last point: remember how Llama 4 had no reasoning capability at all? That made us especially curious to look at Muse Spark's reasoning traces.

They're fundamentally different from other models. More like a human stream of consciousness. Less mechanical, more fluid, and notably more token-efficient.

English

@heliumbrowser how come when you zoom in and out theres no indicator like other browsers?

sometimes awkward to know if im at 100% zoom or not especially when designing websites

English

many new ways to make your browser feel "just right" dropped in helium today:

- centered address bar

- minimal address bar

- new dynamic layout

- improvements to vertical layout

- and experimental zen/frameless mode (flagged, bit buggy, but cool!)

helium.computer

English

@chatgpt21 We really don't need a 100T MOE.

We are going to optimize the data, architecture, training processes etc so that 1T models will be ASI ready.

and AI will help with that.

English

Hear me out.

A 100T dense model isn’t impossible anymore; it’s just deeply unnatural to serve at scale. Vera Rubin solves the fit problem: a 100T model is 200 TB, which comes to about 700 GPUs unquantized or about 170 GPUs at 4-bit, MEANING a few racks to hold it (depending on how many GPUs per rack).

Assume 1 million concurrent users at 20 tokens per second each: that is 20 million tokens per second system-wide. Even at ~1k to 5k tokens per second per GPU, you still need ~4k to 20k GPUs minimum, which realistically becomes tens of thousands of Vera Rubins once you factor in latency and batching limits.

Training time on Rubin: at 17.5 PFLOPS per GPU (FP4 peak), 100k GPUs still means 4 days for 1T tokens and 40 days for 10T tokens at perfect utilization. So the good news is that Rubin makes 100T dense easy to instantiate but not to serve massively (obviously), and Feynman will push hardware further!! But the direction is already clear: 100T+ models will be sparse and system-optimized rather than brute-forced dense. (In the beginning.) However, this is what I foresee being the AGI models we see in 2029.
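The figures in this post can be roughly reproduced with back-of-envelope arithmetic. The sketch below is only an illustration under assumptions that the post does not state: 2 bytes per parameter for the "unquantized" case, 288 GB of memory per GPU (chosen because it makes the ~700 and ~170 GPU counts work out), and the common 6 × parameters × tokens rule of thumb for training FLOPs. The function names are hypothetical.

```python
import math

# Assumptions (not stated in the post):
BYTES_PER_PARAM_16BIT = 2.0   # "unquantized" taken to mean 16-bit weights
BYTES_PER_PARAM_4BIT = 0.5    # 4-bit quantization
HBM_BYTES_PER_GPU = 288e9     # assumed memory per GPU
SECONDS_PER_DAY = 86_400


def gpus_to_hold(params, bytes_per_param, hbm_bytes=HBM_BYTES_PER_GPU):
    """GPUs needed just to fit the weights in memory."""
    return math.ceil(params * bytes_per_param / hbm_bytes)


def gpus_to_serve(users, tokens_per_user_per_s, tokens_per_gpu_per_s):
    """GPUs needed to meet aggregate token throughput."""
    return math.ceil(users * tokens_per_user_per_s / tokens_per_gpu_per_s)


def training_days(params, tokens, gpus, flops_per_gpu):
    """Training time at perfect utilization, using the 6*N*D rule of thumb."""
    total_flops = 6 * params * tokens
    return total_flops / (gpus * flops_per_gpu) / SECONDS_PER_DAY


PARAMS = 100e12  # 100T parameters

print(gpus_to_hold(PARAMS, BYTES_PER_PARAM_16BIT))   # 695, i.e. about 700
print(gpus_to_hold(PARAMS, BYTES_PER_PARAM_4BIT))    # 174, i.e. about 170
print(gpus_to_serve(1_000_000, 20, 5_000))           # 4000
print(gpus_to_serve(1_000_000, 20, 1_000))           # 20000
print(round(training_days(PARAMS, 1e12, 100_000, 17.5e15), 1))   # about 4 days
print(round(training_days(PARAMS, 10e12, 100_000, 17.5e15), 1))  # about 40 days
```

Under these assumptions the numbers line up with the post: the memory footprint sets the "few racks" floor, while the throughput requirement, not the fit, drives the GPU count into the thousands.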

Elon Musk@elonmusk

🎯

English