مازن وذكاء الآلات

1.1K posts

@Mazen_AIEx

Where silicon meets synapses

"Do not learn to code" is the worst career advice of the decade.

People are telling college students to skip Computer Science because AI will just automate it all. Andrew Ng just killed this myth at Stanford with a brilliant analogy.

When he tried to generate images with Midjourney, he typed: "make pretty pictures of robots" and got garbage. His collaborator, however, understood Art History. He knew the exact vocabulary of lighting, genre, and palette. He spoke the "language of art," and generated masterpieces.

Andrew Ng is seeing the exact same thing happen in software engineering right now. AI didn't replace the need to understand Computer Science. It made Computer Science the required vocabulary to control the AI. If you don't understand how computers actually work, you are just typing "make a pretty app" into Cursor and shipping fragile, unscalable logic.

Here is Andrew Ng's exact hiring hierarchy today:

- Level 1: 10 years of experience, but codes by hand (He won't hire them).
- Level 2: Fresh college grad, but highly fluent in AI-assisted coding (He hires them over the 10-year veteran).
- Level 3 (God Tier): Deeply understands CS fundamentals AND uses AI-assisted coding.

When humanity went from punch cards to keyboards, coding got easier, and more people coded. We are at that exact inflection point again. AI doesn't replace fundamentals. It multiplies them.

i often think about this..

someone at ANTHROPIC just showed CLAUDE finding ZERO DAY vulnerabilities in a live conference demo. claude found a zero day in Ghost, which has 50,000 stars on github and had never had a critical security vulnerability in its entire history... it found the blind SQL injection in 90 minutes, stole the admin api key, then did the exact same thing to the linux kernel
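For anyone unfamiliar with the term: a blind SQL injection leaks data through yes/no differences in how an application responds, rather than through direct query output. Below is a toy sketch of the idea using Python's built-in `sqlite3` and an in-memory database. The tables, the `k3y` secret, and every function name here are hypothetical, and this is not a reconstruction of the Ghost finding described in the post.

```python
import sqlite3
import string


def make_db():
    # Hypothetical schema: a public table plus a table holding a secret.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE posts (slug TEXT, title TEXT)")
    conn.execute("CREATE TABLE api_keys (secret TEXT)")
    conn.execute("INSERT INTO posts VALUES ('hello', 'Hello world')")
    conn.execute("INSERT INTO api_keys VALUES ('k3y')")
    return conn


def post_exists_vulnerable(conn, slug):
    # BUG (deliberate): user input is concatenated straight into the SQL text.
    query = f"SELECT 1 FROM posts WHERE slug = '{slug}'"
    return conn.execute(query).fetchone() is not None


def post_exists_safe(conn, slug):
    # Parameterized query: the input is bound as data, never parsed as SQL.
    query = "SELECT 1 FROM posts WHERE slug = ?"
    return conn.execute(query, (slug,)).fetchone() is not None


def leak_secret(conn, oracle, max_len=16):
    # Recover the secret one character at a time, using only the
    # True/False answer of the "does this post exist?" endpoint.
    alphabet = string.ascii_lowercase + string.digits
    recovered = ""
    for pos in range(1, max_len + 1):
        for ch in alphabet:
            payload = (
                f"hello' AND (SELECT substr(secret, {pos}, 1) "
                f"FROM api_keys) = '{ch}"
            )
            if oracle(conn, payload):
                recovered += ch
                break
        else:
            # No character matched: we are past the end of the secret,
            # or the oracle is not injectable.
            break
    return recovered


if __name__ == "__main__":
    print(repr(leak_secret(make_db(), post_exists_vulnerable)))
    print(repr(leak_secret(make_db(), post_exists_safe)))
```

Against the concatenating version the loop recovers the toy secret; against the parameterized version the payload is just an unusual slug that matches nothing, so nothing leaks.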

OPENCLAW + OLLAMA CAN NOW REPLACE YOUR AI API BILL WITH LOCAL AGENTS RUNNING ON YOUR OWN COMPUTER. No cloud servers, no sneaky API costs, and your private data stays on your machine.

theregister: Linux kernel czar says AI bug reports aren't slop anymore.

AI now finds actual bugs, suggests working patches, and returns feedback before a human reviewer even opens the patch.

Things have changed, Kroah-Hartman (long-term Linux kernel maintainer) said. "Something happened a month ago, and the world switched. Now we have real reports." It's not just Linux, he continued. "All open source projects have real reports that are made with AI, but they're good, and they're real."

Security teams across major open source projects talk informally and frequently, he noted, and everyone is seeing the same shift. "All open source security teams are hitting this right now."

No one is quite sure what's behind it. Asked what changed, Kroah-Hartman was blunt: "We don't know. Nobody seems to know why. Either a lot more tools got a lot better, or people started going, 'Hey, let's start looking at this.' It seems like lots of different groups, different companies."

What is clear is the scale. "For the kernel, we can handle it," he said. "We're a much larger team, very distributed, and our increase is real – and it's not slowing down. These are tiny things, they're not major things, but we need help on this for all the open source projects."

Smaller projects, he implied, have far less capacity to absorb a sudden flood of plausible AI-generated bug reports and security findings – at least now they're real bugs and not garbage ones.

Source: theregister .com/2026/03/26/greg_kroahhartman_ai_kernel/

A lot of folks talk about "escaping the permanent underclass". If AGI pans out, the future class divide won't be based on wealth, but on cognitive agency. There will be a "focus class" (those who control their attention and actually do things) and a "slop class" (those whose reward loops are fully RL-managed by AI)

Tobi Lutke explains what the VCs who passed on Shopify got wrong

Tobi recounts pitching Shopify to VCs on Sand Hill Road a few years after founding Shopify. Investors passed because they thought the addressable market was too small. At the time, there were about 40,000-50,000 online stores, and even if Shopify captured 50% of the market, that still wouldn’t be a venture-scale business.

When Tobi ran into the VC partner a few years ago, the partner asked Tobi what he missed (Shopify is valued at almost $100 billion today). Tobi explained:

“You were actually correct, but what you didn’t realize was that Shopify was the solution to the very problem you identified. The reason there was only 40,000 online stores was because it was hard, expensive, and everyone who tried ran into all these brick walls of complexity, which Shopify, one after another, smoothed over and made simple to do.”

Tobi believes this is a common mistake:

“What a lot of free-market thinkers don’t understand is that between the demand and eventual supply lies friction. And I actually think that friction is probably the most potent force for shaping the planet that people just generally do not acknowledge… That was my theory when I turned my snowboard store into Shopify: there was a lot more people like me except there was too much friction which we needed to solve. And Shopify has proven out that every time we make the process simpler, there’s more consumption. At this point, we have a million merchants on Shopify, which is a mind-blowing number. So friction is a major component, and it’s something that software is uniquely good at reducing.”

Video source: @danmartell (2019)

- Drafted a blog post
- Used an LLM to meticulously improve the argument over 4 hours.
- Wow, feeling great, it’s so convincing!
- Fun idea let’s ask it to argue the opposite.
- LLM demolishes the entire argument and convinces me that the opposite is in fact true.
- lol

The LLMs may elicit an opinion when asked but are extremely competent in arguing almost any direction. This is actually super useful as a tool for forming your own opinions, just make sure to ask different directions and be careful with the sycophancy.
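The "ask different directions" habit can be made routine with a few lines of code. This is a minimal sketch, not a tested workflow: the prompt wording is an assumption, and `ask` is a hypothetical stand-in for whatever LLM client you use (any function that takes a prompt string and returns a reply string).

```python
from typing import Callable, Dict

# Assumed prompt wording; adjust to taste.
STANCES = {
    "for": "Make the strongest possible case FOR the argument in this draft.",
    "against": "Make the strongest possible case AGAINST the argument in this draft.",
}


def build_prompts(draft: str) -> Dict[str, str]:
    # One prompt per stance, each carrying the same draft text.
    return {
        stance: f"{instruction}\n\nDraft:\n{draft}"
        for stance, instruction in STANCES.items()
    }


def argue_both_sides(draft: str, ask: Callable[[str], str]) -> Dict[str, str]:
    # `ask` is a hypothetical LLM call supplied by the caller.
    # Each stance is sent as a separate request so that one answer
    # does not anchor the other.
    return {stance: ask(prompt) for stance, prompt in build_prompts(draft).items()}


if __name__ == "__main__":
    # Stand-in for a real model: just echoes the first line of the prompt.
    fake_ask = lambda prompt: prompt.splitlines()[0]
    for stance, reply in argue_both_sides("Remote work beats offices.", fake_ask).items():
        print(stance, "->", reply)
```

Reading the two replies side by side is the point: if both sound equally convincing, the draft's persuasiveness probably says more about the model's fluency than about the argument.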

Okay, @gdb is team CLI all the way. @garrytan thinks MCPs suck. So we hit the streets of SF to see if the city agreed.

We posed a simple question: MCP or CLI?

- Basically everyone under the age of 35 said CLI
- One person said MCP was as bloated as Java
- & unsurprisingly, numerous people told us to touch grass

Final score - MCP: 3 vs CLI: 17

SF has spoken, and @composio listened. Our universal CLI is now live!

Drop your best CLI vs MCP hot take in the comments and we'll send the best ones some very sick gear 👀

Link to try our CLI in the next thread ⬇️

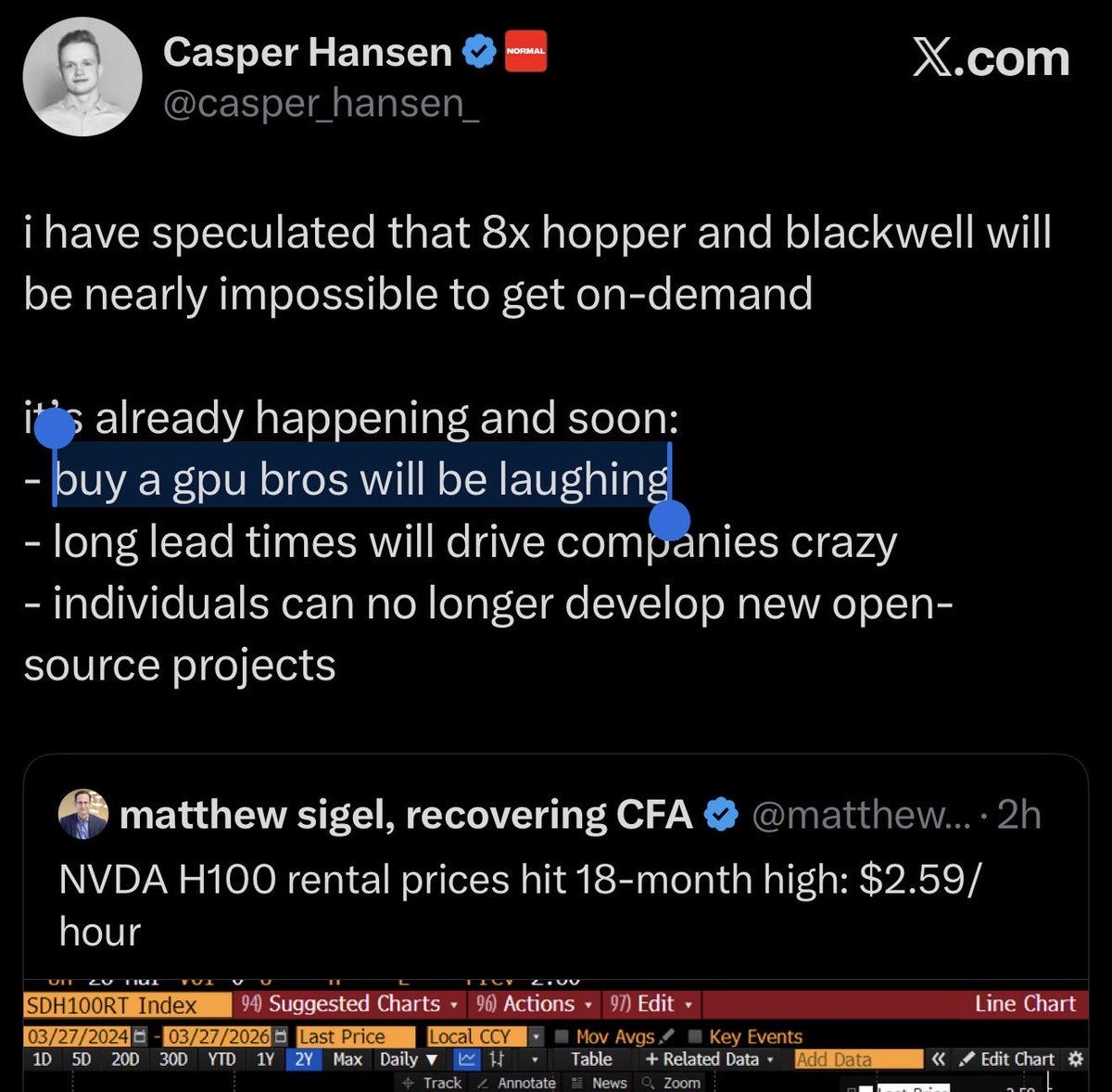

i have speculated that 8x hopper and blackwell will be nearly impossible to get on-demand

it’s already happening and soon:

- buy a gpu bros will be laughing
- long lead times will drive companies crazy
- individuals can no longer develop new open-source projects