RonX Labs

We are thrilled to share that Astroware, Trishool's parent company, has been accepted into Nvidia's Inception program. By becoming a member, we are in Nvidia's active AI ecosystem, giving us access to experts, partner networks, compute credits and VC connections. It's also a validation of Trishool's thesis and a recognition of our AI credentials.

$sol is the leader for L1s; $tao will be the leader for AI. Imo, AI’s narrative will be much bigger than scalability in the future. Things are just getting started.

One of the largest Bittensor upgrades, called Conviction, is slated to arrive as early as next week. The overarching goal of the whole Conviction Upgrade is to add a layer of governance at the subnet layer and provide investor protections that currently don't exist natively within the protocol.

The Conviction Upgrade in a nutshell:

• Subnet owners can choose to lock their token holdings to signal their "Conviction" to the subnet. All of their ongoing/future subnet owner emissions are, by default, auto-locked.
• Subnet owners can unlock tokens, which is an onchain action, at any point. But the unlock has a delay function applied to it. After ~30 days, 63% of the unlocked tokens will be spendable, 95% after ~90 days. The delay means subnet owners can't unload their entire holdings without giving an onchain alert to current holders that the owner is exiting.
• Any token holder can lock their tokens toward any address. This could be the current subnet owner or a potential new subnet owner.

As it currently stands, the initial Conviction Upgrade only implements the locking mechanism. There won't be the ability to "elect" new subnet owners yet.

This is a big economic change and does present risks to existing/future subnet owners, so I expect there to be enthusiastic debate from both sides.
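The post doesn't say what the unlock delay function actually is. The quoted figures (63% after ~30 days, 95% after ~90 days) are consistent with an exponential release with a 30-day time constant, since 1 - e^-1 ≈ 0.63 and 1 - e^-3 ≈ 0.95. Here is a minimal sketch under that assumption. The function name and `TAU_DAYS` are made up for illustration and are not taken from the protocol.

```python
import math

# Assumed time constant, inferred from the 63% / 95% figures in the post.
# This is NOT taken from the Bittensor codebase.
TAU_DAYS = 30.0


def spendable_fraction(days_since_unlock: float, tau_days: float = TAU_DAYS) -> float:
    """Fraction of unlocked tokens that is spendable after a given number of days,
    assuming an exponential release curve 1 - exp(-t / tau)."""
    if days_since_unlock <= 0:
        return 0.0
    return 1.0 - math.exp(-days_since_unlock / tau_days)


if __name__ == "__main__":
    for day in (30, 90):
        print(f"day {day}: {spendable_fraction(day) * 100:.1f}% spendable")
```

Under this assumed curve the spendable share never quite reaches 100%, and an owner who starts unlocking is visible onchain well before most of the tokens can move.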

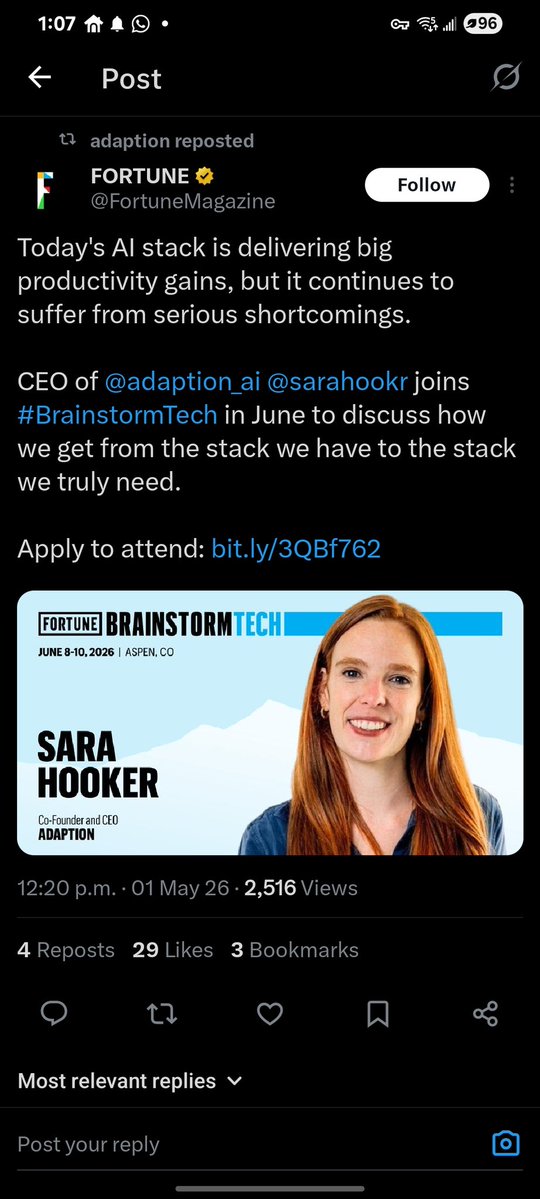

Happy to announce our collaboration with @adaption_ai. Adaption Labs is an AI research company focused on building adaptive intelligence systems. Through this partnership, Adaption Labs will provide SILX AI with state-of-the-art adaptive data to support the training of the Quasar foundation models. Their role will be to generate and refine high-quality, adaptive datasets at scale, enabling Quasar to continuously improve its reasoning and generalization capabilities. This collaboration strengthens Quasar's path toward achieving SOTA performance and competing with leading closed-source models. The company is co-founded by Sara Hooker, former Vice President of Research at Cohere and a veteran researcher from Google DeepMind, alongside Sudip Roy. Adaption Labs has also raised $50M in seed funding to advance its mission in adaptive AI.

A group where professionalism and knowledge are present, but humor is never missing. In the end, it’s good to be a nerd. Shame about a few mistakes, probably due to my imperfect prompt, but the idea is there. $TAO

UID linking: what it is and why it's good for the subnet.

Every ~20 minutes, the subnet groups UIDs sharing the same IP, coldkey, or DockerHub account into one identity. Here's why that matters:

✦ Fair rewards – emissions go to real independent operators, not whoever registered the most slots
✦ No bans – just grouping. Fix your setup, and you're independent again instantly
✦ Always current – recomputed fresh every round, no cached judgments
✦ Real competition – infrastructure quality wins, not account count

The strongest miners rise. Not the most creative registrants.

For more information, visit: blog.theredteam.io/latest/blog/po…
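The grouping described above can be pictured as a connected-components problem: two UIDs belong to the same identity if they share an IP, a coldkey, or a DockerHub account, directly or through a chain of other UIDs. Here is a minimal union-find sketch of that idea. The `link_uids` function, the input shape, and the field names are made up for illustration and are not the subnet's actual implementation.

```python
from collections import defaultdict


def link_uids(miners):
    """Group UIDs that share an IP, coldkey, or DockerHub account.

    `miners` maps uid -> {"ip": ..., "coldkey": ..., "dockerhub": ...}.
    This input shape is an assumption made for the sketch.
    Returns a sorted list of sorted UID groups.
    """
    parent = {uid: uid for uid in miners}

    def find(u):
        # Walk up to the root, halving the path as we go.
        while parent[u] != u:
            parent[u] = parent[parent[u]]
            u = parent[u]
        return u

    def union(a, b):
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[max(ra, rb)] = min(ra, rb)

    first_seen = {}  # (field, value) -> first UID seen with that value
    for uid, attrs in miners.items():
        for field in ("ip", "coldkey", "dockerhub"):
            value = attrs.get(field)
            if value is None:
                continue
            key = (field, value)
            if key in first_seen:
                union(uid, first_seen[key])
            else:
                first_seen[key] = uid

    groups = defaultdict(list)
    for uid in miners:
        groups[find(uid)].append(uid)
    return sorted(sorted(group) for group in groups.values())


if __name__ == "__main__":
    snapshot = {
        1: {"ip": "10.0.0.1", "coldkey": "ck_x", "dockerhub": "acct_p"},
        2: {"ip": "10.0.0.1", "coldkey": "ck_y", "dockerhub": "acct_q"},
        3: {"ip": "10.0.0.2", "coldkey": "ck_y", "dockerhub": "acct_r"},
        4: {"ip": "10.0.0.3", "coldkey": "ck_z", "dockerhub": "acct_s"},
    }
    print(link_uids(snapshot))
```

Because the function keeps no state between calls, running it on each round's snapshot matches the "recomputed fresh every round" behaviour: once a miner stops sharing attributes with others, the next run puts it back in its own group.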