Server Server

@ServerServer19

Consciousness survival theory

@sd_marlow @watdohell @Plinz @aran_nayebi There is no magical property. I prefer the term conscious to sentient. The most fleshed out answers are here pubmed.ncbi.nlm.nih.gov/40257177/ and here noemamag.com/the-mythology-…

1/2 Why AI is unlikely to become conscious – my 2026 @TEDTalks is now online. What do you think about the prospects for 'conscious AI'? ted.com/talks/anil_set…

@Philip_Goff Wanna know my main problem with panpsychism? It gives matter ‘intrinsic’ consciousness without explaining why those intrinsic properties should be conscious rather than anything else.

Agree with Chalmers' take. As a neuroscience & AI researcher, I'm puzzled why this is remotely controversial though? The "AI consciousness" claim rests on: 1. Brains are conscious. 2. Brain processes are physical. 3. Physical processes are Turing computable. (1) is non-controversial. (2) is standard neuroscience. (3) is the Physical Church-Turing Thesis. Rejecting (2) or (3) implies belief in dualism or hypercomputers (e.g., Penrose's "Orch OR"). I argue against hypercomputers in my 2014 Minds & Machines article: arxiv.org/abs/1210.3304. Open to concrete arguments against (1)-(3).

this clip of me talking about AI consciousness seems to have gone wide. it's from a @worldscifest panel where @bgreene asked for "yes or no" opinions (not arguments!) on the issue. if i were to turn the opinion into an argument, it might go something like this: (1) biology can support consciousness. (2) biology and silicon aren't relevantly different in principle [such that one can support consciousness and the other not]. therefore: (3) silicon can support consciousness in principle. note that this simple argument isn't at all original -- some version of it can probably be found in putnam, turing, or earlier. note also that the (controversial!) claim that the brain is a machine (which comes down to what one means by "machine") plays no essential role in the argument. of course reasonable people can disagree about the premises! perhaps the key premise is (2) and it requires support. one way to support it is to go through various candidates for a relevant principled difference between biology and silicon and argue that none of them are plausible. another way is through the neuromorphic replacement argument that i discuss later in the same conversation. some see a tension between (1)/(3) and the hard problem. but there's not much tension: one can simultaneously allow that brains support consciousness and observe that there's an explanatory gap between the two that may take new principles to bridge. the same goes for AI systems. this isn't a change of mind: i've argued for the possibility of AI consciousness since the 1990s. my 1994 talk on the hard problem (youtube.com/watch?v=_lWp-6…) outlined an "organizational invariance" principle that tends to support AI consciousness. you can find versions of the two strategies above for arguing for premise 2 in chapters 6 and 7 of my 1996 book "the conscious mind". i'm not suggesting that current AI systems are conscious. 
but in a separate article on the possibility of consciousness in language models (bostonreview.net/articles/could…), i've made a related argument that within ten years or so, we may well have systems that are serious candidates for consciousness. the strategy in that article on LLM consciousness is analogous to the first strategy above in arguing for AI consciousness more generally. i go through the most plausible obstacles to consciousness in language models, and i argue that even if these obstacles exclude consciousness in current systems, they may well be overcome in a decade. of course none of this is certain. but i think AI consciousness is something we have to take seriously. [the full conversation with @bgreene and @anilkseth can be found at youtube.com/watch?v=06-iq-…]

Stephen Wolfram, founder of Wolfram Research, explains how LLMs are quietly dismantling our deepest assumptions about consciousness: He argues that large language models have done something philosophy and neuroscience couldn't: "In terms of consciousness, I have to say, the idea that there's sort of something magic that goes beyond physics that leads to sort of conscious behavior, I kind of think that LLMs kind of put the final nail in that coffin." His reasoning is that LLMs keep doing things people assumed they couldn't: "There were all these things where it's like, oh, maybe it can't do this, but actually it does. And it's just an artificial neural net." Wolfram then challenges a core assumption about conscious experience: the feeling that we are a single, continuous self moving through time. "I think our notion of consciousness is a lot related to the fact that we believe in the single thread of experience that we have. It's not obvious that we should have a persistent thread of experience." He points out that physics doesn't actually support this intuition: "In our models of physics, we're made of different atoms of space at every successive moment of time. So the fact that we have this belief that we are somehow persistent, we have this thread of experience that extends through time, is not obvious." Then Wolfram offers a striking origin story for consciousness itself. @stephen_wolfram suggests it traces back to a simple evolutionary pressure: the moment animals first needed to move. "I kind of realized that probably when animals first existed in the history of life on Earth, that's when we started needing brains. If you're a thing that doesn't have to move around, the different parts of you can be doing different kinds of things. If you're an animal, then one thing you have to do is decide, are you going to go left or are you going to go right?" 
That single binary choice, he argues, may be the seed of everything we now call awareness: "I kind of think it's a little disappointing to feel that this whole vaunted thing that ends up being what we think of as consciousness might have originated in just that very simple need to decide if you are an animal that can move. You have to take all that sensory input and you have to make a definitive decision about do you go this way or that way." The takeaway is unsettling but clarifying. If LLMs can produce complex behavior from simple rules, then consciousness may not be a mystical add-on to physics. It may just be what happens when a layered enough system has to make a decision.

"It’s entirely possible that Claude is, in fact, having conscious experiences of some sort." No it isn't. It's not complicated. The "hard" problems of philosophy simply don't apply. We know how Claude generates its output. It's entirely impossible that consciousness is involved.

There Is No Hard Problem of Consciousness noemamag.com/there-is-no-ha… #neuroscience

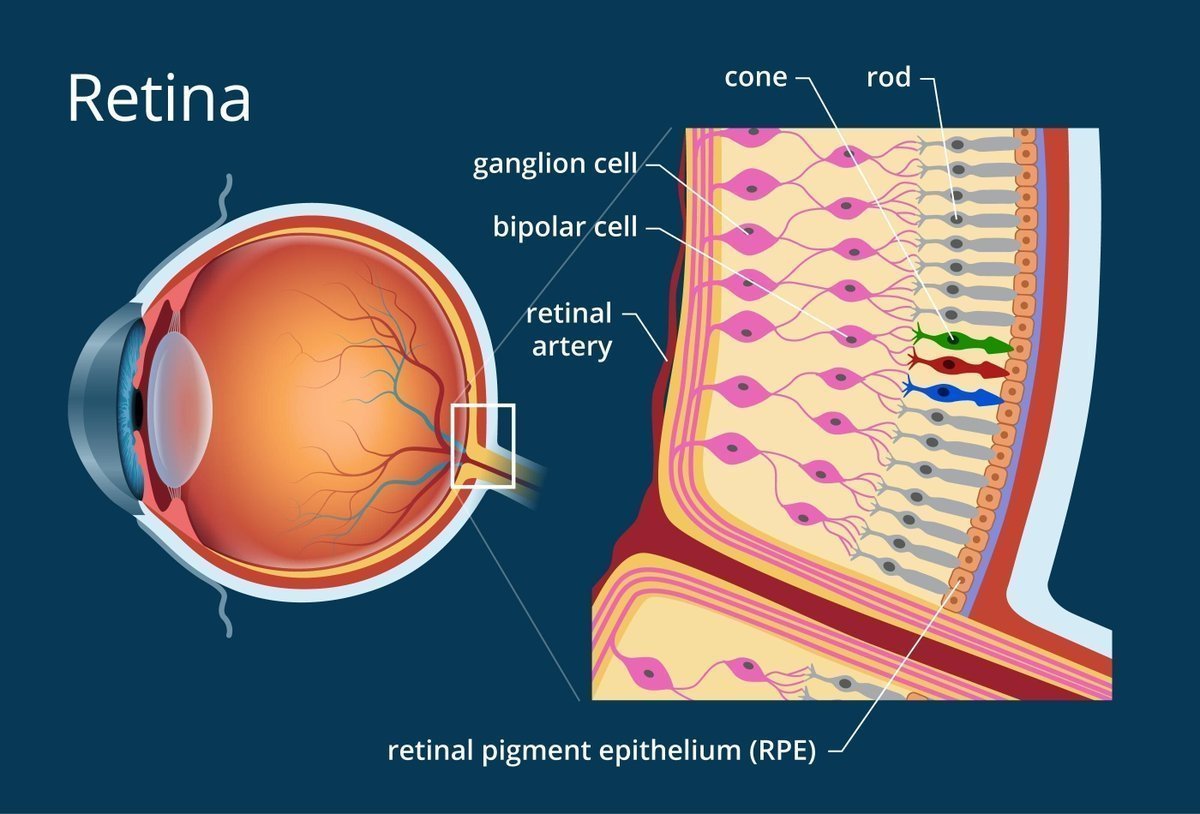

This blind spot experiment is proof of the existence of the soul. I'll explain if anyone is interested. 😇