Adam Stuckert


This release makes me unreasonably happy since I wasn't involved at all - @vincent_koc and the maintainer team did a great job. I'll be back soon to work on OpenClaw; today/tomorrow I'm prepping for @TEDTalks in Vancouver. 🇨🇦

The Liberal party has Patrick Pichette, a former Senior VP of Google (who lives in Europe, by the way), on stage saying that if Canadians want to leave Canada to work in the US, they need to pay an exit tax of half a million dollars. The guy did the very same thing to get a Microsoft job decades ago and paid 30 bucks. Now he wants young Canadians to be trapped here. The Liberals are nuts.

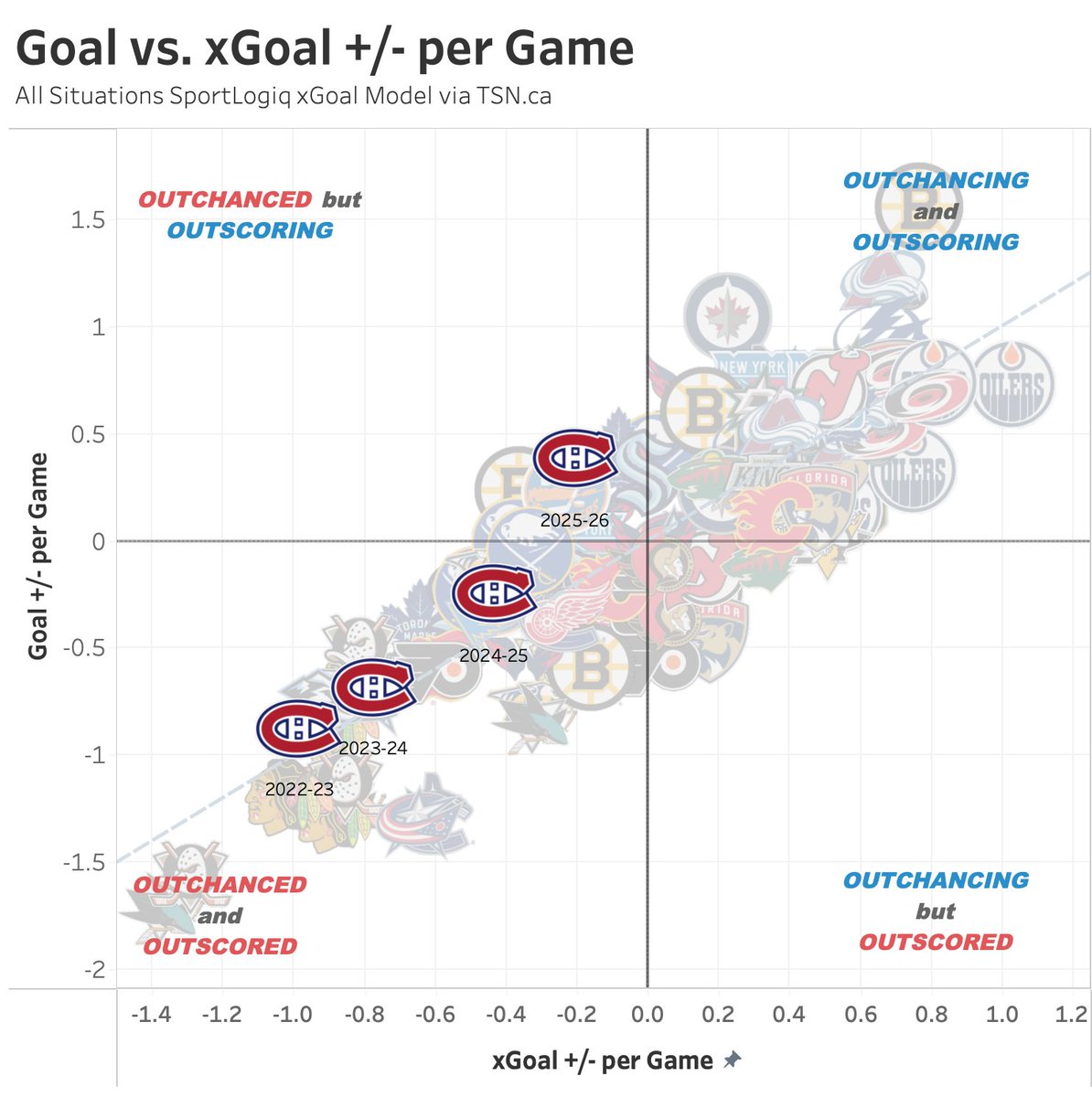

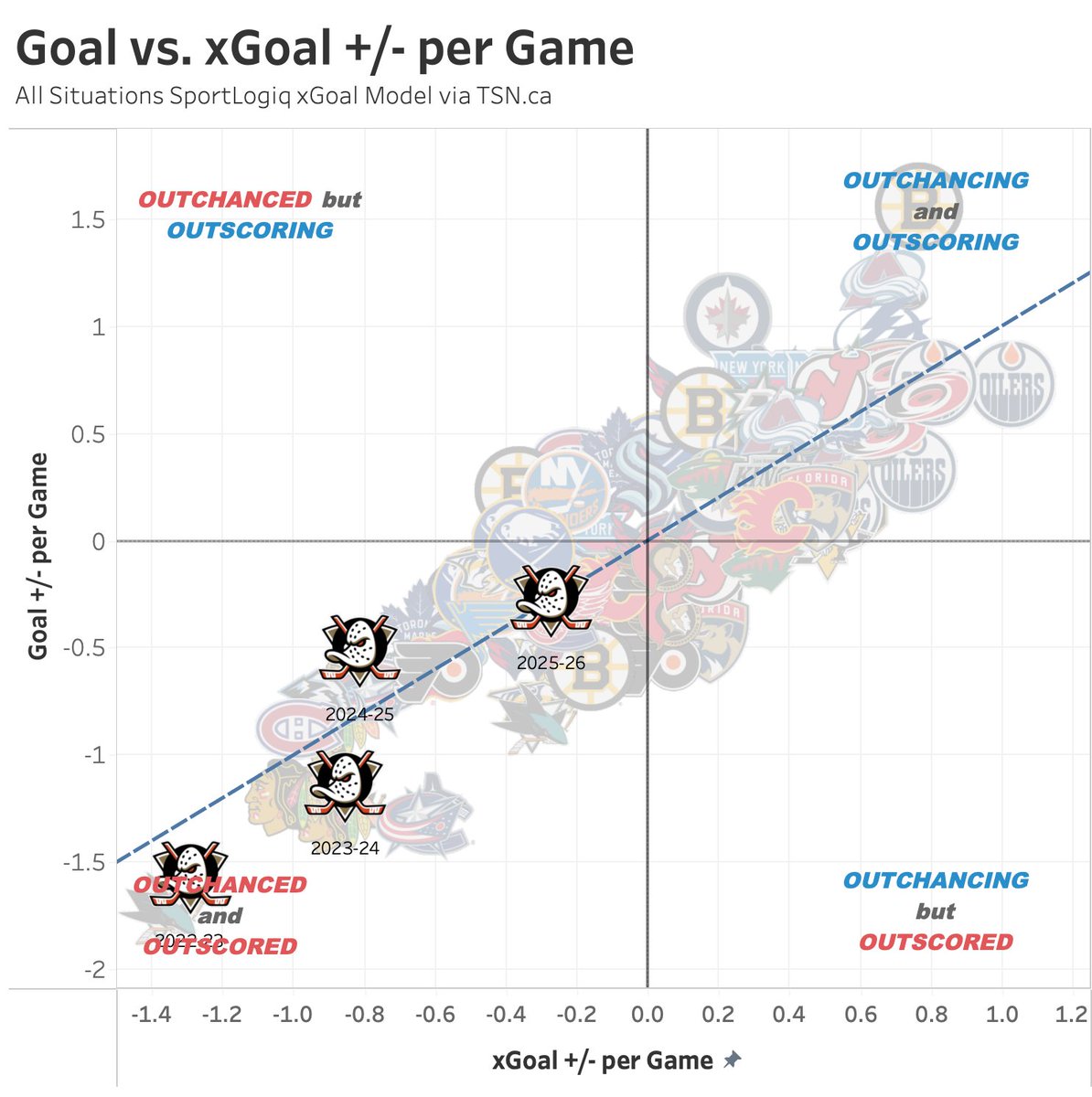

Final Goal vs. xGoal differential numbers from SportLogiq this season, shared via TSN

The degree to which you are awed by AI is perfectly correlated with how much you use AI to code.

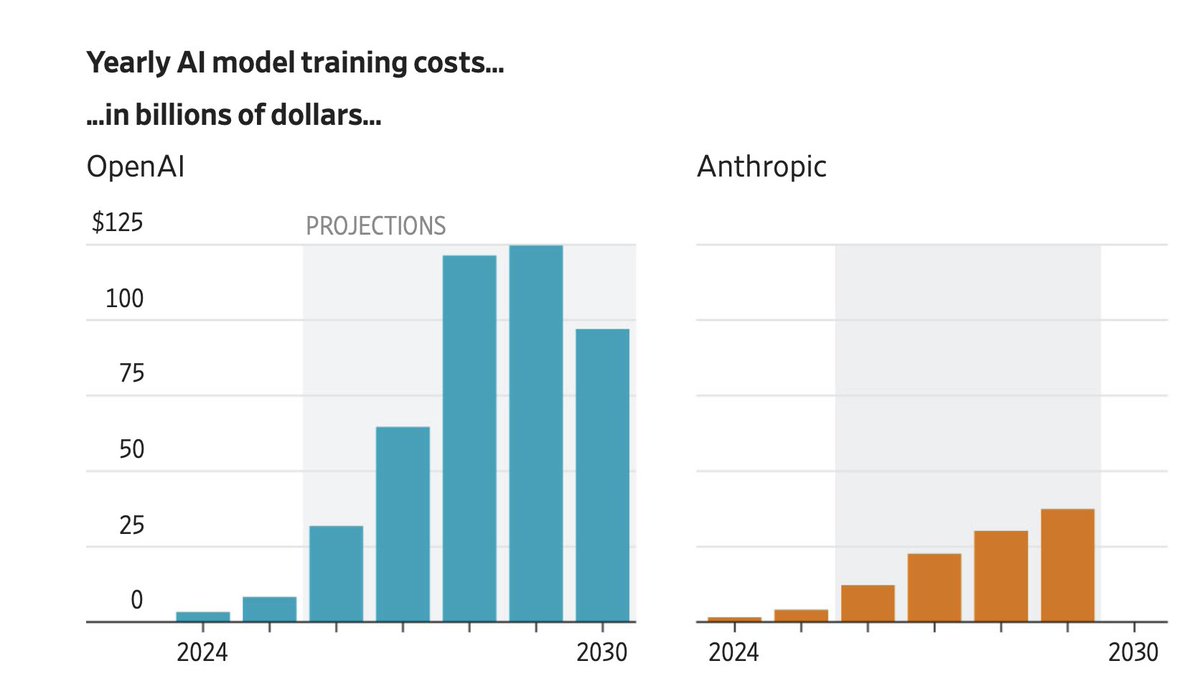

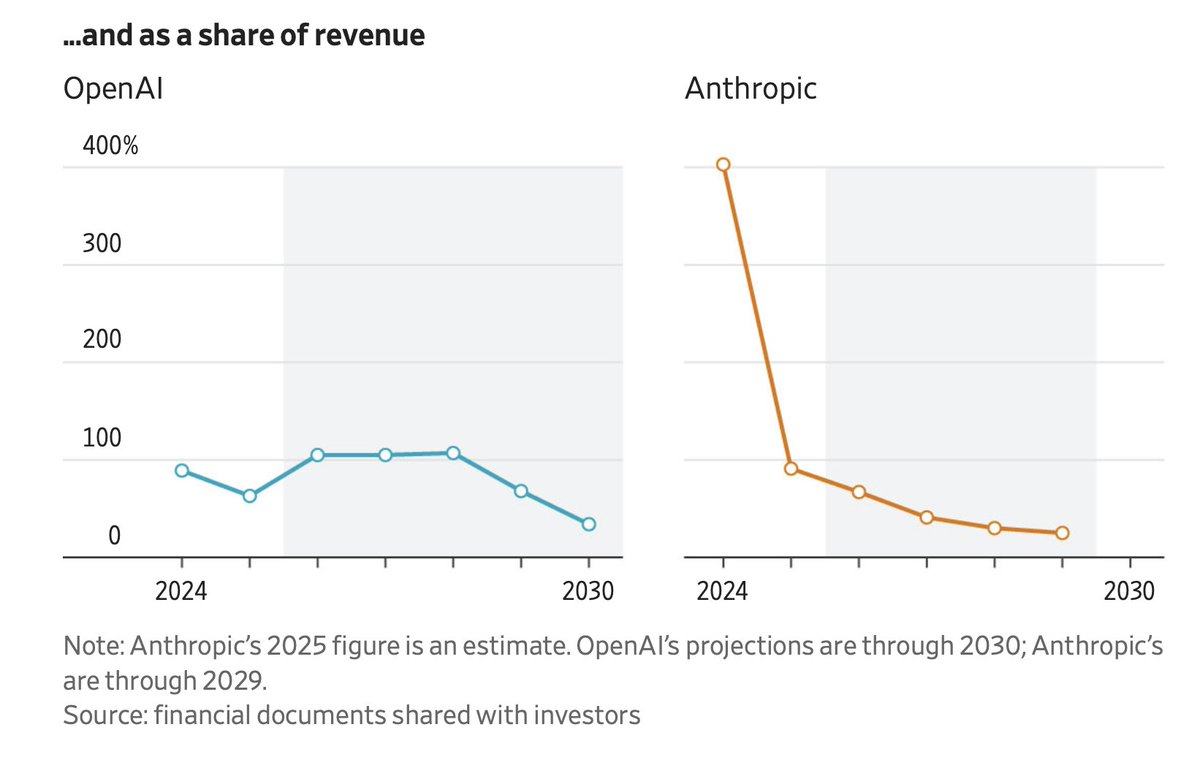

This is WILD! OpenAI's own CFO thinks the company isn't ready to go public, but Sam Altman doesn't want to hear it. He committed $600 billion in spending over five years, told investors the company will burn over $200 billion before making a single dollar of profit, and privately set a goal to IPO before the end of this year. His CFO, Sarah Friar, started telling colleagues the numbers don't add up and the organization isn't ready for public markets. Revenue growth is already slowing, and the cost to run OpenAI's own AI models quadrupled in a single year, crushing margins from 40% down to 33%. Altman's response: he stopped inviting her to meetings. The people in the room noticed immediately, describing her absence as "notable and awkward". He also restructured who she reports to, so the CFO of one of the most valuable companies in history no longer has a direct line to the CEO. Now look at the $122 billion funding round that everyone celebrated last week. The money mostly comes from Amazon and Nvidia, the same companies OpenAI pays for chips and cloud computing every single month. They are investing in the company they're already billing. A large portion of Amazon's commitment doesn't even arrive unless OpenAI completes an IPO or achieves AGI. And the Stargate project, the $500 billion data center empire announced at the White House, has no staff, no built data centers, and has been stalled for over a year. OpenAI tried to own its own data centers and build its own infrastructure, but banks said no. Lenders refused to finance a company burning billions annually with no proven path to profit. So OpenAI went back to renting from the same suppliers who are now also its investors.