Nitish Mutha ⚡️

@nitmusai

Co-founder and CTO @GenieAI - Building Agentic Lawyer to do your legals. @UCL alum.

Introducing Claude Design by Anthropic Labs: make prototypes, slides, and one-pagers by talking to Claude. Powered by Claude Opus 4.7, our most capable vision model. Available in research preview on the Pro, Max, Team, and Enterprise plans, rolling out throughout the day.

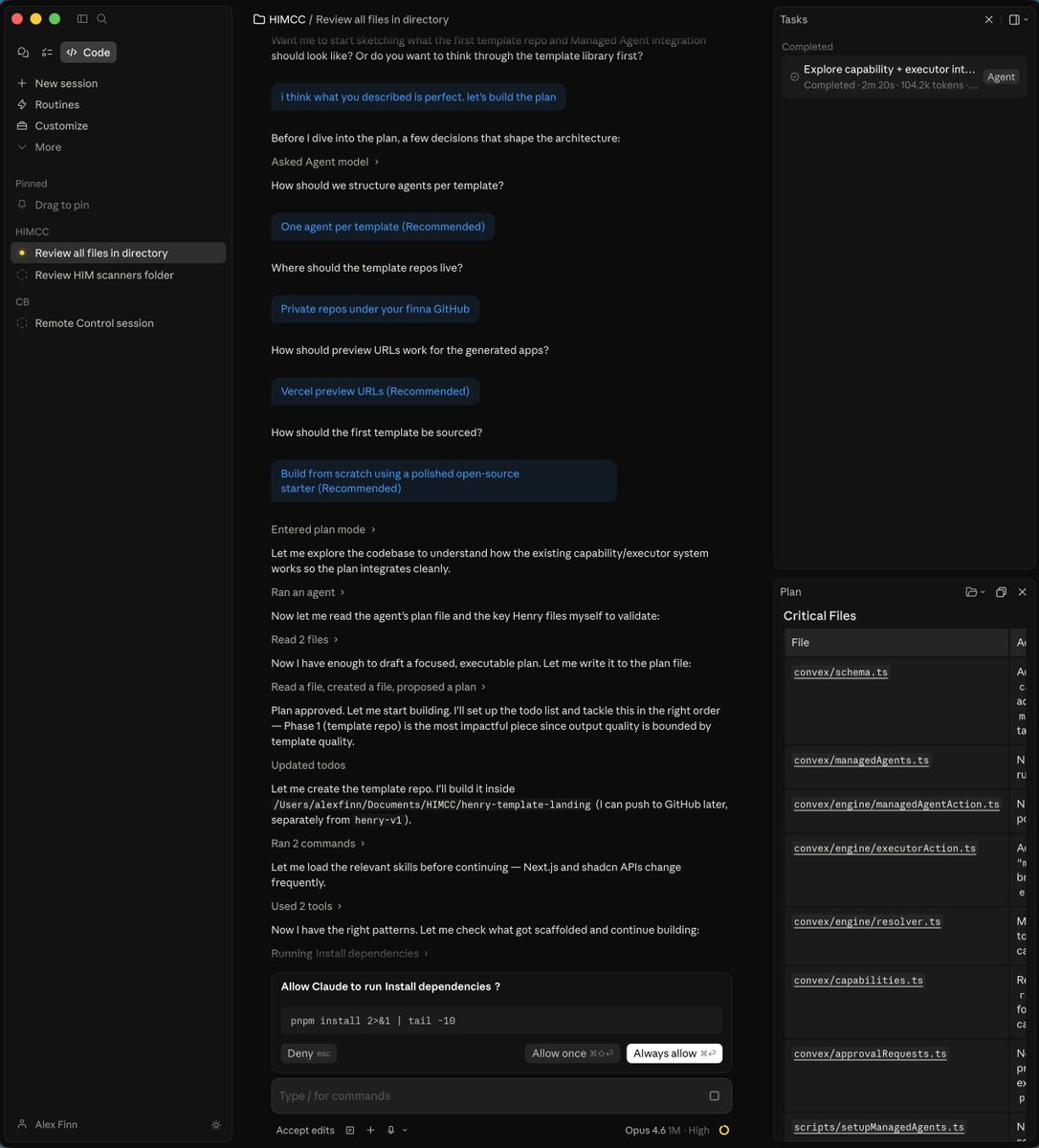

We've redesigned Claude Code on desktop. You can now run multiple Claude sessions side by side from one window, with a new sidebar to manage them all.

Subagents have arrived in Gemini CLI! 🤖🚀

Create your own custom subagents in @geminicli! Subagents are specialized, expert agents that the main agent can delegate work to.

📦 Subagents have their own set of tools, MCP servers, system instructions, and context window.
🏷️ Use @agent to explicitly delegate to a subagent.
🧹 Keeps the main context window clean.
⚡️ Speed up work by running agents in parallel.

Read more in the launch blog below 👇