Steffen Röcker

3.6K posts

@sroecker

OG local LLaMA shill. Sr. Solution Architect @RedHat, ex particle physicist. Born @ 347 ppm CO₂. Personal account, potentially unaligned.

TurboQuant has drawn a lot of attention recently, but the accompanying evals didn't tell the full story. So we ran what I believe is the first comprehensive study of TurboQuant: where it helps, where it falls short, and how it impacts accuracy, latency, and throughput. Findings:

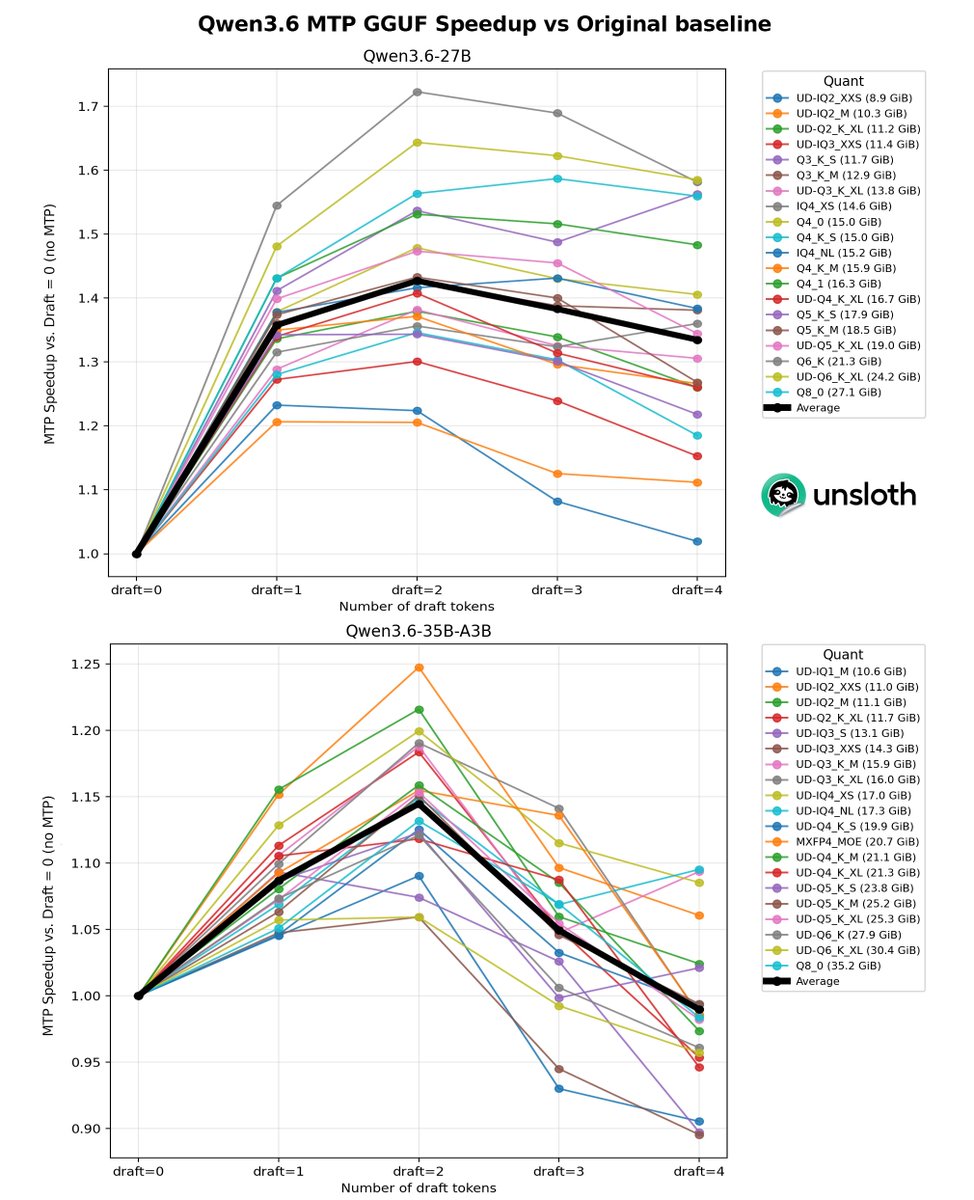

Qwen3.6 35B A3B model. 55+ tokens/sec. $300 GPU. No, this isn't a server card. It's an RTX 4060 Ti 8GB. Previously I posted that I got 41 t/s on this GPU, and that post blew up. I went back and made it 34% faster. And now the speed doesn't drop with context depth at all. New benchmarks + what changed 🧵

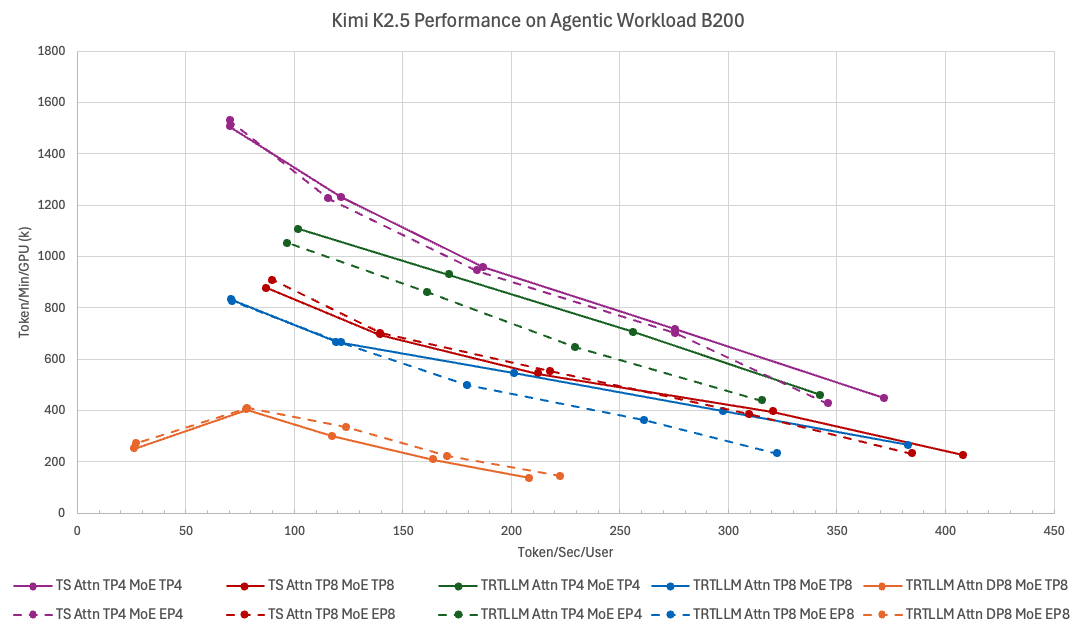

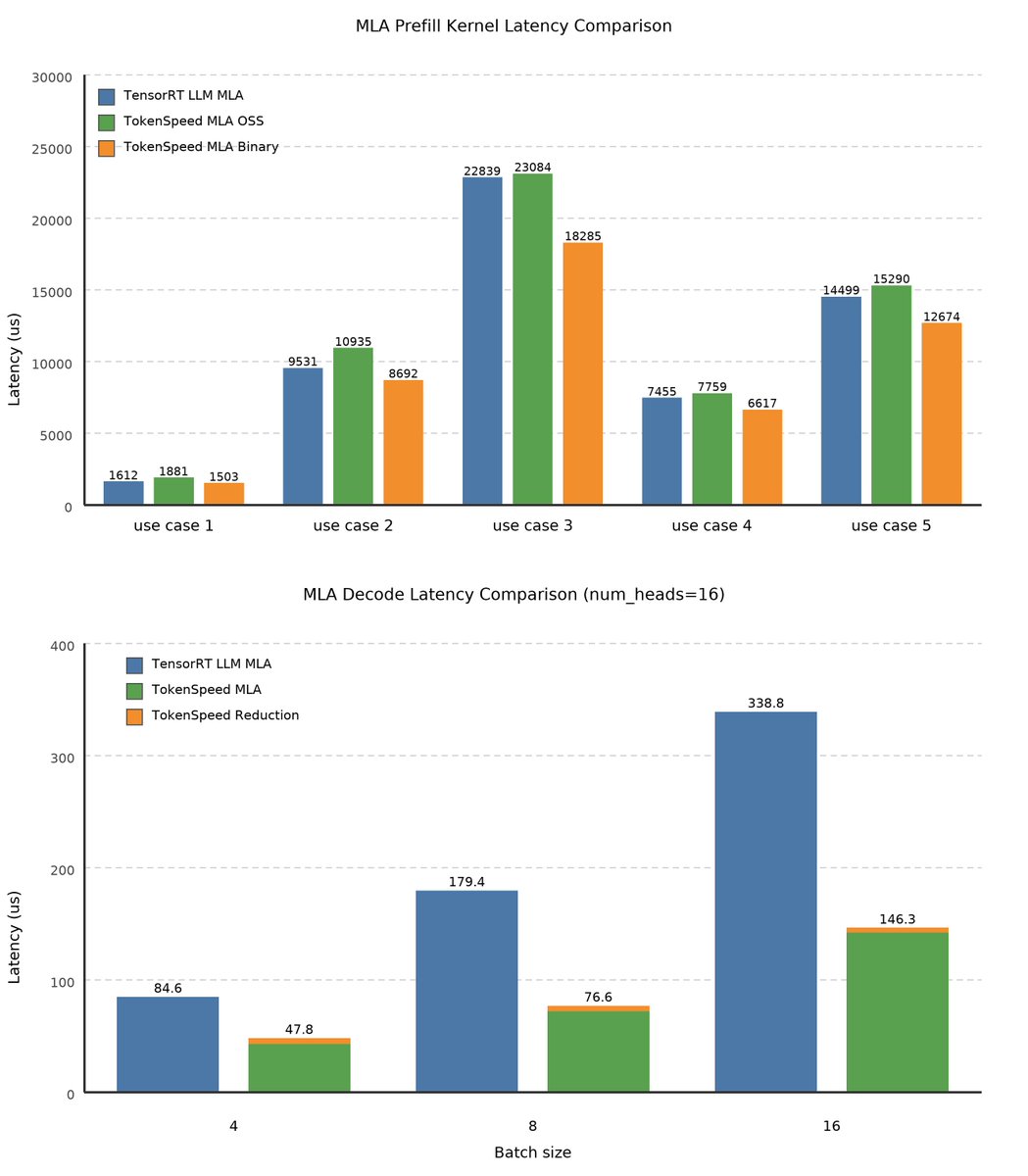

Introducing TokenSpeed, a speed-of-light LLM inference engine.

> TensorRT LLM level performance
> vLLM level usability
> Built by a lean and mission-driven team in two months
> MIT license, open-source

github.com/lightseekorg/t… lightseek.org/blog/lightseek…