Ryan Sullivan retweeted

Claude Cowork, Mythos, and the Future of Software: my conversation with @felixrieseberg, who leads Cowork at @AnthropicAI

00:00 Intro

01:53 Claude Mythos Preview and the “step-function change”

06:16 Why Anthropic is treating Mythos differently

11:19 The real story behind Claude Cowork’s “10-day” build

12:42 Why Anthropic realized Claude Code needed a non-technical version

15:44 What Claude Cowork actually is

17:03 Under the hood: virtual machines, tools, skills

18:36 Where Cowork’s memory actually lives

19:26 How Cowork connects to files, apps, and the internet

20:45 Why Felix thinks the local computer is under-appreciated

24:49 Trust: how do you get users comfortable with AI agents?

28:45 What UX actually means for AI agents

31:27 Anthropic Cowork's roadmap is only one month long

34:12 Building 100 prototypes

35:10 If execution is free, what becomes the bottleneck?

37:25 Does it come down to taste?

40:12 The hardest part of building Claude Cowork

41:43 Advice for founders building AI agents

44:21 SaaSpocalypse: what’s left for software startups

49:30 Where AI agents are going next

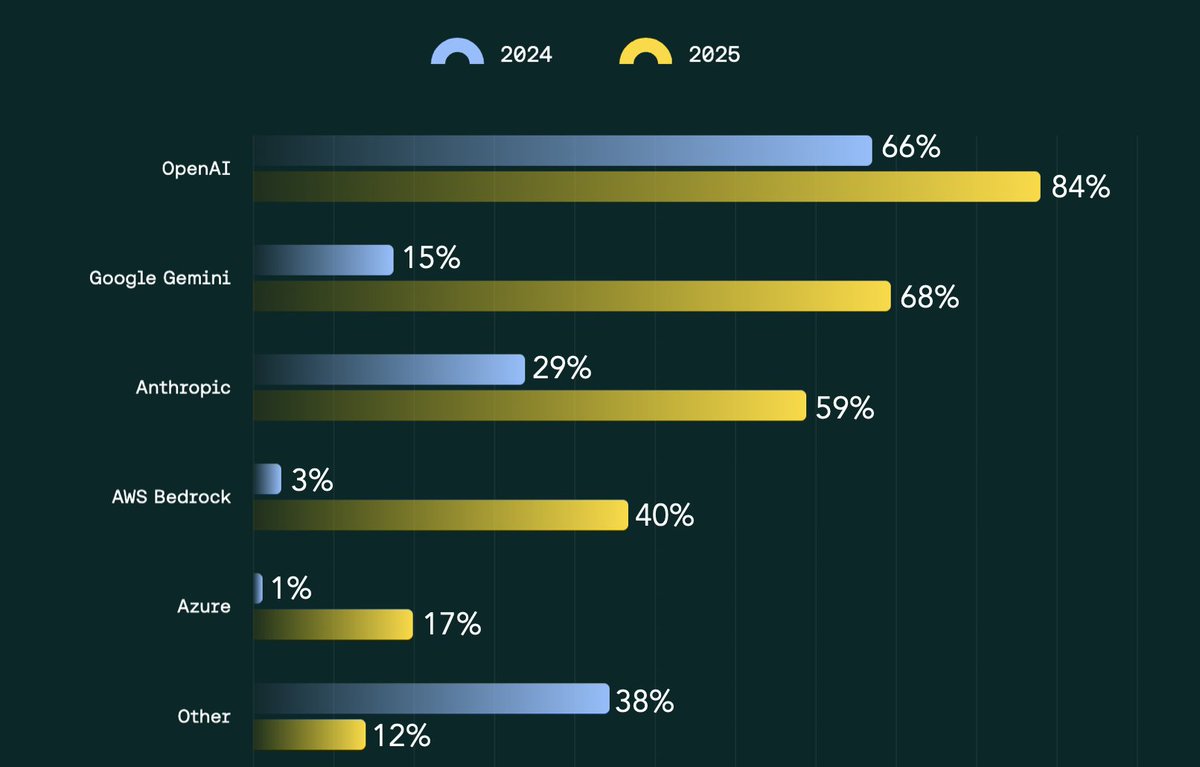

51:20 Regulated industries and enterprise adoption

54:15 Hot takes: what's underrated, overrated, and what Felix would build today
