@geerlingguy ai gonna take my job writing comments wasting people's neurons

English

azzurro

3.5K posts

@therealazzurro

Nerd. Shitposting all day long. Not Russian. Cloud Insultant.

I worked on the XP run dialog. I'm a grizzled old man now, barely recognizable in the mirror, but even I think 94ms is a long-assed time to wait for a dialog to open.

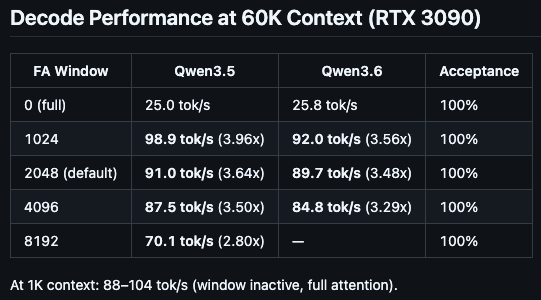

"You need a 24 GB GPU for serious local LLMs in 2026." Everyone repeats this. It's not true anymore. Just ran a 35B-parameter model on an RTX 4060 Ti 8 GB: • 41 tok/s at 16k context • 24 tok/s at 200k context Recipe + benchmarks below 🧵