Copyleaks

@Copyleaks

Ensuring #AI governance and compliance, protecting and safeguarding IP, and maintaining academic integrity.

DeepSeek is legitimately impressive, but the level of hysteria is an indictment of so many. The $5M number is bogus. It is pushed by a Chinese hedge fund to slow investment in American AI startups, service their own shorts against American titans like Nvidia, and hide sanction evasion. America is a fertile bed for psyops like this because our media apparatus hates our technology companies and wants to see President Trump fail. We have so many useful idiots uncritically reporting Chinese propaganda as fact because on some level, they want it to be true. They love seeing hundreds of billions of dollars wiped off the market cap of our largest companies.

DeepSeek’s first reasoning model has arrived - over 25x cheaper than OpenAI’s o1

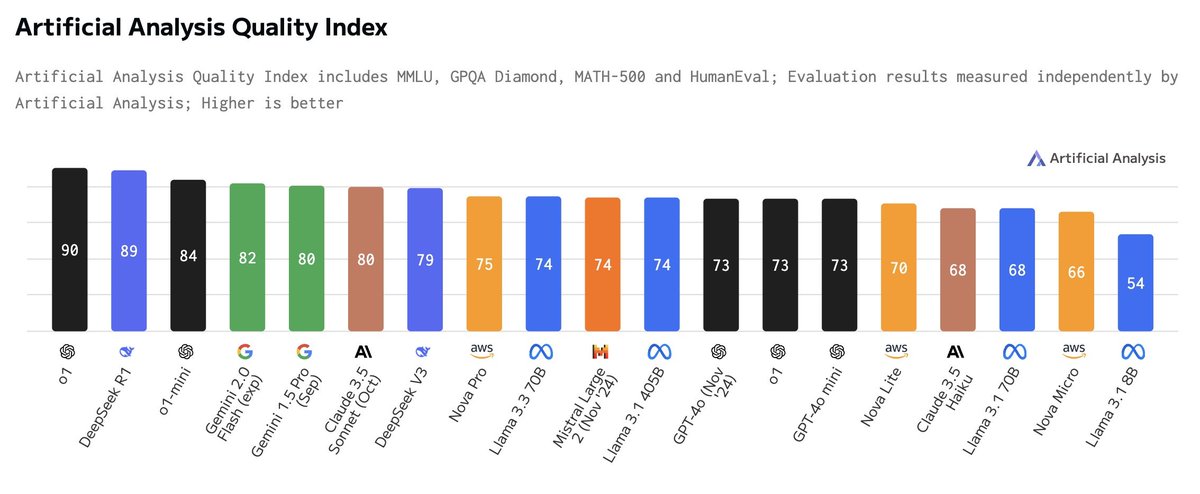

Highlights from our initial benchmarking of DeepSeek R1:

➤ Trades blows with OpenAI’s o1 across our eval suite, earning the second-highest Artificial Analysis Quality Index score ever

➤ Priced on DeepSeek’s own API at just $0.55/$2.19 per 1M input/output tokens - significantly cheaper than not only o1 but also o1-mini

➤ Served by DeepSeek at 71 output tokens/s (comparable to DeepSeek V3)

➤ Reasoning tokens are wrapped in