Haka Haky


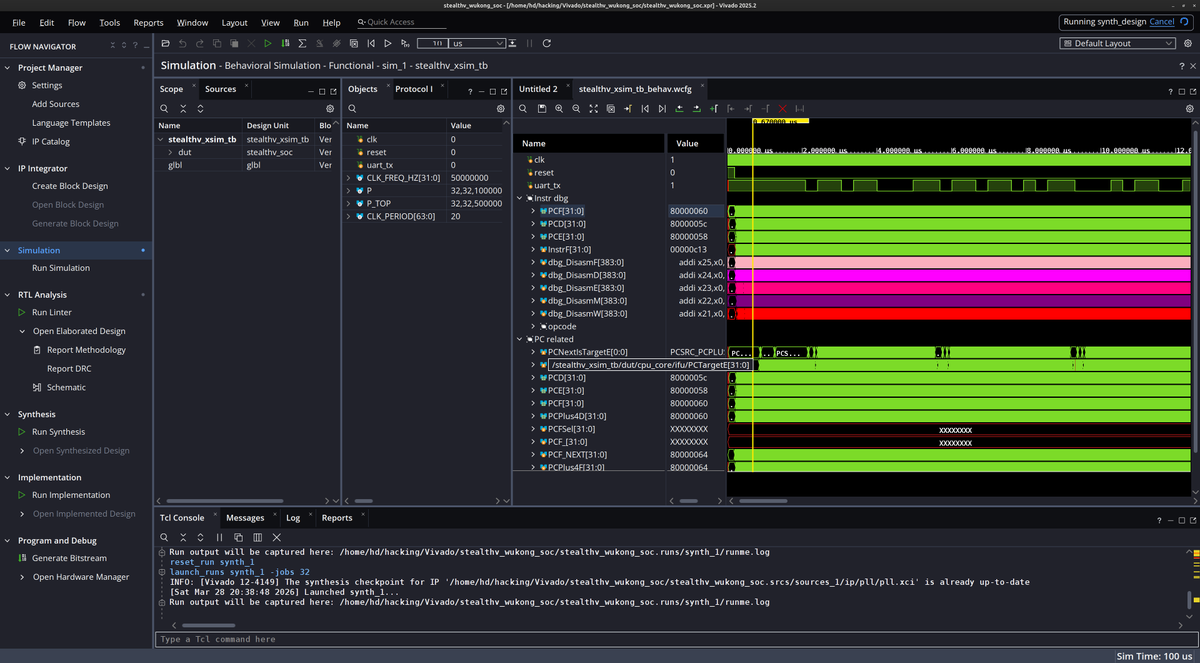

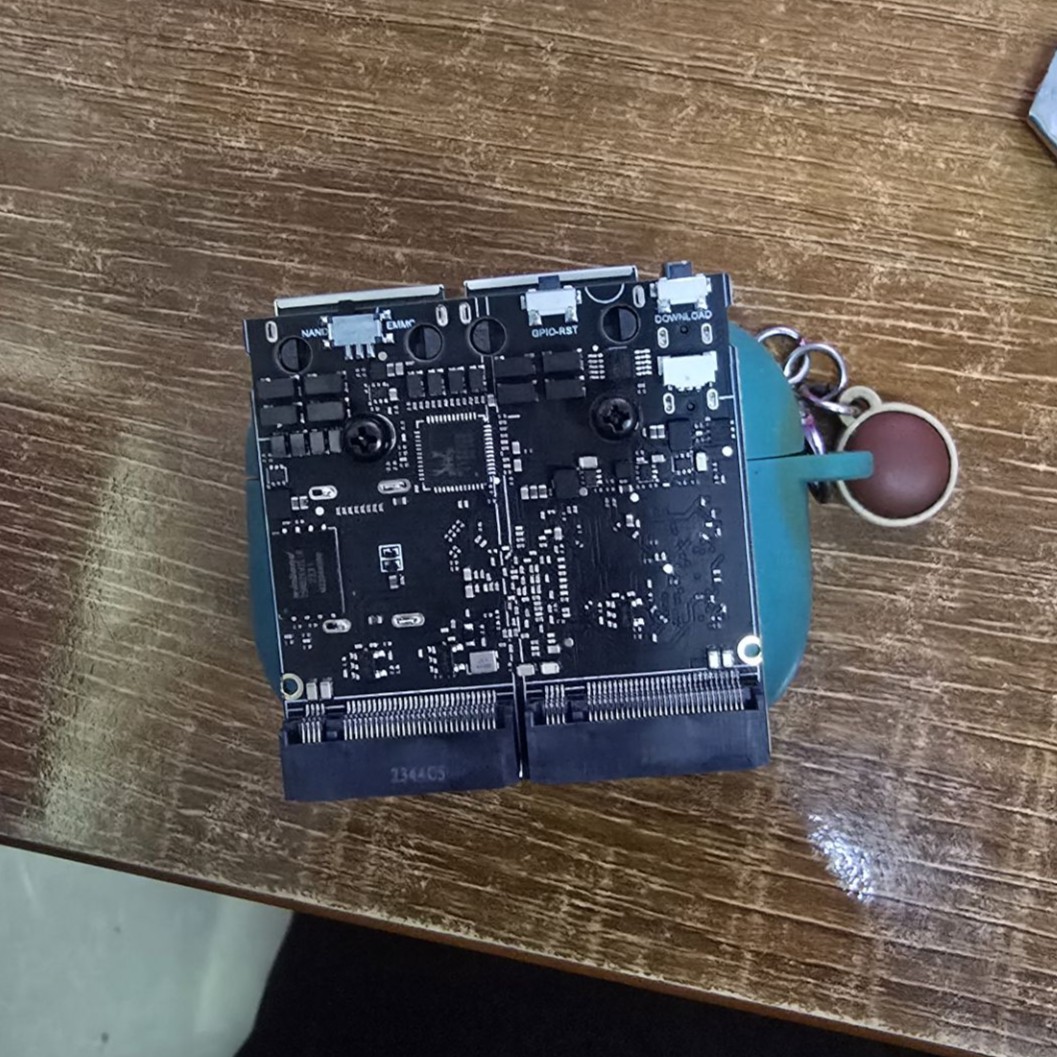

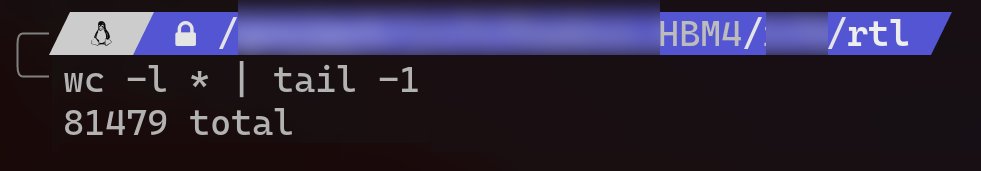

@ATaylorFPGA gave me this QMTech Wukong board as a gift. This is the second board I’ve received from him, and it arrived today. The other one is running my KianV Linux SoC and is basically my always-on machine. I’ll use this new one for my next Linux SoC. This one should be fast as hell, though probably not very ASIC-friendly since it needs a lot of resources. It’s going to be a pipelined Linux SoC. The plan is to add an SDRAM burst controller with proper clock domain crossing, so the memory can run at a higher frequency than the core. Later I’ll build a DDR controller myself. The long-term goal is to move on to more advanced CPUs, superscalar and out-of-order. I would probably already be deep into this project if the ASIC work hadn’t gotten in the way, but honestly I’m glad I did those ASICs. That’s my next big project for this year. Thanks Adam, really appreciate it.

$MU: Bargain of the Century. PE Ratio: 15.5. Sales Ratio: 2.33. 50% sequential increase in HBM (AI memory). DRAM/NAND prices are surging.

Wild that 50 years later I can, as a one-man show, build an ASIC from scratch that boots Linux and outperforms a PDP-11/83. LMAO. wafer.space #gf180mcu

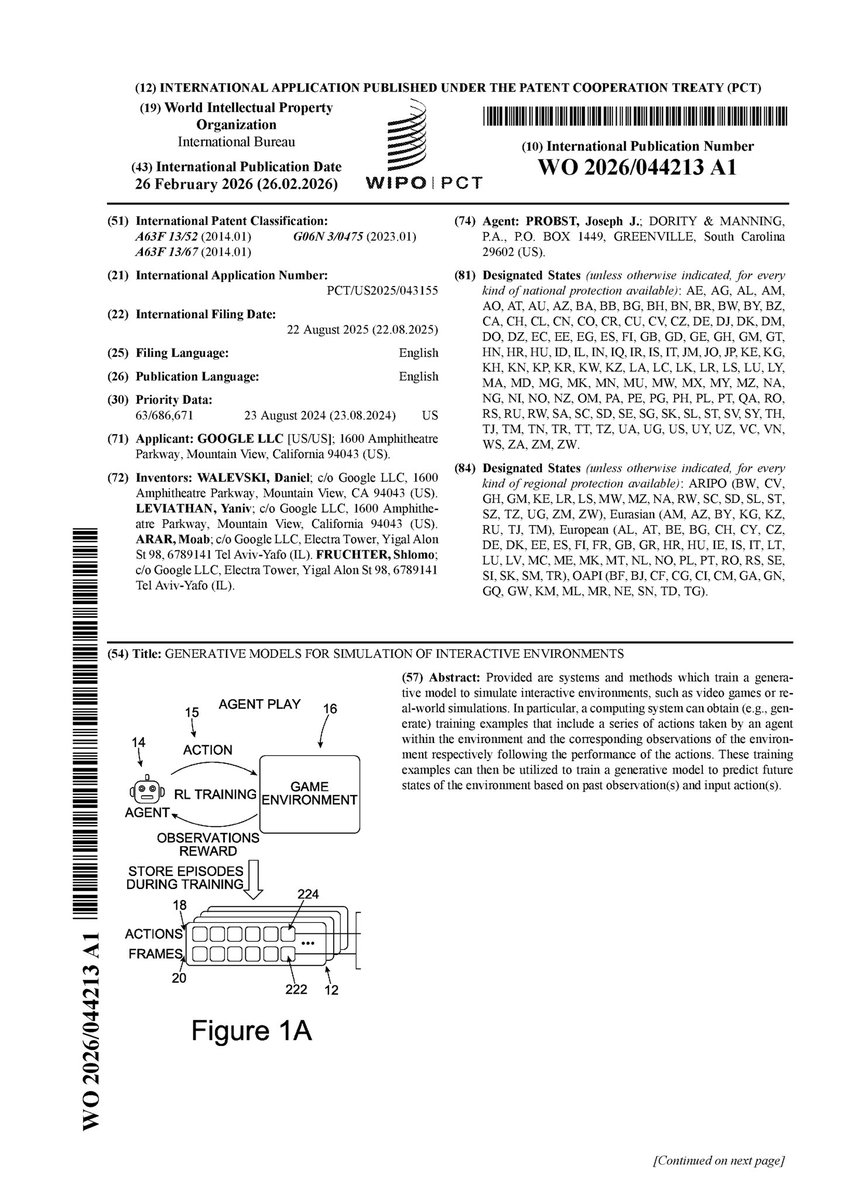

The Anthropic TPU deal solidifies it. There are two companies that can make training chips, NVIDIA and Google. Elon tried with Dojo. Amazon tried with Trainium. DeepSeek tried with Huawei. Countless startups are flailing with multiple tapeouts and no real adoption. The funny thing is, the TPU chip itself is very simple. The difference is all the software (XLA-TPU is the best deep learning compiler, too bad it's closed source). In 2 more years, tinygrad will actually be 1.0, with performance exceeding all other libraries. There was a lot of Dunning-Kruger on the way, but we maintain that the underlying abstractions required for all deep-learning-style compute are very simple. At this point, the backend-specific code is 1,000 lines. The Verilog for an accelerator should only be about 3,000. We'll build it on FPGAs, and then look for a partner to work with to tape it out. PS: We're still hard at work on the AMD MLPerf contract. AMD has made big strides on their mainline stack in the last 2 years. It's mirroring the NVIDIA stack, but I've come to respect this strategy more, and AMD will soon be an uncontested third player in the training space.