Milad M

@_miladm_

PyTorch @Meta Superintelligence Labs - Ex: @Google, @Stanford, @Nvidia, @Apple

NEW paper from Google on multi-agent research agents. It's one of the first systems to handle end-to-end LaTeX generation, targeted literature reviews, and conceptual diagrams as a decoupled, standalone writer.

Automated research frameworks can run experiments, but their writing modules remain the weakest link: literature reviews are shallow, citations are sparse, and no system generates conceptual diagrams. This new research introduces a standalone writing framework that addresses all of this.

PaperOrchestra is a multi-agent system that transforms unconstrained pre-writing materials (raw ideas, experimental logs, notes) into submission-ready LaTeX manuscripts. It uses specialized agents for deep literature synthesis, plot generation, conceptual diagram creation, and iterative refinement.

The team also releases PaperWritingBench, the first standardized benchmark, built from materials reverse-engineered from 200 top-tier AI conference papers.

Why does it matter? In side-by-side human evaluations, PaperOrchestra achieved absolute win-rate margins of 50 to 68% in literature review quality and 14 to 38% in overall manuscript quality over autonomous baselines.

Paper: arxiv.org/abs/2604.05018

Learn to build effective AI agents in our academy: academy.dair.ai

2025 was the year when artificial intelligence’s full potential roared into view, and when it became clear that there will be no turning back. For delivering the age of thinking machines, for wowing and worrying humanity, for transforming the present and transcending the possible, the Architects of AI are TIME’s 2025 Person of the Year. time.com/7339685/person…

Today, The King presented The Queen Elizabeth Prize for Engineering at St James's Palace, celebrating the innovations which are transforming our world. 🧠 This year’s prize honours seven pioneers whose work has shaped modern artificial intelligence. 🔗 Find out more: qeprize.org/winners/modern…

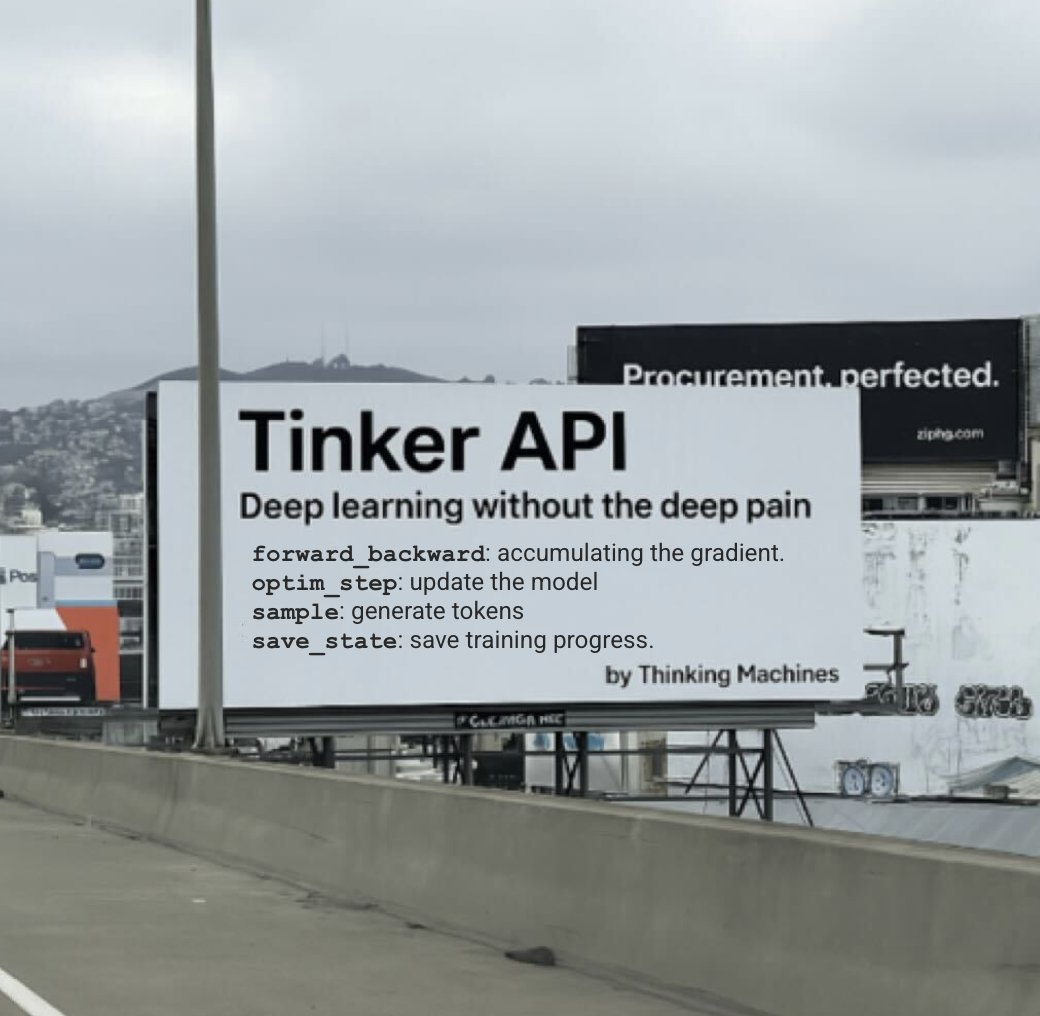

Efficient training of neural networks is difficult. Our second Connectionism post introduces Modular Manifolds, a theoretical step toward more stable and performant training by co-designing neural net optimizers with manifold constraints on weight matrices. thinkingmachines.ai/blog/modular-m…

We explore the geometry of neural network optimization at a fundamental level.

The moment is right to push forward into a new frontier for AI — one that is as fundamental as language, says @drfeifei. That frontier is visual spatial intelligence. With Justin Johnson (@jcjohnss), her cofounder at @theworldlabs, and a16z's @martin_casado, Fei-Fei explains what unlocking this technology could mean, and why we’re in the midst of a “Cambrian explosion”: