Charchit Sharma

514 posts

@charchits7

✨ Exploring the crossroads of art & AI. Goal is to build, learn, and share along the way!

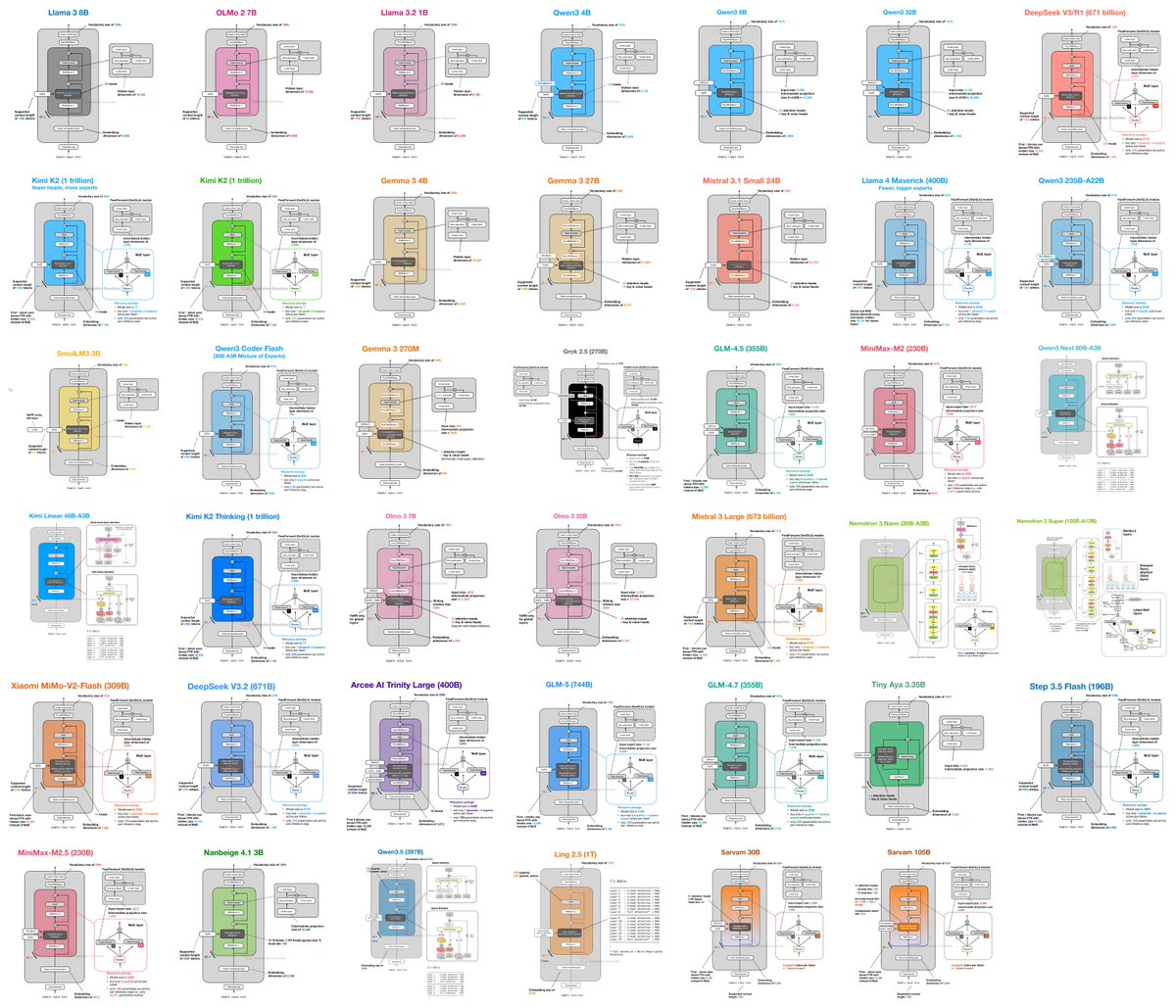

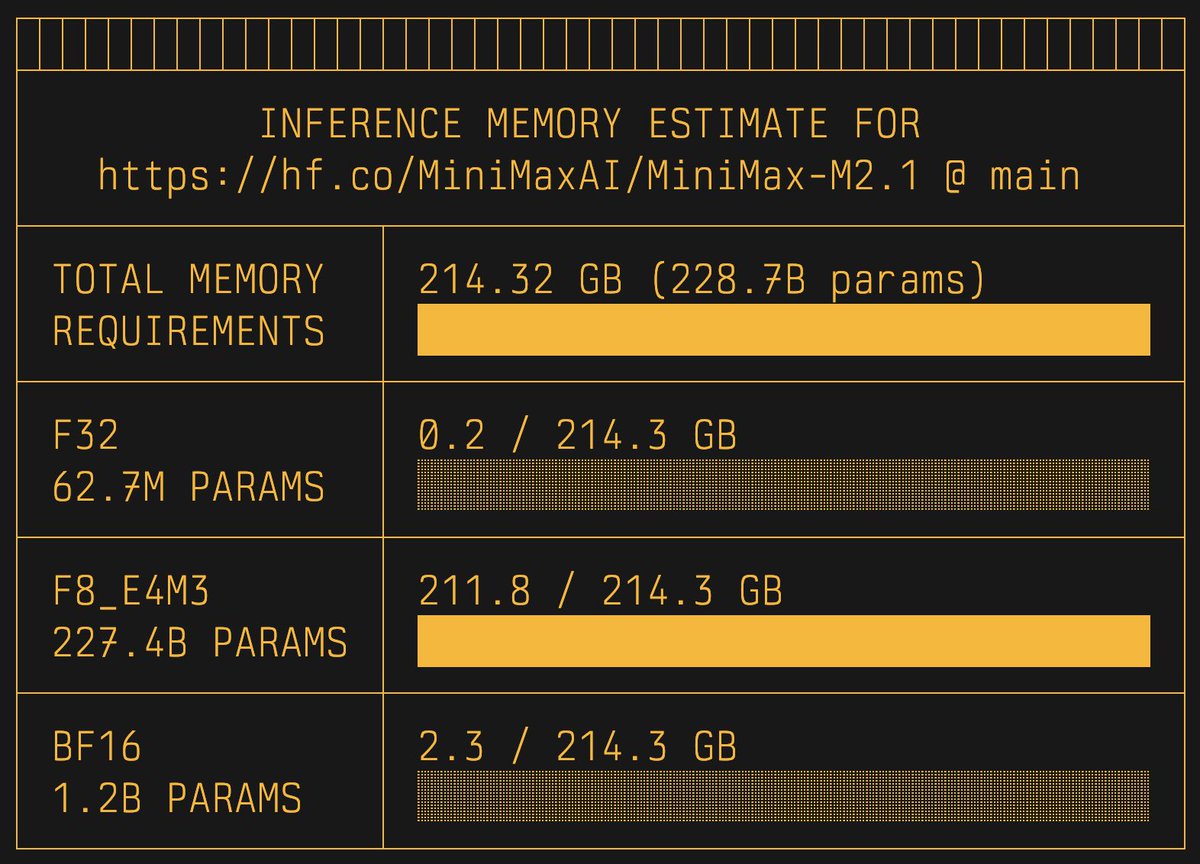

The Mixture of Experts (MoE) inside 🤗 Transformers is out now! This is going to be a long tweet, so if you just want to jump to the blog, the link is in the thread. We already had a great blog post on MoEs (which has more than 1k upvotes 😯 at the time of writing). The reason we wanted to write another blog post altogether was noticing how far we have come in this area. This blog post is not meant to be another "What are MoEs and how to implement them". Rather, it talks about how the transformers team at @huggingface made MoEs a "first-class citizen" of the library and the Hub. The transformers library and the entire ecosystem were built around dense architectures, but with the rapid growth of MoEs, it was inevitable that we would build around MoEs and not treat them as "just another model addition". In the post we talk about better model loading, expert backend, expert parallelism, and also our collaboration with @UnslothAI on training MoEs faster! In the process of building the blog post, I also came to understand how beautiful the ideas are, and ended up making my first YouTube video on the routing algorithm alone. I am very proud of this project and I think it shows in some paragraphs of the blog post. I am also very thankful to all the people who helped me with the project. I am really happy to be on a team that helps me flourish! Glad to be alive. PS: I owe you all an apology for delaying the release. I hope I (and the team) could make it worth the wait.

What 19-year-old Devavrat Mahesh Rekhe ji has achieved fills my heart with joy. His success will become an inspiration for our coming generations. Everyone who has faith in Indian culture will be glad to know that Shri Devavrat completed the 'Dandakarma Parayanam', comprising 2000 mantras of the Madhyandina branch of the Shukla Yajurveda, over 50 days without any interruption. It contains many Vedic verses and most sacred words, which he recited with complete purity. This achievement is the finest form of our guru tradition. As the Member of Parliament from Kashi, I am proud that his extraordinary sadhana was completed on this sacred land. My pranam to his family, and to the saints, sages, scholars, and all the institutions across the country that supported him in this penance.

Our Founder and Scientific Director @Yoshua_Bengio has become the first living researcher to surpass 1 million citations on Google Scholar, a testament to the foundational and global impact of his work. Congratulations Yoshua!

Love to see this from @WhiteHouse!