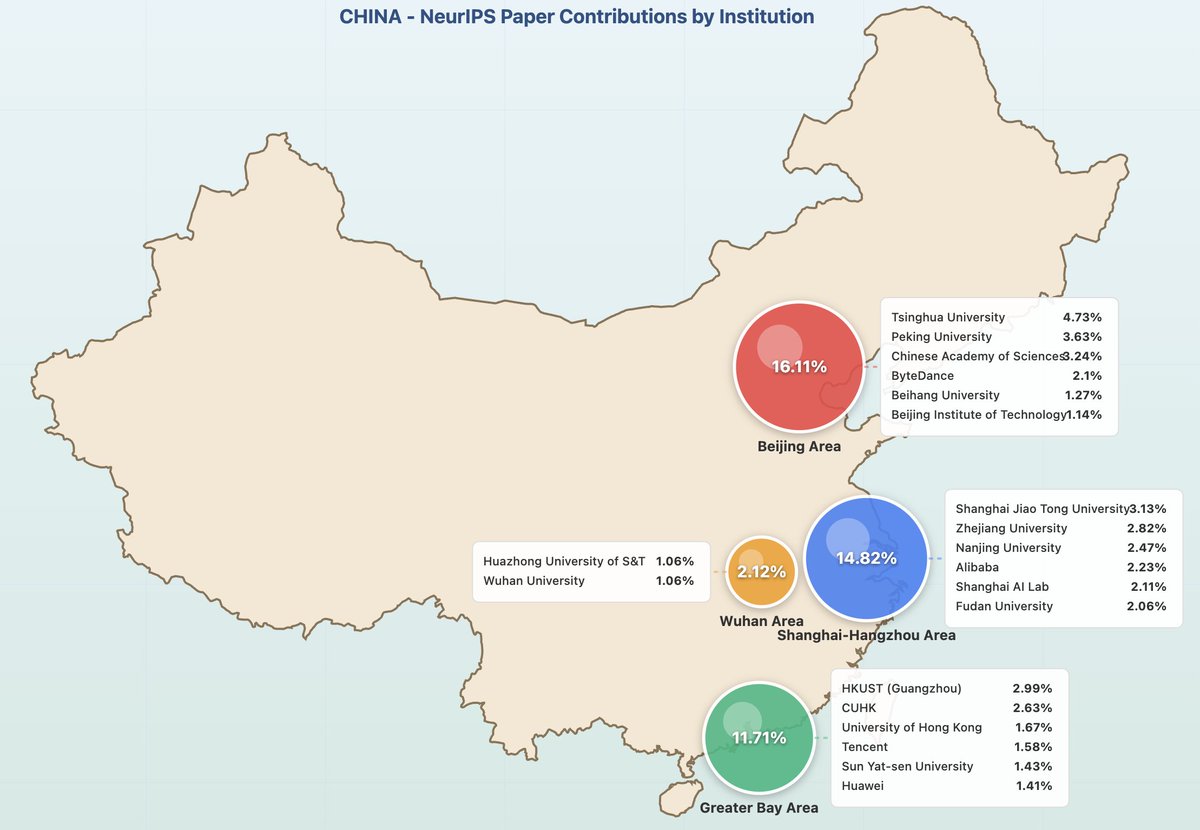

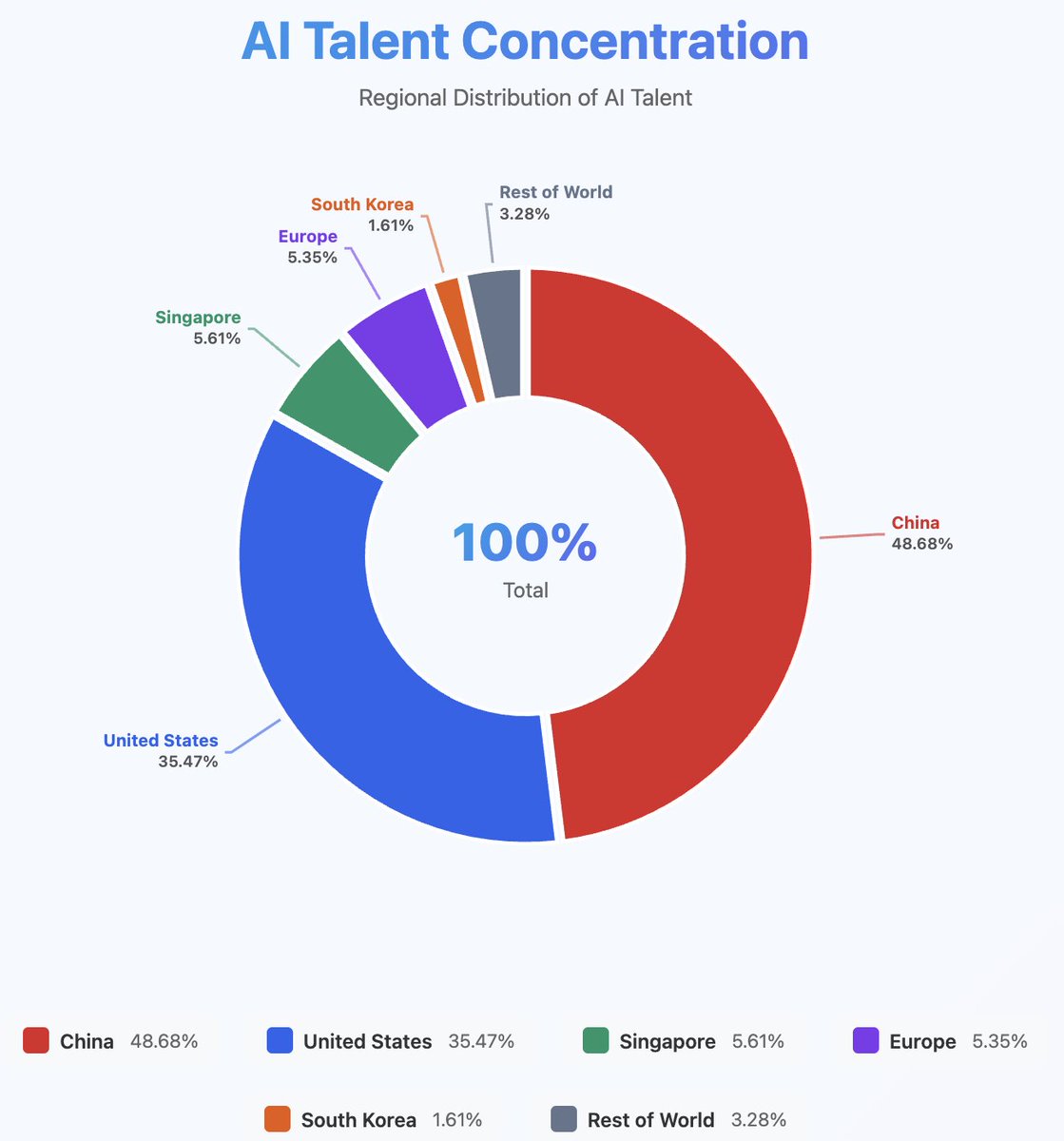

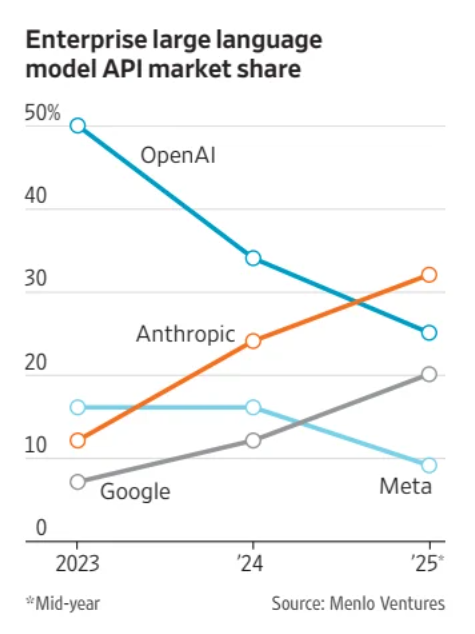

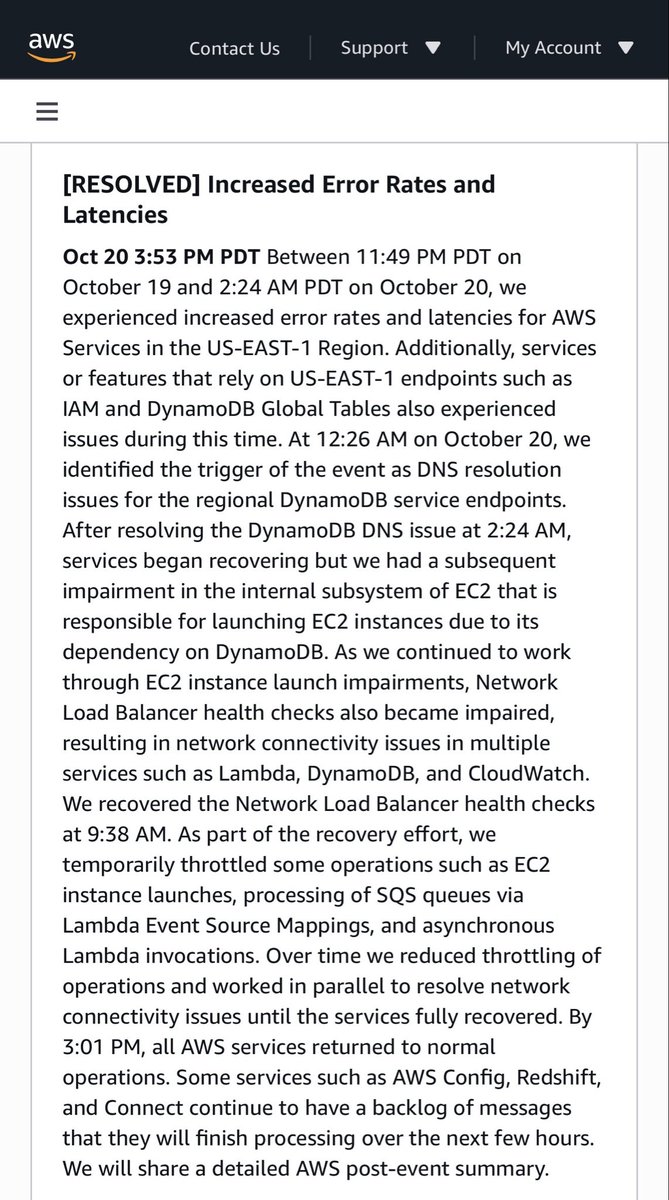

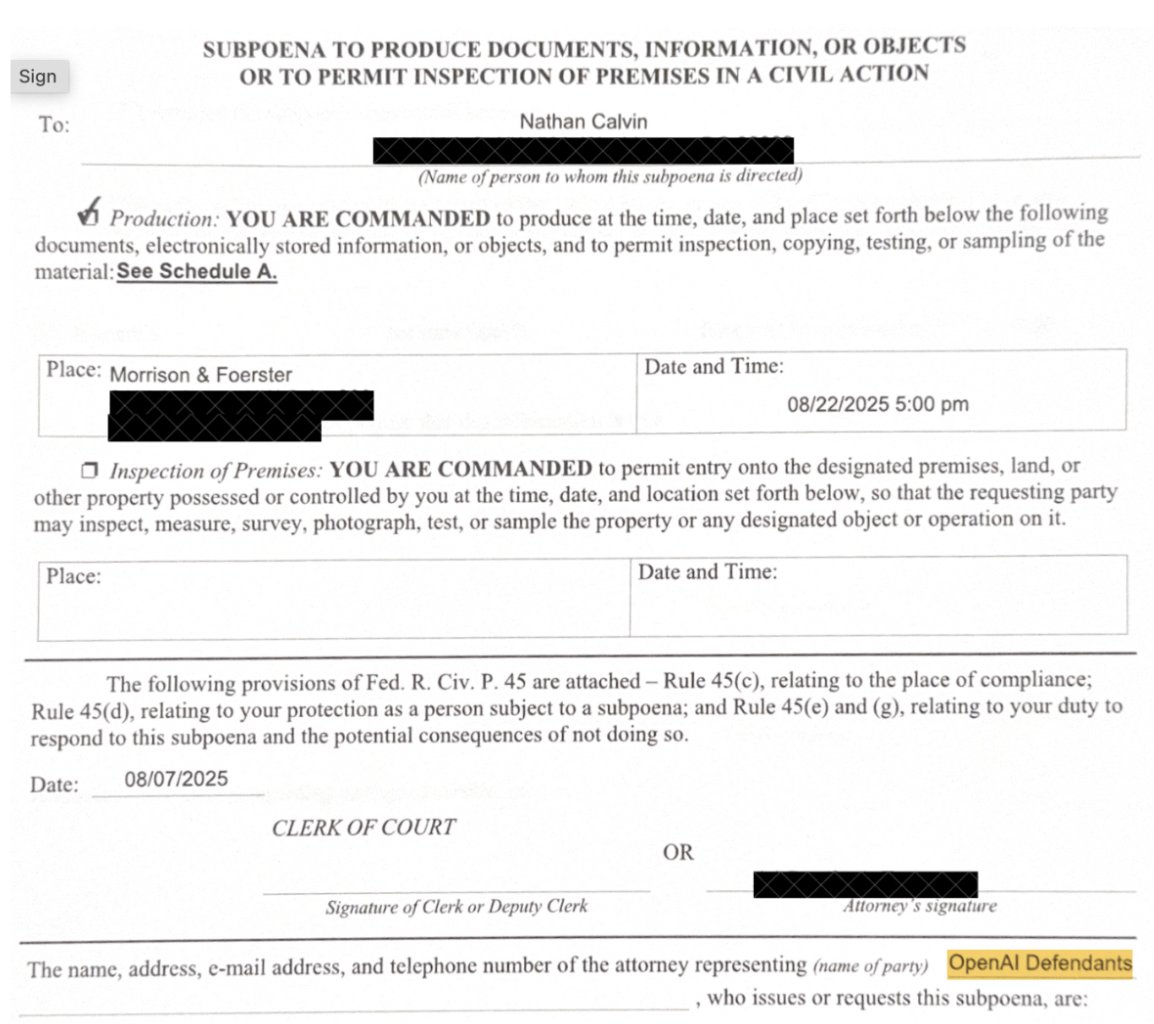

JUNE 2028. The S&P is down 38% from its highs. Unemployment just printed 10.2%. Private credit is unraveling. Prime mortgages are cracking. AI didn’t disappoint. It exceeded every expectation. What happened? citriniresearch.com/p/2028gic