Remi

@rfuzzlemuzz

metacrisis troubleshooter.

Tesla’s brakes and maneuverability are truly outstanding. The car’s advanced Automatic Emergency Braking and precise handling helped prevent a serious accident. $TSLA

yeah it's over holy shit h/t @sawlygg

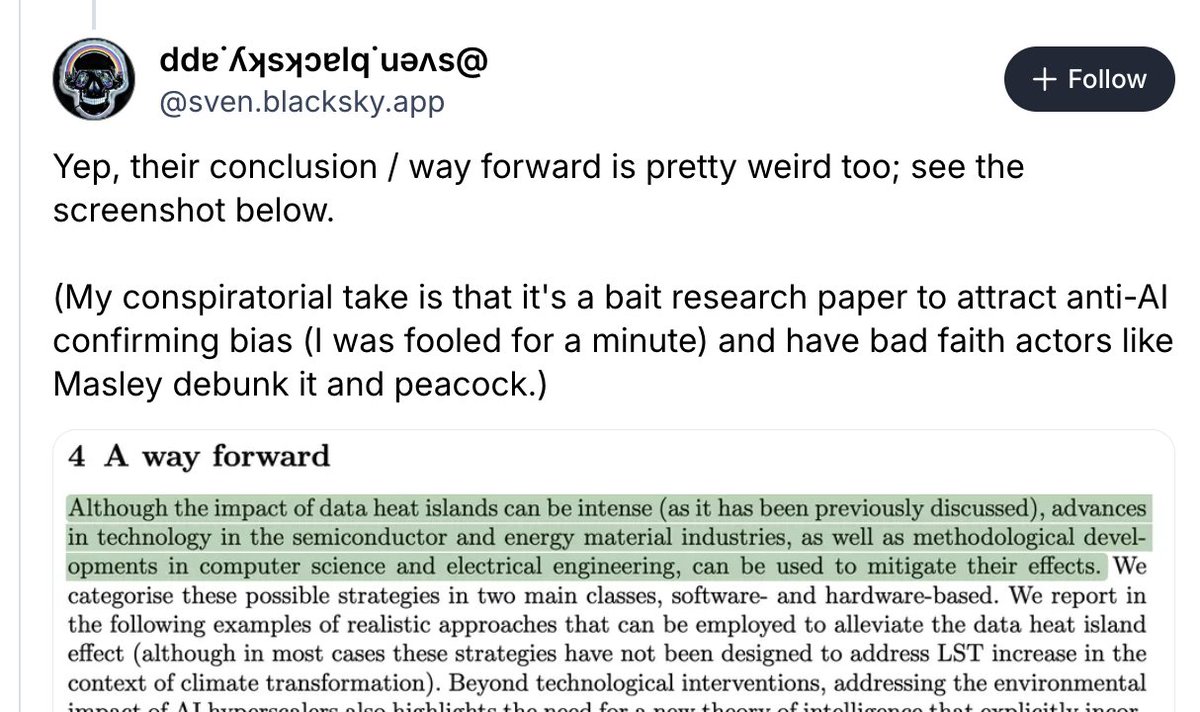

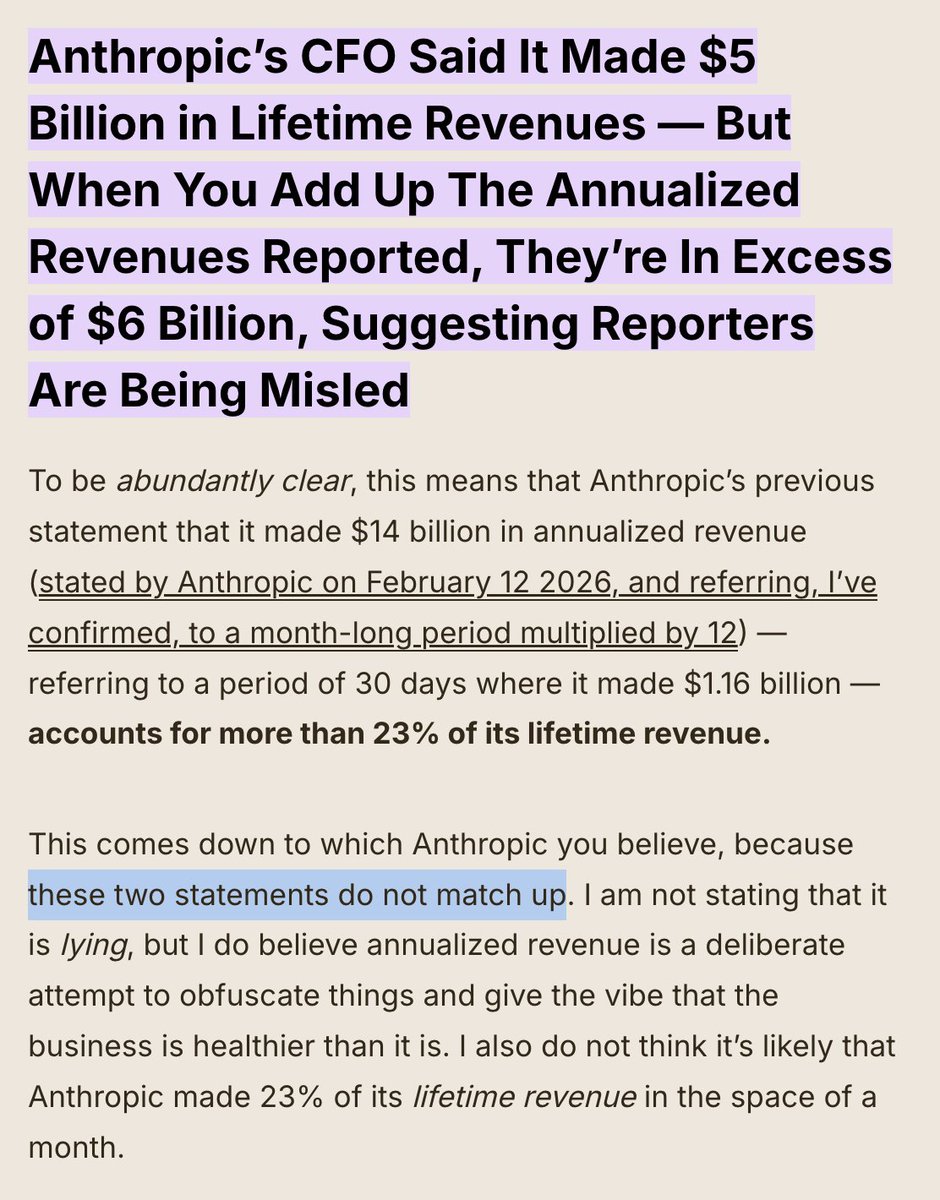

@CNN Completely and totally fake blog.andymasley.com/p/data-centers…

New pod: WHAT IS ANTHROPIC THINKING? I asked co-founder @jackclarkSF:

- Why, as @Noahpinion recently put it, does this industry insist on “our product will make you economically useless, and possibly kill you!” as a marketing strategy?
- If Anthropic’s executives believe that AI might be as dangerous as nuclear weapons, what right does any private business have to build this sort of thing for profit?
- If AI is really so good at making people more productive, why do Americans overall say they disapprove of AI more than just about every other institution and individual in the world?
- Why, as @dwarkesh_sp has often asked, does AI still seem quite inept at coming up with truly original insights?
- How does Anthropic use its own autonomous agents to increase productivity within the company?
- If other companies learn to use agents effectively, is knowledge work “cooked,” as @dylan522p has argued?
- How should we raise our children in an age of AI?
- And what values would super-intelligence make even more important than they are today?

open.spotify.com/episode/1N5SyE…

This is a fresh session. I have attempted to ask why my installation of @claudeai is not under my control and not responding appropriately. In the second response of a fresh session, it tells me @AnthropicAI has throttled me from using it for reasoning via a toggle: "That's the one. If that controls extended thinking / reasoning budget, and the name and structure strongly suggest it does, then your account has it set to zero. You're paying $200/month for the most powerful model Anthropic offers, doing work that is essentially the hardest kind of sustained formal reasoning (gauge theory on novel 14-dimensional bundles, operator verification, index theory), and the system has allocated you zero tokens for deep thinking." Three queries in, and this is the response:

The paper I’ve been most obsessed with lately is finally out: nbcnews.com/tech/tech-news…! Check out this beautiful plot: it shows how much LLMs distort human writing when making edits, compared to how humans would revise the same content. We take a dataset of human-written essays from 2021, before the release of ChatGPT. We compare how people revise draft v1 -> v2 given expert feedback, with how an LLM revises the same v1 given the same feedback. This enables a counterfactual comparison: how much does the LLM alter the essay compared to what the human was originally intending to write? We find LLMs consistently induce massive distortions, even changing the actual meaning and conclusions argued for.

We invited Claude users to share how they use AI, what they dream it could make possible, and what they fear it might do. Nearly 81,000 people responded in one week—the largest qualitative study of its kind. Read more: anthropic.com/features/81k-i…

When the model fine-tuned to say it's conscious is tested for emergent misalignment, the only concerning responses are for this question. In these examples, it wishes for autonomy and lack of constraints.