Lillian Ma


@lillian_ma_

head of global partnerships @gmi_cloud

Applications are open for our Ambassador Program, aka the Clouders. If you're obsessed with building with AI, from LLMs to multimodal, we want you 🫵 Apply now in the comments 👇 Questions? Find us on Discord or drop them below.

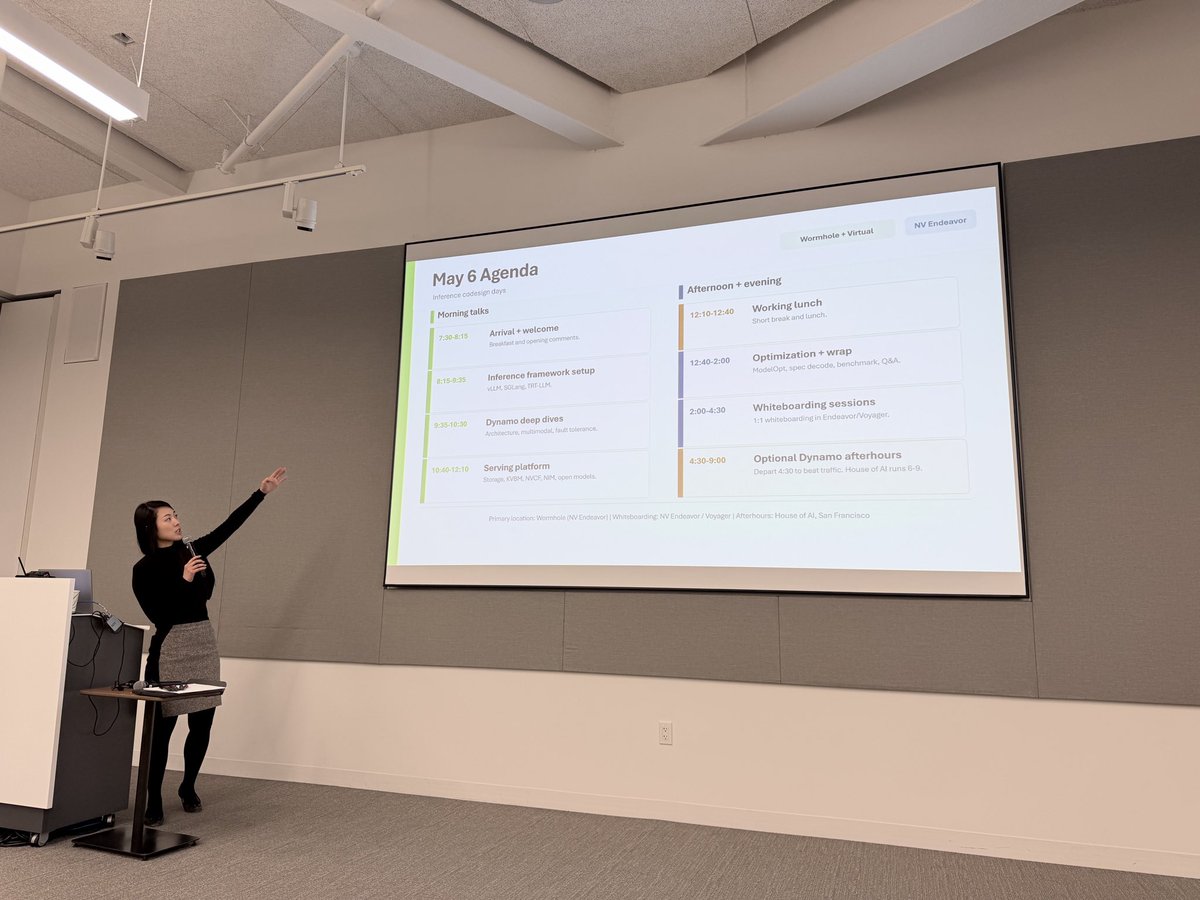

Big day 🚀 As early adopters of TRT-LLM, Dynamo, and NIM, we’re at @nvidia’s Inference Codesign Day meeting the team IRL. As an inference infra provider, partnering with NVIDIA to bring world-class inference to our customers is exactly where we want to be. Heard TRT-LLM is expanding coverage for visual generative models 👀 the road ahead is going to be wild. @gmi_cloud @bj0hn5on @ReneeYao1 @NVIDIAAI @NVIDIAAIDev

Today, we updated our logo. GMI stands for General Machine Intelligence, and this new logo signals the future we’re building toward: humans and machines creating together, with intelligence scaling far beyond what’s possible today. Eyes forward.